NLP | Natural Language Processing Tutorial

Natural Language Processing (NLP) Tutorial

- Introduction to NLP

- Need for Natural Language Processing (NLP)

- History of NLP

- How does NLP work?

- Advantages of NLP

- Disadvantages of NLP

- Components of NLP

- Applications of NLP

- Phases of NLP

- Future of NLP

- NLP with Python

- Why use Python for NLP

- NLP libraries

- NLP APIs

- NLP vs Machine Learning

What is NLP?

Natural Language Processing (NLP) is the field that enables computer programs to understand human language, whether written or spoken. It is the branch of Artificial Intelligence that lets computers process human language. The main purpose of NLP is to read and understand human language and to produce an appropriate output.

Understanding how we communicate is a complex task for a machine. Computers need structured data, but humans speak freely and use words that may have more than one meaning. This makes developing NLP applications very challenging.

NLP uses algorithms to extract information from spoken and written words. For example, the Google Assistant application takes questions from people, either written or spoken, and answers them accordingly.

Need for Natural Language Processing

- It saves time on tasks such as automated text writing and automated speech. It also helps people who cannot write to share their queries by speaking.

- A computer can be more useful if it is capable of communicating with human beings.

- As the amount of online data grows continuously through various social sites, it becomes hard to fetch valuable information from such a large volume of data. NLP techniques can help solve this problem.

- One of the main uses of NLP is machine translation, which helps remove language barriers.

- Machine translation converts information from one language to another. NLP techniques are also very useful for sentiment analysis.

- It also helps non-programmers interact with computer systems and access information from them.

- Large amounts of data used to go to waste, but NLP has provided a way to turn that data into useful information and to drive improvements in businesses.

History of NLP

- Research on NLP began in 1950, after the machine translation investigations of Booth and Richens, when Alan Turing published his article titled “Computing Machinery and Intelligence.”

- In 1954, the Georgetown-IBM experiment demonstrated automatic translation of a set of Russian sentences into English.

- The first international meeting on machine translation was held in 1952, and the second in 1956.

- In 1961, work began on the problems of building data or knowledge bases, influenced by AI. The BASEBALL question-answering system was also introduced in the same year.

- A more advanced system was described in 1968. Compared with the BASEBALL question-answering system, it recognized and provided for the need for inference over the knowledge base when interpreting and responding to language input.

- In the 1980s, work on the lexicon also supported the grammatico-logical approach.

- In the 1990s, data-driven and probabilistic approaches became standard.

- By the 2000s, huge amounts of textual and spoken data had become available.

How does NLP work?

NLP uses various forms of AI, such as neural networks and deep learning models, to find and use patterns in stored data and to improve the quality of results with each new search by the user. For example, when you search Google with a misspelled query and get a response such as ‘Did you mean…?’, that is NLP at work: the system has been trained to infer the user’s intention, overcome the errors, and return results for the query the user most likely meant.
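The ‘Did you mean…?’ behavior can be imitated on a small scale with fuzzy string matching. This is a minimal sketch, not how Google does it: it assumes a tiny hypothetical vocabulary (`VOCABULARY`) and uses Python’s standard `difflib.get_close_matches` to replace each unknown word with its most similar known word. The function name `did_you_mean` is illustrative.

```python
import difflib

# Hypothetical vocabulary of correctly spelled words. A real search engine
# would rely on huge dictionaries and query logs instead.
VOCABULARY = ["natural", "language", "processing", "python", "machine", "learning"]


def did_you_mean(query, vocabulary=VOCABULARY, cutoff=0.6):
    """Return a corrected query, or None if nothing needed correcting."""
    corrected = []
    changed = False
    for word in query.lower().split():
        if word in vocabulary:
            corrected.append(word)
            continue
        # get_close_matches ranks candidates by difflib's similarity ratio
        # (0.0 to 1.0) and drops any candidate scoring below `cutoff`.
        matches = difflib.get_close_matches(word, vocabulary, n=1, cutoff=cutoff)
        if matches:
            corrected.append(matches[0])
            changed = True
        else:
            corrected.append(word)  # no close match: keep the word as typed
    return " ".join(corrected) if changed else None


print(did_you_mean("natrual langauge procesing"))  # → natural language processing
```

A production system would learn corrections from large query logs and take the surrounding words into account, but the core idea of ranking candidate words by similarity is the same.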

The process of NLP can be understood in the following steps:

- Read the written text in the human language.

- Interpret its meaning using its filters.

- Then, translate the result back into human language to provide what we searched for.

Advantages of NLP

There are many advantages of natural language processing, some of which are as follows:

- NLP systems are very time-efficient; they can respond to a query within seconds.

- It provides only the information relevant to the subject.

- It answers questions asked in natural language.

- It helps computers communicate with humans in their own language.

- It brings structure to highly unstructured data sources.

- It can learn from past interactions to handle similar situations in the future.

- Furthermore, the benefits of NLP extend across a wide range of fields.

Disadvantages of NLP

- Sometimes, NLP may not be able to give the correct answer because of ambiguity in the question.

- An NLP system is often built for a limited set of functions, so it may be unable to adapt to new domains and problems.

- Some NLP systems lack user interaction because they do not have a user interface.

- Furthermore, its behavior may be unpredictable.

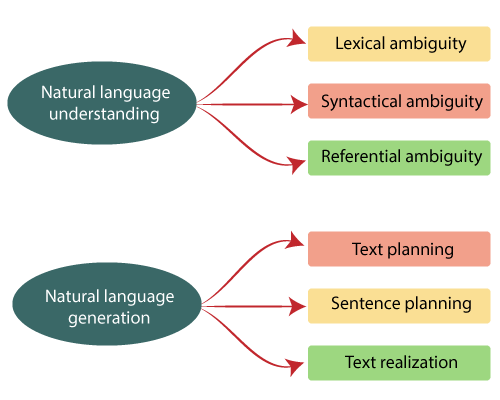

Components of NLP

The components of natural language processing are given below:

1) Natural Language Understanding (NLU), which has to deal with:

- Lexical ambiguity.

- Syntactical ambiguity.

- Referential ambiguity.

2) Natural Language Generation (NLG), which consists of:

- Text planning.

- Sentence planning.

- Text realization.

NLU: It stands for Natural Language Understanding. It primarily focuses on machine reading comprehension, which enables a computer to comprehend the meaning of a body of text. It can be used for categorizing text, gathering news, archiving pieces of text, and analyzing content.

NLG: It stands for Natural Language Generation. It is a subfield of natural language processing. NLG makes data understandable by automatically writing text from it, such as data summaries, financial reports, and product descriptions.

Difficulties in NLP

- Lexical ambiguity: Lexical ambiguity exists at the word level. Because a single word can have more than one meaning, it is very difficult for a computer to choose the right one. It is also called semantic ambiguity. It is sometimes used deliberately to create jokes and other kinds of wordplay. For example, the word “document” can be a verb as well as a noun.

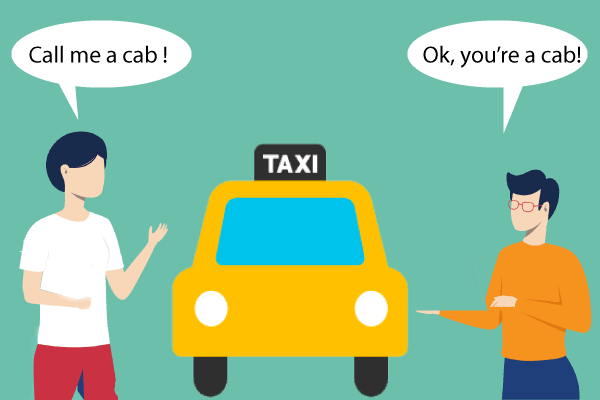

- Syntactical ambiguity: Syntactical ambiguity occurs when a sentence or sequence of words can have two or more possible meanings because its structure can be interpreted in more than one way.

For example, if you say to your friend “call me a cab,” you usually mean “phone for a taxi for me,” but the sentence can also be read as “call me by the name ‘a cab.’”

For a better understanding, look at the picture given below.

- Referential ambiguity: Referential ambiguity occurs when we use a pronoun that could refer to more than one thing.

For example: “Mohit went to Rohan. He said, I am helpless.” A human can easily infer that “He” most likely means Mohit, so Mohit is the one who is helpless, but a computer cannot easily tell whether “He” (and therefore “I”) refers to Mohit or Rohan.
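One classic way to tackle lexical ambiguity is word-sense disambiguation with the simplified Lesk algorithm: choose the sense whose dictionary definition (gloss) shares the most words with the surrounding sentence. The sketch below assumes a hypothetical, hand-written sense inventory for the word “bank” (`SENSES`) and a small stopword list; a real system would draw its glosses from a lexical database such as WordNet.

```python
import re

# Hypothetical, hand-written sense inventory: sense label -> gloss (definition).
# A real system would take its glosses from a lexical database such as WordNet.
SENSES = {
    "bank": {
        "bank/finance": "an institution that accepts deposits of money and lends money",
        "bank/river": "the sloping land beside a river or lake",
    },
}

# Common function words carry no sense information, so they are ignored.
STOPWORDS = {"a", "an", "the", "of", "or", "and", "to", "that", "in", "on",
             "at", "i", "we", "my", "he", "she"}


def content_words(text):
    """Return the set of lowercase words in `text`, minus stopwords."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def simplified_lesk(word, sentence):
    """Return the sense of `word` whose gloss overlaps most with the sentence.

    Ties keep the sense listed first in SENSES.
    """
    context = content_words(sentence) - {word}
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & content_words(gloss))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


print(simplified_lesk("bank", "I went to the bank to deposit money"))  # → bank/finance
print(simplified_lesk("bank", "We sat on the bank of the river and watched the water"))  # → bank/river
```

Because the overlap is counted on exact word forms, “deposit” in the first sentence does not match “deposits” in the gloss; stemming the words first would make the comparison more robust.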

The three stages of Natural Language Generation work as follows:

- Text planning: Text planning refers to fetching the relevant information from the knowledge base.

- Sentence planning: It chooses and arranges the words to form a meaningful sentence. For example, “I am a boy” is a meaningful sentence, but “boy a I am” is not.

- Text realization: It maps the planned sentence into its final structure, correcting it where needed, and then provides the output.
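The three NLG stages can be illustrated with a minimal template-based generator. In this sketch, which assumes a hypothetical knowledge base of quarterly sales figures, `plan_text` selects the relevant facts, `plan_sentence` chooses and orders the words, and `realize` produces the final sentence. All names and data are illustrative; real NLG systems use much richer grammars or neural models.

```python
# Hypothetical knowledge base for one product (revenue in millions of dollars).
KNOWLEDGE_BASE = {
    "product": "Widget",
    "quarter": "Q2",
    "revenue": 1.2,
    "prev_revenue": 1.0,
    "warehouse": "North",  # irrelevant to a sales summary
}


def plan_text(kb):
    """Text planning: select (and derive) only the facts worth reporting."""
    change = (kb["revenue"] - kb["prev_revenue"]) / kb["prev_revenue"] * 100
    return {"product": kb["product"], "quarter": kb["quarter"],
            "revenue": kb["revenue"], "change": change}


def plan_sentence(facts):
    """Sentence planning: choose the words and arrange them in a fixed order."""
    verb = "rose" if facts["change"] >= 0 else "fell"
    return [facts["product"], "revenue", "in", facts["quarter"], verb,
            f"{abs(facts['change']):.0f}", "percent", "to",
            f"${facts['revenue']:.1f}", "million"]


def realize(words):
    """Text realization: join the words, capitalize, and add punctuation."""
    sentence = " ".join(words)
    return sentence[0].upper() + sentence[1:] + "."


print(realize(plan_sentence(plan_text(KNOWLEDGE_BASE))))
# → Widget revenue in Q2 rose 20 percent to $1.2 million.
```

Note that the warehouse fact never reaches the output: deciding what to leave out is as much a part of text planning as deciding what to include.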

Applications of NLP

1) Machine Translation: Machine translation helps overcome language barriers by translating content such as technical manuals and support content at a significantly reduced cost. Machine translation technology does not merely translate words; it also tries to understand the meaning of sentences in order to provide the right translation.

2) Automatic summarization: Automatic summarization extracts the key pieces of information from large amounts of data. Besides condensing data, it can also capture the emotional meaning inside the information, for example when gathering data from social media. It reduces redundancy across multiple sources and maximizes the variety of the content obtained.

3) Speech recognition: Speech recognition is a field of computing that develops technologies enabling computers to recognize spoken language and translate it into text. It is also called automatic speech recognition or speech-to-text. Speech recognition is used in home automation, mobile telephony, hands-free computing, virtual assistants, video games, etc. Neural networks play a very important role in this area.

4) Spell checking: NLP is also used in spell checking. Neural networks help in designing tools that detect misspelled words. These tools or systems can be trained on the particular corrections that a writer makes. By combining several spell-checking methods, they resolve many problems related to misspelled words.

5) Sentiment analysis: The purpose of sentiment analysis is to identify the opinions expressed across many records. It can also detect sentiment in posts where the emotion is not clearly expressed. Organizations use NLP applications to identify the sentiment or opinions of customers, such as what customers think about their products and services.

6) Text classification: Text classification assigns predefined categories to documents, which helps organize them so that you can find the required information or simplify some activities. An email spam filter is a common example.

7) Question answering: The need for NLP is increasing day by day due to improvements in speech-recognition technology and voice-input applications. Question answering (QA) applications, such as OK Google, virtual assistants, and chatbots, are becoming more and more popular in various fields. A QA application has the ability to answer a human request. It is one of the main applications of natural language processing research.

8) Information extraction: Information extraction plays a vital role in automatically fetching structured information, such as entities and the relationships between them, from unstructured or semi-structured documents.
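Sentiment analysis can be sketched with a lexicon-based scorer: each known word contributes a positive or negative polarity, and the sum decides the label. The word lists below (`LEXICON`, `NEGATORS`) are a hypothetical miniature; practical systems use large curated lexicons or trained classifiers, and handle negation far more carefully than simply flipping the next word.

```python
import re

# Hypothetical miniature lexicon: +1 for positive words, -1 for negative words.
LEXICON = {"good": 1, "great": 1, "love": 1, "excellent": 1,
           "bad": -1, "poor": -1, "hate": -1, "terrible": -1}
NEGATORS = {"not", "never", "no"}


def sentiment(text):
    """Label text as positive, negative, or neutral by summing word polarities."""
    score = 0
    negate = False
    for word in re.findall(r"[a-z]+", text.lower()):
        if word in NEGATORS:
            negate = True  # flip the polarity of the next word only
            continue
        polarity = LEXICON.get(word, 0)
        score += -polarity if negate else polarity
        negate = False
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this phone, the camera is excellent"))      # → positive
print(sentiment("The battery is not good and the screen is poor"))  # → negative
```

This simple rule misses cases such as “not very good”, where the negator is followed by an intensifier, which is one reason trained models usually outperform hand-written lexicons.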

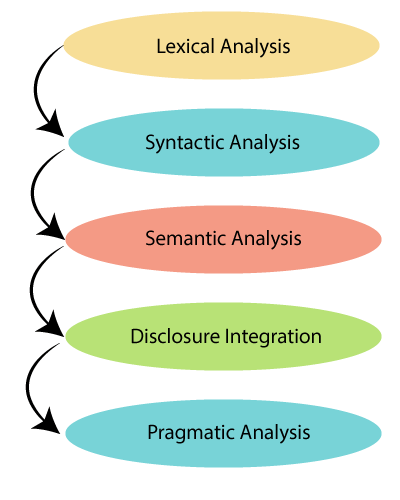
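Automatic summarization in its simplest extractive form scores each sentence by how frequent its content words are across the whole text, then keeps the top-scoring sentences in their original order. This is a sketch of that frequency-based approach, assuming a small hypothetical stopword list; it does not handle redundancy across sources or emotional meaning the way more advanced summarizers do.

```python
import re
from collections import Counter

# Hypothetical stopword list; real systems use a much longer one.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "is", "was", "it"}


def content_words(text):
    """Lowercase words of `text`, without stopwords."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def summarize(text, num_sentences=1):
    """Return the `num_sentences` highest-scoring sentences in original order."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    freq = Counter(content_words(text))

    def score(sentence):
        words = content_words(sentence)
        # Average word frequency, so long sentences are not favored automatically.
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:num_sentences])  # restore the original sentence order
    return " ".join(sentences[i] for i in keep)


article = ("NLP helps computers read text. NLP systems translate text and "
           "summarize text. The weather was sunny.")
print(summarize(article))  # → NLP systems translate text and summarize text.
```

The off-topic sentence about the weather scores lowest because its words appear only once, which is the intuition behind frequency-based extraction.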

Phases of NLP

The phases or steps of NLP are given below.

- Lexical and morphological analysis: The lexicon is the collection of words and phrases in a language. Lexical analysis breaks whole chunks of text into paragraphs, sentences, and words. Morphological analysis identifies and analyzes the structure of the words themselves.

For example, a word like ‘dishonest’ can be broken into ‘dis’ and ‘honest.’

- Syntactic analysis: Syntactic analysis (parsing) checks the grammar of a sentence and arranges its words in a structure that represents the relationships among them.

For example: Car you a have

The above sentence has no meaning and is not grammatically correct, so syntactic analysis rejects it.

- Semantic analysis: Semantic analysis checks whether the text is meaningful. It extracts the exact meaning from the text.

Examples: phrases such as “cold coffee” and “iced tea” combine words in a meaningful way.

- Discourse integration: The meaning of a sentence can depend on the sentences that come before it and can affect the meaning of the sentences that follow. Discourse integration interprets each sentence in the context of its neighbors.

- Pragmatic analysis: In this phase, pragmatic analysis deals with the overall communicative and social context, and it reinterprets what was said to derive its actual meaning.

For example: ‘Let me water’ is interpreted as a request instead of an order.
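The first two phases can be sketched in a few lines. Below, `tokenize` performs lexical analysis by splitting text into sentences and words, `split_prefix` performs a naive morphological split using hypothetical lists of prefixes and known stems, and `is_grammatical` performs syntactic analysis with the CYK algorithm over a tiny, hypothetical grammar in Chomsky normal form. The grammar only covers sentences shaped like “you have a car”, which is enough to show “Car you a have” being rejected.

```python
import re

# Hypothetical prefixes and stems for a toy morphological analyzer.
PREFIXES = ("dis", "un", "re", "mis")
STEMS = {"honest", "happy", "read", "understand"}

# Hypothetical toy grammar in Chomsky normal form:
#   S -> NP VP,  VP -> V NP,  NP -> Det N,  plus the word-level rules below.
LEXICAL_RULES = {"you": {"NP"}, "i": {"NP"}, "have": {"V"}, "a": {"Det"}, "car": {"N"}}
BINARY_RULES = {("NP", "VP"): {"S"}, ("V", "NP"): {"VP"}, ("Det", "N"): {"NP"}}


def tokenize(text):
    """Lexical analysis: split text into sentences, then into lowercase words."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [re.findall(r"[a-z]+", s.lower()) for s in sentences if s]


def split_prefix(word):
    """Morphological analysis: split off a prefix only if the rest is a known stem."""
    for prefix in PREFIXES:
        if word.startswith(prefix) and word[len(prefix):] in STEMS:
            return prefix, word[len(prefix):]
    return "", word


def is_grammatical(words):
    """Syntactic analysis: CYK recognizer, True if `words` can be parsed as S."""
    n = len(words)
    if n == 0:
        return False
    # table[i][j] holds every category that can span words[i:j].
    table = [[set() for _ in range(n + 1)] for _ in range(n + 1)]
    for i, word in enumerate(words):
        table[i][i + 1] = set(LEXICAL_RULES.get(word, ()))
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            j = i + length
            for k in range(i + 1, j):  # try every split point
                for left in table[i][k]:
                    for right in table[k][j]:
                        table[i][j] |= BINARY_RULES.get((left, right), set())
    return "S" in table[0][n]


for sentence in tokenize("You have a car. Car you a have."):
    print(sentence, is_grammatical(sentence))
print(split_prefix("dishonest"))  # → ('dis', 'honest')
```

Checking the remainder against known stems keeps the analyzer from wrongly splitting words such as “reading” into “re” and “ading”.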

Future of NLP

- Future in Business Intelligence: As natural language processing continues to make data more user-friendly, more users are likely to choose NLP-driven platforms. NLP may therefore remove many of the current barriers to entry for Big Data Business Intelligence (BI). One day, business users may take part in BI tasks by conversing with smart chatbots and assistants.

- Use of NLP in business sectors: As speech recognition technology continues to improve, audio and video sources will provide large amounts of data for analysis. Hence, the scope of BI will grow in every aspect of business.

- By combining NLP with natural language generation, computers will become more capable of receiving and providing the necessary information or data.

- With the help of NLP, computers may in the future be able to learn from online information and apply it in the real world, although a lot of work remains to be done.

- NLP is widely used in customer service, especially in sectors like banking, retail, and hospitality, where it helps customers get their queries resolved instantly, answering their questions and providing relevant information, resources, and products at any time of day. This kind of NLP-enhanced functionality will also benefit other kinds of services, making them more effective over time.

NLP with Python

Python is a high-level, interpreted, object-oriented programming language. It is simple and easy to learn. It supports modules and packages, which encourage program modularity and code reuse. Python is also an interactive language, which means we can interact directly with the interpreter while writing a program.

Why use Python for NLP:

Python has several features that make it a great choice for NLP, such as:

- Easy: Compared to languages like C++ and Java, Python is easy to write and easy to learn, even for beginners.

- Interpreted: As it is an interpreted language, we do not need a separate compilation step before executing a program.

- Portable: Python is not system-dependent: the same code runs on Windows, macOS, or Linux, so you do not need to write different code for each machine.

- Object-Oriented: The nature of Python is object-oriented, which makes it easier to write programs and to encapsulate code within objects.

NLP libraries

There are several tools and libraries available to solve NLP problems with Python.

- Natural Language Toolkit (NLTK): NLTK is a powerful library that supports tasks such as classification, parsing, tagging, semantic reasoning, and tokenization. It is a very important tool for natural language processing and machine learning.

NLTK was developed by Steven Bird and Edward Loper at the University of Pennsylvania.

- TextBlob: TextBlob provides an easy interface that helps beginners learn basic NLP tasks such as sentiment analysis, noun phrase extraction, and part-of-speech (POS) tagging. It is beneficial for programmers who are starting an NLP project with Python. It is also useful for designing prototypes.

- CoreNLP: This library is written in Java, but wrappers are available for several languages, including Python. It was developed at Stanford University. It is fast and works well in production environments. Furthermore, some components of CoreNLP can be integrated with NLTK, which helps increase efficiency.

- spaCy: spaCy was designed for production use. It provides one of the fastest syntactic parsers available. It is written in Python and Cython, which is why it is fast and efficient. Its support for additional languages continues to grow.

- Gensim: It is a Python library used especially for identifying the similarity between documents through topic modeling and vector space modeling. Thanks to efficient data streaming and incremental algorithms, it can handle large amounts of data.

- polyglot: This library is slightly less well known than the others, but it offers a broad range of analyses and impressive language coverage. It is efficient and straightforward, which makes it a good choice for NLP projects, especially those involving languages that spaCy does not support.

- scikit-learn: This library provides a broad range of algorithms for developing machine learning models. It comes with many functions for creating features to tackle text classification problems. Its intuitive class methods are one of its strengths. Moreover, it has excellent documentation that helps developers understand its features.

- Pattern: It is a web mining module that supports sentiment analysis, part-of-speech tagging, vector space modeling, clustering, support vector machines, and WordNet. Pattern also has tools for data mining, such as interfaces to Google, Twitter, and the Wikipedia API, along with an HTML parser, a web crawler, and a DOM parser.

- Vocabulary: It is a Python library for natural language processing that works as a dictionary in the form of a Python module. For a given word, it offers meanings, synonyms, antonyms, part of speech, translations, etc.

NLP APIs

Natural language processing APIs help developers extract and analyze natural language within paragraphs and words. They also allow developers to integrate human-to-machine communication into their applications and to perform tasks such as determining sentiment, entities, and intent, and recognizing speech.

- IBM Watson API: The IBM Watson API enables you to interpret natural language using custom text classifiers. It helps developers to classify text into a variety of custom categories by combining several machine learning techniques. It also allows you to maintain high accuracy with small data, even without having prior knowledge of machine learning. Moreover, it supports various languages, such as English, Arabic, French, Spanish, etc.

Additionally, this API provides simple documentation and useful resources for implementing natural language processing and machine learning techniques in your application. IBM Cloud lets you try the IBM Watson API free for 30 days with an IBM Cloud account. After that, you can switch to its paid plans.

- Speech to text API: This API uses machine learning techniques to convert speech into written text. Furthermore, it automatically recognizes various accents, such as UK and US English, so conversions are more accurate.

Although you can use this API for free, you can use it for only 60 minutes per month. For more usage, you can choose its paid tiers, which range from $500 to $1500 per month.

- Chatbot API: The Chatbot API lets you use the latest developments in natural language processing technology and gives you access to the power of neural networks to create a flexible chatbot. It offers complex text classification algorithms with 94.2% accuracy and supports multiple languages and Unicode characters. Additionally, you can use it to create a chatbot for your web application.

This API is available for free, but it is restricted to 150 requests per month. To use more features of the Chatbot API, you can purchase its paid plans, which range from $100 to $5000 per month.

- Google Cloud Natural Language API: This API lets you leverage Google’s machine learning technologies. It performs tasks such as sentiment analysis, entity recognition, syntax analysis, and content classification into 700+ predefined categories. It also supports text analysis in multiple languages, such as French, English, Portuguese, German, and Chinese.

Furthermore, the Google Cloud Natural Language API can extract important information from text documents such as blog articles or other types of data. You can use it to understand customers’ opinions of your products on web platforms, to analyze text uploaded to your web portal, and to retrieve information from transcribed audio conversations.

Its price depends on the number of units you analyze and the features of the API you use. You can process fewer than 5000 units per month for free. Beyond that, you pay based on the features you use. For example, analyzing 5000 to 1000000 units in a month costs $1.00 per 1000 units.

- Sentiment Analysis API: The Sentiment Analysis API is provided by Twinword. It uses natural language processing technology to help you determine whether a sentence is positive or negative. It also returns score and ratio properties that tell you the tone of the analyzed text. You can interpret the score and ratio values according to your requirements.

You can integrate the API into your application to identify the tone of a user comment or post. For example, you can use it to find negative customer feedback and make suitable improvements. It is free for fewer than 500 requests per month. After that, you are charged according to the features you use, with plans ranging from $19 to $99 per month.

- NLP API by Turbo: This API, provided by Turbo, lets you complete a wide range of natural language processing tasks, such as text summarization, tokenization, named entity extraction, sentiment analysis, and several others.

You can use this API for free with a limit of 1000 requests per month. To access more features, you can choose its paid plans, which range from $10 to $100 per month.

- Translation API by SYSTRAN: The SYSTRAN API gives you access to advanced language technologies for translating text from a source language into a target language, and it supports more than 130 languages. SYSTRAN also offers other NLP APIs, which can be used for language detection, tokenization, text segmentation, named entity recognition, and more.

Furthermore, you can integrate this API into your website or mobile application and add other NLP capabilities to your application. It is available for free, but for extensive commercial use, you have to contact SYSTRAN for custom pricing.

- Text Analysis API by AYLIEN: It is a collection of APIs that apply various NLP and machine learning technologies. The API enables you to extract meaning from textual content. It is also used to extract the main text from a document, classify text into more than 500 categories, summarize an article, extract named entities, detect language, and perform sentiment analysis.

It also suggests hashtags that best represent a document. You can use it for free, but only for 1000 hits per day. For more usage, you have to choose its paid tiers, which range from $199 to $1399 per month.

- NLP by Linguakit: This API from Linguakit lets you analyze and extract useful insights from texts. It is used for language detection, keyword extraction, multiword extraction, syntactic analysis, and verb conjugation.

The first 1000 requests each month are free. After that, the cost ranges from $19 to $119 per month.
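For the Google Cloud Natural Language API described above, a request is an HTTPS POST carrying a JSON document. The sketch below only builds such a request with Python’s standard library and does not send it. It assumes the v1 REST method `documents:analyzeSentiment` and an API key passed as the `key` query parameter; `YOUR_API_KEY` is a placeholder, and you should confirm the current endpoint version and authentication options in Google’s documentation before relying on it.

```python
import json
import urllib.parse
import urllib.request

# Assumed endpoint: the v1 REST method for sentiment analysis.
ENDPOINT = "https://language.googleapis.com/v1/documents:analyzeSentiment"


def build_sentiment_request(text, api_key):
    """Build (but do not send) a POST request asking for the sentiment of `text`."""
    body = {
        "document": {"type": "PLAIN_TEXT", "content": text},
        "encodingType": "UTF8",
    }
    return urllib.request.Request(
        f"{ENDPOINT}?{urllib.parse.urlencode({'key': api_key})}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_sentiment_request("The support team was very helpful.", "YOUR_API_KEY")
print(request.get_method(), request.full_url)

# Sending it requires a real key and network access, for example:
# with urllib.request.urlopen(request) as response:
#     result = json.load(response)
#     print(result["documentSentiment"]["score"])  # from -1.0 (negative) to 1.0 (positive)
```

Keeping request construction separate from sending makes it easy to inspect or log the payload, and each analyzed document counts toward the unit-based pricing mentioned above.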

NLP vs Machine Learning:

NLP: NLP is an area of computer science that enables computer systems to understand human language as humans speak and write it. Human language is unstructured: it contains misspelled words, abbreviations, and slang. These variations make it difficult for a computer to understand human language. NLP considers several factors to understand natural language:

- Semantics: It is the subfield of linguistics that deals with the meaning of words.

- Syntax: It describes the structure of the text, which is important for working out what the text means.

- Context: It helps determine, for example, whether a sentence is positive or negative.

Machine learning: Machine learning is a part of Artificial Intelligence. It is a combination of statistical techniques used to detect patterns; applied to text, these include sentiment, parts of speech, entities, and other occurrences within a text. ML is a set of algorithms that enables better NLP, better robotics, better vision, etc. It also provides a way to solve real Artificial Intelligence problems. Today it is used in self-driving cars, price prediction, fraud detection, and NLP. There are two main types of machine learning procedures:

- Supervised machine learning: In this procedure, the machine is trained on well-categorized (labeled) data, which helps it produce the correct output. It learns from labeled data and then predicts outcomes for unseen data.

- Unsupervised machine learning: In this procedure, the machine is trained on data that is neither classified nor labeled. The algorithm acts on that data without any guidance. The machine’s task is to group unsorted information according to patterns, similarities, and differences without any prior training on labeled data.
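Supervised learning on text can be illustrated with a multinomial Naive Bayes classifier, a common baseline for tasks such as the spam filtering mentioned earlier. The sketch below learns word counts from a handful of hypothetical labeled messages and uses add-one (Laplace) smoothing. It uses only the standard library; in practice you would more likely use a library such as scikit-learn and far more training data.

```python
import math
import re
from collections import Counter


def tokens(text):
    """Lowercase word tokens of `text`."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayes:
    """Multinomial Naive Bayes text classifier with add-one (Laplace) smoothing."""

    def fit(self, texts, labels):
        self.labels = sorted(set(labels))
        # Log prior: the fraction of training examples carrying each label.
        self.log_priors = {c: math.log(labels.count(c) / len(labels)) for c in self.labels}
        self.word_counts = {c: Counter() for c in self.labels}
        for text, label in zip(texts, labels):
            self.word_counts[label].update(tokens(text))
        self.vocab = set().union(*self.word_counts.values())
        self.totals = {c: sum(self.word_counts[c].values()) for c in self.labels}
        return self

    def predict(self, text):
        """Return the label with the highest (unnormalized) log posterior."""
        def log_posterior(c):
            denom = self.totals[c] + len(self.vocab)
            return self.log_priors[c] + sum(
                math.log((self.word_counts[c][w] + 1) / denom)
                for w in tokens(text) if w in self.vocab)  # unseen words are skipped
        return max(self.labels, key=log_posterior)


# Hypothetical labeled training data: the labels are what make this "supervised".
texts = ["win a free prize now", "claim your free money",
         "meeting at noon tomorrow", "please review the project report"]
labels = ["spam", "spam", "ham", "ham"]

model = NaiveBayes().fit(texts, labels)
print(model.predict("free prize money"))            # → spam
print(model.predict("review the report tomorrow"))  # → ham
```

An unsupervised method would receive the same messages without the `labels` list and would have to group them by similarity on its own, for example with a clustering algorithm.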

Audience

Our NLP tutorial is helpful for beginners who are starting projects on NLP.

Prerequisite

Before learning NLP, you should have basic knowledge of Artificial Intelligence, and of Python if you are starting an NLP project with Python.
