TensorFlow Tutorial for Beginners

Introduction to TensorFlow

TensorFlow is an open-source machine learning library built around dataflow programming. It was developed by researchers and engineers on the Google Brain team. Google has access to very large datasets, which it uses to improve its services for users. Google applies machine learning in various ways, for example in Google Translate and Google Photos.

Machine learning is used in almost every Google product. TensorFlow is designed for large-scale distributed training and inference. It is very flexible: it allows you to experiment with machine learning models and with system-level optimizations.

When Session.run() is called, TensorFlow prunes the graph down to the subgraph needed to produce the requested outputs. That subgraph is partitioned into pieces that run in different processes and on different devices, and the pieces are distributed to worker services.

The worker services then execute their graph pieces. TensorFlow is also used for deep neural network research; it is a symbolic math library that is used for machine learning applications such as neural networks.
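The pruning step described above can be illustrated with a toy graph in plain Python. This is only a sketch of the idea, not TensorFlow's implementation: the `GRAPH` dictionary, the node names, and the `prune` and `run` helpers are all hypothetical, and partitioning across devices is not shown.

```python
# Toy dataflow graph: node name -> (operation, constant value, input node names).
# Hypothetical structure for illustration only; not TensorFlow's internal format.
GRAPH = {
    "a": ("const", 6.0, []),
    "b": ("const", 3.0, []),
    "c": ("const", 10.0, []),
    "product": ("mul", None, ["a", "b"]),
    "quotient": ("div", None, ["a", "b"]),
    "unused": ("mul", None, ["c", "c"]),
}

OPS = {"mul": lambda x, y: x * y, "div": lambda x, y: x / y}


def prune(graph, fetches):
    """Return the names of all nodes needed to compute the fetched nodes."""
    needed, stack = set(), list(fetches)
    while stack:
        name = stack.pop()
        if name not in needed:
            needed.add(name)
            stack.extend(graph[name][2])
    return needed


def run(graph, fetches):
    """Evaluate only the nodes the fetches depend on; report what was executed."""
    values, executed = {}, []

    def evaluate(name):
        if name not in values:
            kind, const, inputs = graph[name]
            args = [evaluate(i) for i in inputs]
            values[name] = const if kind == "const" else OPS[kind](*args)
            executed.append(name)
        return values[name]

    return [evaluate(f) for f in fetches], executed


results, executed = run(GRAPH, ["product"])
print(results)           # [18.0]
print(sorted(executed))  # ['a', 'b', 'product']
```

Fetching only `product` never touches `c`, `quotient`, or `unused`, which is the effect pruning is meant to achieve.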

To use TensorFlow, we need to know Python programming. Common uses of TensorFlow include voice/sound recognition, text-based applications, image recognition, time series, and video detection.

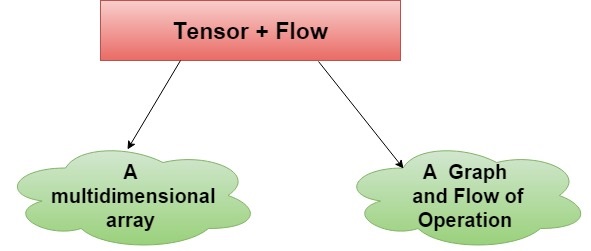

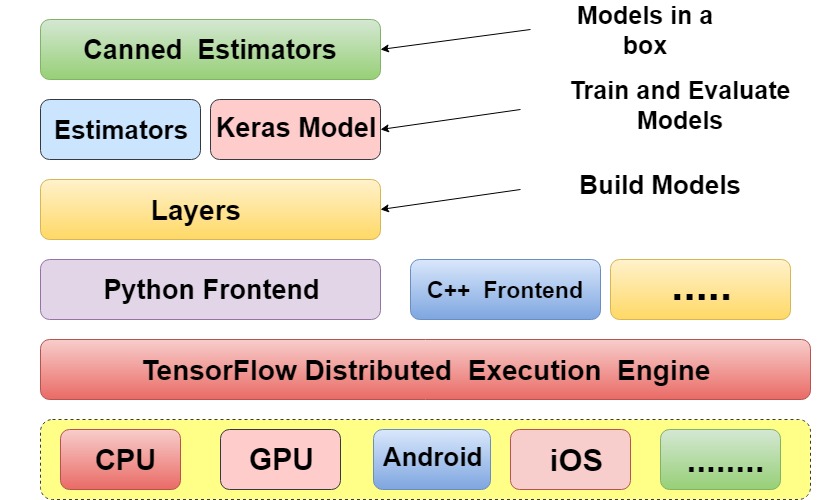

TensorFlow lets computers identify, represent, and learn patterns in data. The accompanying figure illustrates the purpose of TensorFlow.

TensorFlow uses data flow graphs to build models. Overall, we use TensorFlow for classification, perception, understanding, discovery, prediction, and creation. Sound-based applications need a proper data feed and neural networks that are capable of understanding audio signals.

With TensorFlow we can implement voice recognition, voice search, sentiment analysis, and flaw detection (for example, from engine noise). These are the main terms to know before working on voice- and sound-based recognition applications in TensorFlow.

Text-based applications are another use of TensorFlow. Examples include sentiment analysis (CRM, social media), threat detection (social media, government), and fraud detection (insurance, finance). Language detection is one of the most widespread text-based applications, and Google Translate, which translates between 100 languages, is also a text-based application.

TensorFlow is also used in image recognition, which covers face recognition, image search, motion detection, machine vision, and photo clustering. TensorFlow object recognition algorithms classify and identify arbitrary objects within larger pictures.

Most often, it is used in engineering applications to classify shapes for modeling purposes (constructing 3D space from 2D photos) and by social networks for image tagging (Facebook's DeepFace). Time series algorithms are another reason to use TensorFlow; they allow forecasting over non-specific time periods.

Recommendation is the most common use of time series in TensorFlow. We have heard about this kind of application at Amazon, Google, Facebook, and Netflix, which analyze customer activity and compare it with that of millions of other users to work out what a customer might like to purchase or watch.
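The idea of comparing one customer's activity with that of other users can be sketched in a few lines of plain Python. This is a deliberately simple set-overlap stand-in, not a TensorFlow model and not the method any of the companies named above use. The user names, item names, and the `jaccard` and `recommend` helpers are hypothetical.

```python
def jaccard(a, b):
    """Similarity of two item sets: size of the overlap divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def recommend(target, history):
    """Suggest items that the most similar other user has and the target lacks."""
    others = {user: items for user, items in history.items() if user != target}
    nearest = max(others, key=lambda user: jaccard(history[target], others[user]))
    return sorted(others[nearest] - history[target])


# Hypothetical viewing histories.
HISTORY = {
    "ana": {"drama_a", "thriller_b", "comedy_c"},
    "ben": {"drama_a", "thriller_b", "documentary_d"},
    "cho": {"cartoon_e", "comedy_c"},
}

print(recommend("ana", HISTORY))  # ['documentary_d']
```

Here `ben` shares two of four distinct items with `ana`, while `cho` shares one of four, so the suggestion comes from `ben`'s history.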

Another use of TensorFlow is video detection with neural networks. These applications are mainly used for motion detection and real-time threat detection in gaming, security, airports, and UI/UX fields. NASA is developing a system with TensorFlow for orbit classification and object clustering of asteroids, so that it can classify and predict NEOs (near-Earth objects).

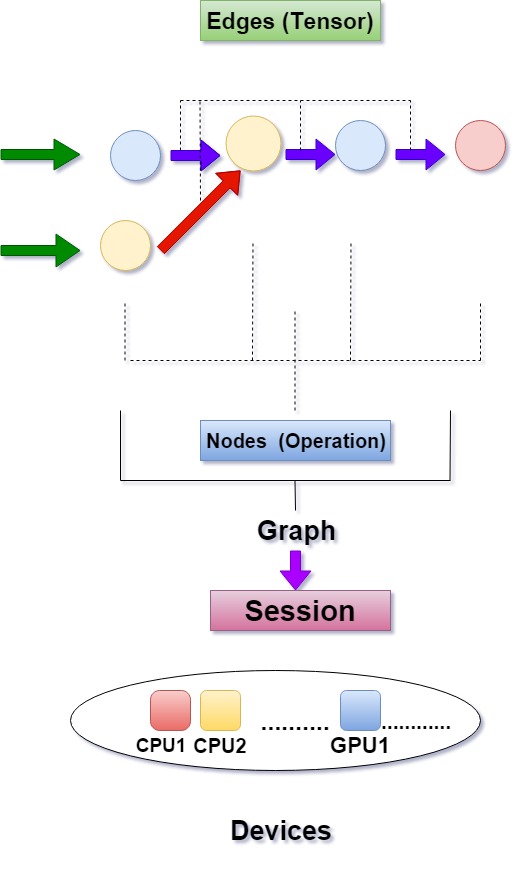

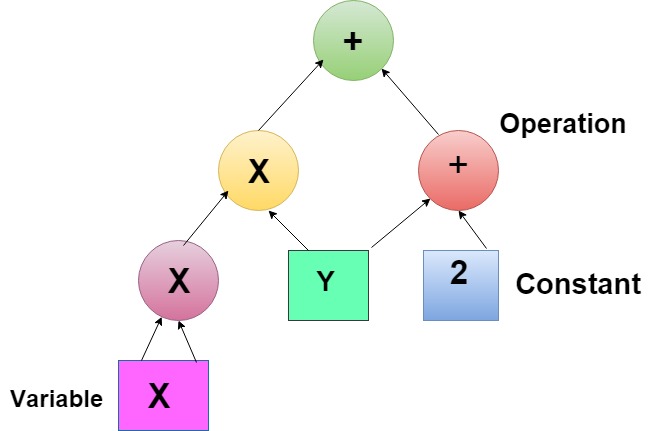

Tensors and computational graphs are the core components of TensorFlow. A tensor represents an N-dimensional dataset. A computational graph describes how data flows: tensors travel along the edges between nodes, and every node represents an operation such as multiply or divide. The graph described here is never cyclic.

The result of every operation is a new tensor. A computational graph can contain subgraphs, which are parts of the main graph and behave like computational graphs themselves.
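To make the terms concrete, the sketch below models tensors as nested Python lists and shows that an operation returns a new tensor rather than changing its inputs. It is an illustration in plain Python under that assumption, not the TensorFlow API; the `shape` and `elementwise` helpers are hypothetical.

```python
def shape(tensor):
    """Return the size of each dimension of a nested-list tensor."""
    dims = []
    while isinstance(tensor, list):
        dims.append(len(tensor))
        tensor = tensor[0] if tensor else None
    return tuple(dims)


def elementwise(op, left, right):
    """Apply a binary operation element by element and return a new tensor."""
    if isinstance(left, list):
        return [elementwise(op, l, r) for l, r in zip(left, right)]
    return op(left, right)


scalar = 7.0                        # 0 dimensions
vector = [1.0, 2.0, 3.0]            # 1 dimension
matrix = [[1.0, 2.0], [3.0, 4.0]]   # 2 dimensions
twos = [[2.0, 2.0], [2.0, 2.0]]

# Each operation (a node in the graph) produces a new tensor.
doubled = elementwise(lambda x, y: x * y, matrix, twos)
restored = elementwise(lambda x, y: x / y, doubled, twos)

print(shape(scalar), shape(vector), shape(matrix))  # () (3,) (2, 2)
print(doubled)                                      # [[2.0, 4.0], [6.0, 8.0]]
print(restored == matrix)                           # True
```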

The data flow graph below shows how data flows through TensorFlow:

Figure: Data Flow Graph

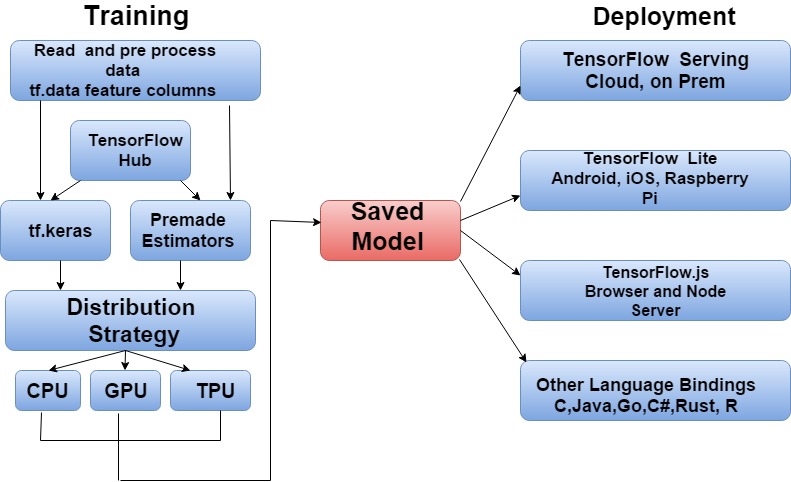

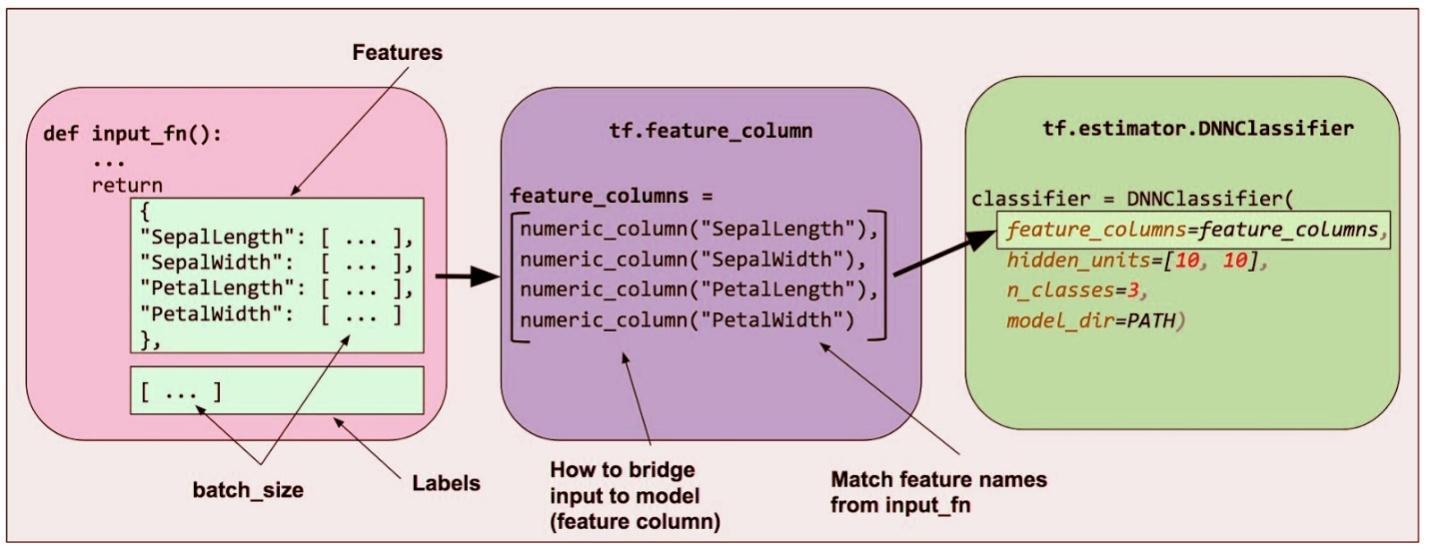

TensorFlow is used in various environments, such as JavaScript, IoT (Internet of Things), Android, and production systems. With TensorFlow Extended, we can deploy a production-ready machine learning pipeline for training and inference. TensorFlow.js is a library for developing and training models in JavaScript and then deploying them in the browser or on Node.js. TensorFlow Lite runs inference on mobile and embedded devices such as Android, iOS, Edge TPU, and Raspberry Pi. With the help of Estimators, we can train large models on multiple machines in a production environment. TensorFlow provides a collection of pre-made Estimators that implement standard machine learning algorithms.

History of TensorFlow

Tensor means a multidimensional array, and flow refers to the flow of data through operations.

Starting in 2011, Google Brain built DistBelief, a closed-source machine learning system based on deep learning neural networks. In March 2018, Google announced TensorFlow.js for machine learning in JavaScript; version 1.0 followed in March 2019, and TensorFlow Graphics, for deep learning in computer graphics, was released later that year. TensorFlow can run on multiple CPUs and GPUs, and it supports 64-bit Linux, macOS, Windows, and mobile computing platforms such as Android and iOS.

Google announced the Tensor Processing Unit (TPU) in May 2016. It is a programmable AI accelerator designed to provide high throughput of low-precision arithmetic. In May 2017, Google announced the second-generation TPU and its availability in Google Compute Engine. Each second-generation TPU delivers up to 180 teraflops; organized into clusters of 64 TPUs, they deliver up to 11.5 petaflops (64 × 180 teraflops ≈ 11.5 petaflops). In May 2018, Google announced the third-generation TPU, which delivers up to 420 teraflops and has 128 GB of HBM. In July 2018, Google announced the Edge TPU, which is designed to run TensorFlow Lite machine learning models on small client computing devices such as smartphones. TensorFlow Lite itself, a software stack aimed at mobile development, was announced in May 2017.

Why TensorFlow?

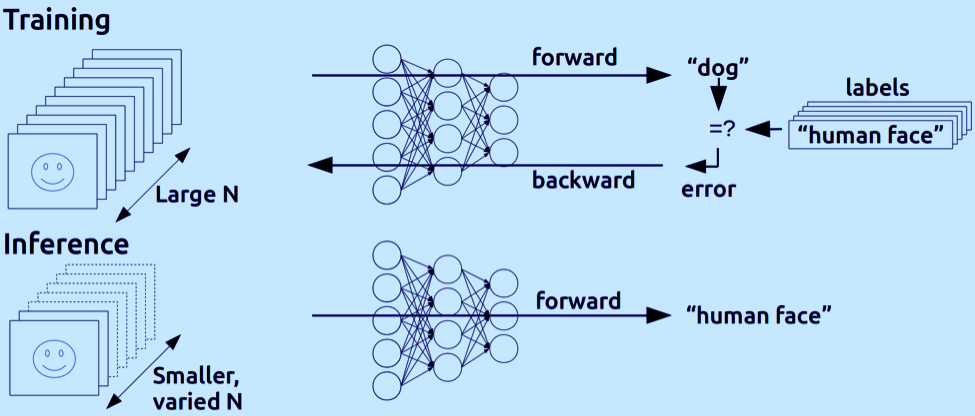

Deep learning uses a family of algorithms called neural networks. They are inspired by biological nervous systems, such as the brain, and the way those systems process information. TensorFlow is an end-to-end platform and a widely used deep learning tool that builds models from data flow graphs. With TensorFlow, developers can create large neural networks with many layers.

Deep learning is a subset of machine learning that relies primarily on neural networks. It is the technology behind much of today's artificial intelligence, where models are taught to recognize images, voices, and so on. It is a learning mechanism like traditional machine learning, but the data is more complicated and less structured: it can take the form of images, audio, video, text files, and so on.

The neural network is the core component of deep learning. It has an input layer and an output layer, and between them a number of hidden layers. Typically there is at least one hidden layer, and a network with more than one hidden layer is known as a deep neural network. In other words, any neural network with more than three layers in total is a deep neural network.

The layers have different functions. The input, for example the pixel values of an image, is passed to the hidden layers, which in turn perform the computations. The hidden layers hold weights and biases that keep being updated until the training process is complete. Each neuron has multiple weights and one bias, and these appear as variables in TensorFlow code. So a hidden layer performs a series of computations and passes its values on to the output layer, which then gives the output. The output could be in the form of a class.
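The layer structure described above can be sketched as a forward pass in plain Python. This is a minimal illustration, not TensorFlow code: the weights and biases are hand-picked hypothetical values rather than trained ones, the ReLU and softmax functions are common choices assumed here and not taken from the text, and the two class labels are placeholders.

```python
import math


def dense(inputs, weights, biases):
    """One layer: each neuron computes a weighted sum of its inputs plus one bias."""
    return [sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
            for neuron_weights, bias in zip(weights, biases)]


def relu(values):
    """Assumed hidden-layer activation: negative values become zero."""
    return [max(0.0, v) for v in values]


def softmax(values):
    """Assumed output activation: turn scores into probabilities that sum to 1."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical hand-picked parameters; training would normally update these.
HIDDEN_W = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]  # 2 hidden neurons x 3 inputs
HIDDEN_B = [0.0, 0.1]                             # one bias per hidden neuron
OUTPUT_W = [[1.0, -1.0], [-1.0, 1.0]]             # 2 output neurons x 2 hidden values
OUTPUT_B = [0.0, 0.0]
LABELS = ["dog", "cat"]                           # placeholder class names


def predict(pixels):
    """Input layer -> hidden layer -> output layer -> most likely class."""
    hidden = relu(dense(pixels, HIDDEN_W, HIDDEN_B))
    scores = softmax(dense(hidden, OUTPUT_W, OUTPUT_B))
    return LABELS[scores.index(max(scores))], scores


label, scores = predict([0.9, 0.1, 0.2])
print(label)  # dog
```

With these particular numbers, the first output neuron has the larger score, so the first label is chosen; a different input such as `[0.1, 0.9, 0.5]` selects the second label.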
For example, in classification the output tells us which category an image belongs to. Suppose an image classification application receives images of cats and dogs. An output of (0, 1) means the image is a cat, and an output of (1, 0) means it is a dog. This is binary classification, and it can be extended to many more classes by adding neurons to the output layer. Neural networks can also be used for regression, not only for classification.

Terms come in the TensorFlow

These are the terms that occur in TensorFlow. There are two main terms:

- Training

- Deployment

Training is divided into the following parts:

- Read and preprocess data (tf.data, feature columns)

- TensorFlow

- Distribution strategy

Deployment is divided into four parts, which are given below:

- TensorFlow Serving (cloud, on-prem)

- TensorFlow Lite (Android, iOS, Raspberry Pi)

- TensorFlow.js (browser and Node server)

- Other languages (C, Java, Go, C#, Rust, R)

What are Tensors?

TensorFlow is a symbolic math library that is used for machine learning applications such as neural networks. It can solve real-world problems and is accessible to most programmers thanks to features such as the computational graph concept, automatic differentiation, and the adaptability of the TensorFlow Python API. TensorFlow represents tensors as n-dimensional arrays of base data types. A tensor is a generalization of vectors and matrices to potentially higher dimensions. All elements of a tensor have the same data type, and that data type is always known. A tensor also has a shape, that is, the number of dimensions and the size of each dimension, which might be only partially known.

Data Flow Graphs and Sessions

A data flow graph represents a computation in terms of the dependencies between individual operations. This leads to a low-level programming model in which we first define the data flow graph and then create a session to run parts of the graph across a set of local and remote devices. Higher-level APIs such as Keras can hide graphs and sessions from end users, but understanding them is useful for seeing how those APIs are implemented.

Figure: Graph and Sessions
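The define-then-run model described in this section can be mimicked with a toy graph and session in plain Python. This is only a sketch of the programming model: the `Node` and `Session` classes and the `placeholder`, `constant`, `add`, and `multiply` helpers are hypothetical stand-ins written for this example, not TensorFlow's classes.

```python
class Node:
    """A graph node: an operation plus the nodes that feed it."""

    def __init__(self, op, inputs=(), name=None, value=None):
        self.op = op
        self.inputs = tuple(inputs)
        self.name = name
        self.value = value


def placeholder(name):
    """A node whose value is supplied only when the graph is run."""
    return Node("placeholder", name=name)


def constant(value):
    return Node("const", value=value)


def add(x, y):
    return Node("add", (x, y))


def multiply(x, y):
    return Node("mul", (x, y))


class Session:
    """Evaluates a requested node; nothing is computed before run() is called."""

    def run(self, fetch, feed=None):
        feed = feed or {}
        if fetch.op == "placeholder":
            return feed[fetch.name]
        if fetch.op == "const":
            return fetch.value
        left, right = (self.run(node, feed) for node in fetch.inputs)
        return left + right if fetch.op == "add" else left * right


# Phase 1: define the graph. No arithmetic happens here.
x = placeholder("x")
y = add(multiply(x, constant(3)), constant(2))

# Phase 2: run the graph in a session, feeding a value for x each time.
session = Session()
print(session.run(y, {"x": 4}))   # 14
print(session.run(y, {"x": 10}))  # 32
```

The same graph is reused for both runs; only the fed value changes, which is the main point of separating definition from execution.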

Features of TensorFlow

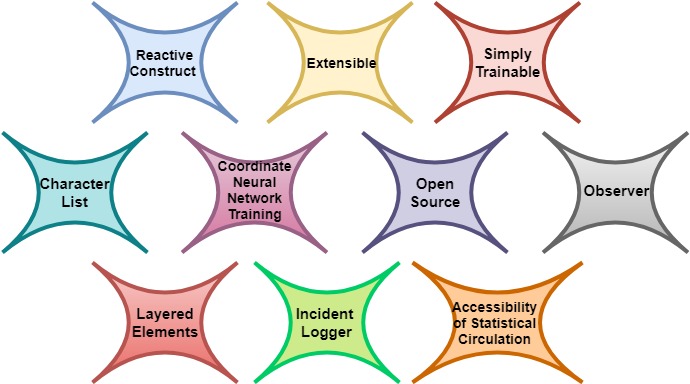

TensorFlow has many features that explain its popularity. It offers an interactive, multiplatform programming interface that is extensible and stable compared with other deep learning libraries. It works out of the box through its high-level APIs, and it offers good computational graph visualization. TensorFlow runs on different hardware backends, such as GPUs and ASICs. It is a robust machine learning framework that can also be used for deep learning. Deep learning frameworks handle higher-level building and deployment tasks, and each framework is developed for a different purpose. TensorFlow also has a highly flexible system architecture.

Some features of TensorFlow are listed below:

- Responsive construct

- Flexible

- Easily trainable

- Feature columns

- Parallel neural network training

- Open source

- Visualizer

- Layered components

- Event logger

- Availability of statistical distributions

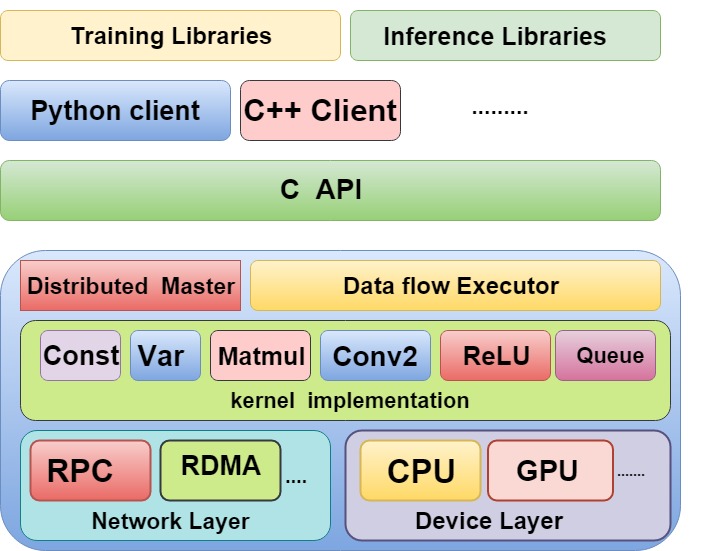

Architecture of TensorFlow

TensorFlow Serving is a flexible, high-performance serving system for machine learning models, designed for production environments. It makes it simple to deploy new algorithms and experiments while keeping the same server architecture and APIs.

Figure: Architecture of TensorFlow.

TensorFlow Installations Guide

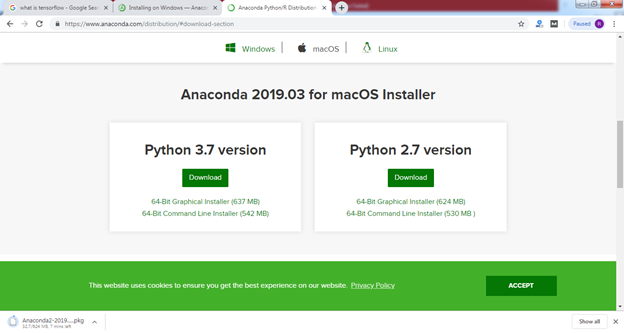

We need Anaconda to install TensorFlow. After installing Anaconda, we set the environment variables, create an environment for TensorFlow, and then install TensorFlow in that environment. TensorFlow is tested and supported on 64-bit systems, including Ubuntu 16.04 or later. The steps to install Anaconda are as follows:

Step 1

Go to the official Anaconda website, www.anaconda.org, and download the Anaconda setup.

Step 2

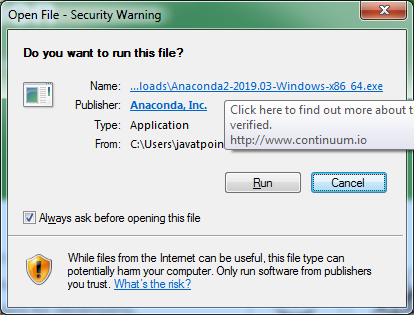

Click on the Run button to install.

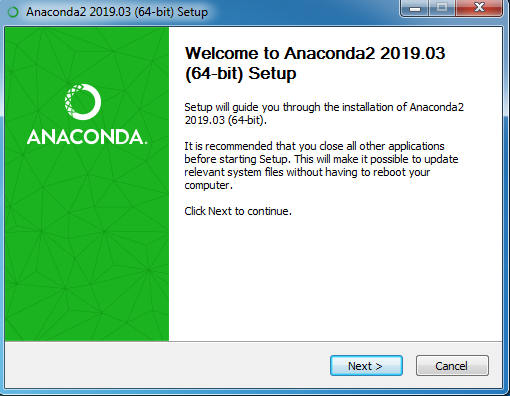

Step 3

Click on the Next button to move forward.

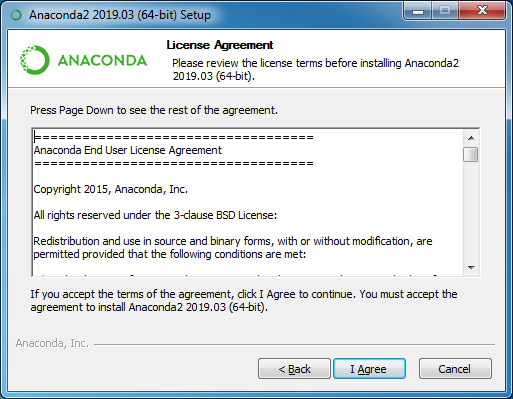

Step 4

Read the terms and conditions and click on the I Agree button.

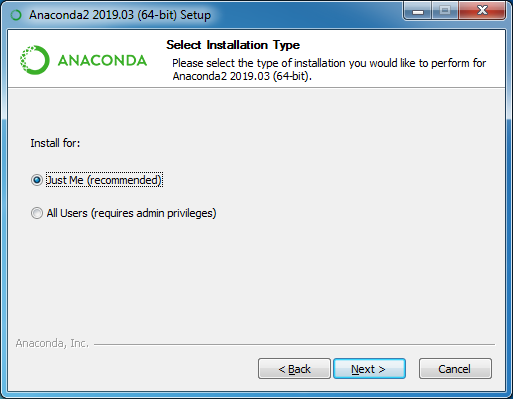

Step 5

Select the type of installation and then click on the Next button.

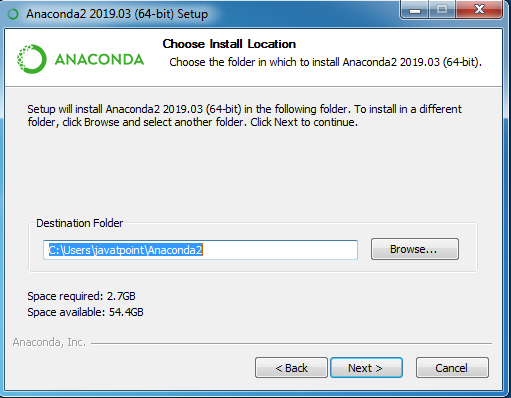

Step 6

Select the Destination folder and click on the Next button.

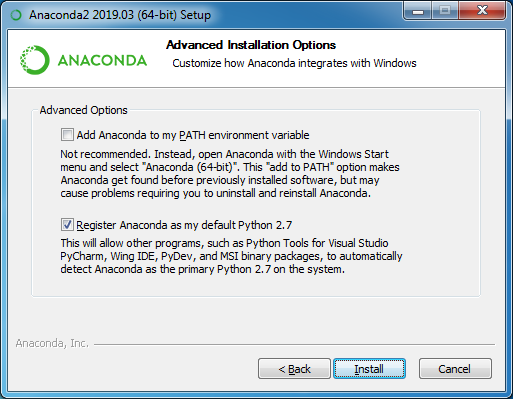

Step 7

Click on the Install button.

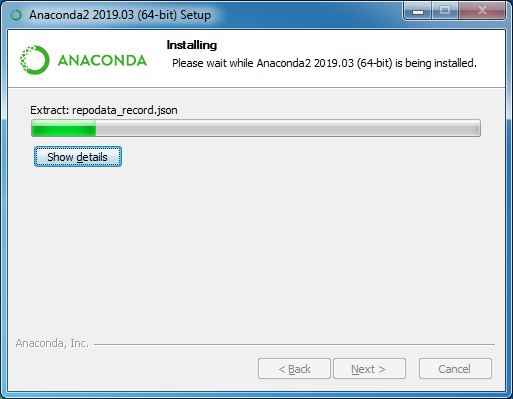

Step 8

The installation starts. Click on the Show Details button.

Step 9

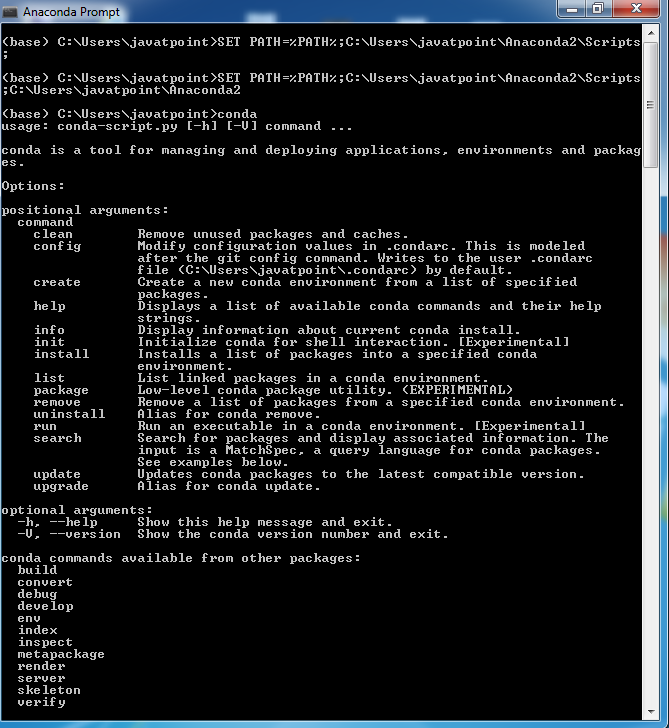

Set the environment variables by adding the Anaconda installation folder to PATH:

`SET PATH=%PATH%;<folder where Anaconda is installed>`
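As a rough way to check or reproduce this step from Python, the sketch below appends a folder to a PATH-style string only if it is missing. The `with_path_entry` helper and the folder `C:\Anaconda3` are hypothetical; substitute the folder chosen during installation. Note that the comparison is case-sensitive, which is a simplification on Windows.

```python
import os


def with_path_entry(current_path, folder, sep=os.pathsep):
    """Return current_path with folder appended, unless it is already listed."""
    entries = [entry for entry in current_path.split(sep) if entry]
    if folder not in entries:
        entries.append(folder)
    return sep.join(entries)


# Hypothetical Windows-style values, using ';' as the separator.
before = "C:\\Windows;C:\\Tools"
after = with_path_entry(before, "C:\\Anaconda3", sep=";")
print(after)  # C:\Windows;C:\Tools;C:\Anaconda3
```

To apply it to the running process only, one could assign `os.environ["PATH"] = with_path_entry(os.environ.get("PATH", ""), folder)`; this does not change the system-wide setting.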

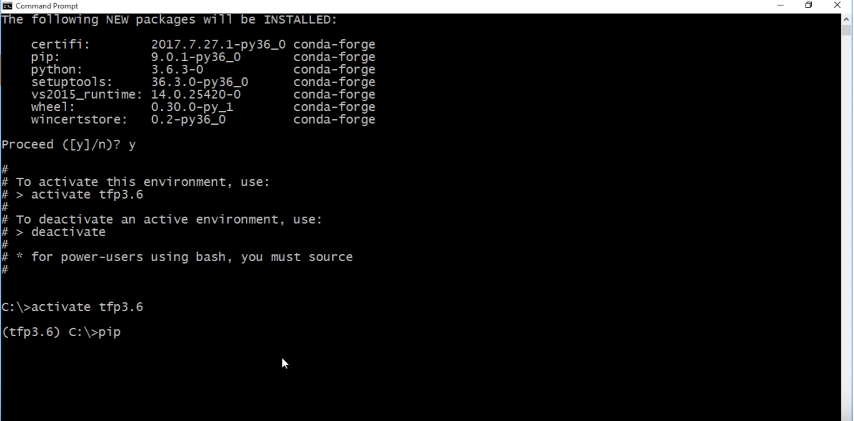

Step 10

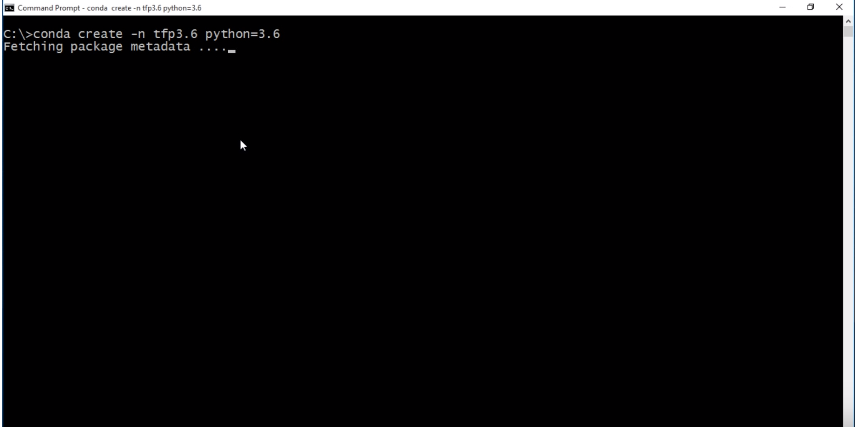

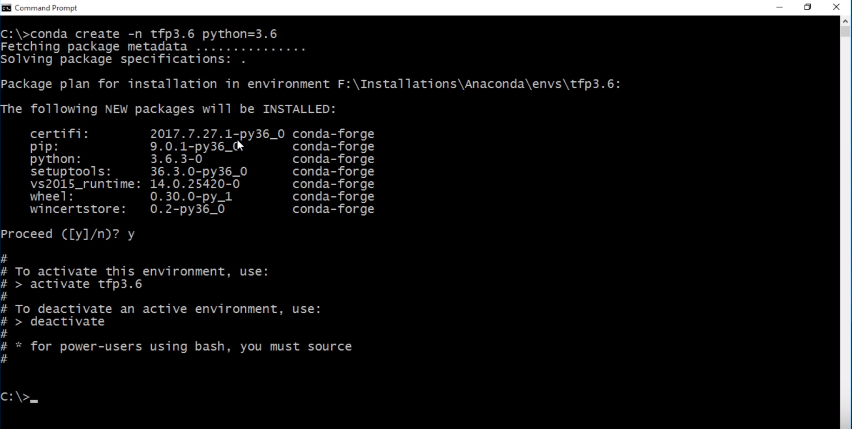

Create an environment for TensorFlow.

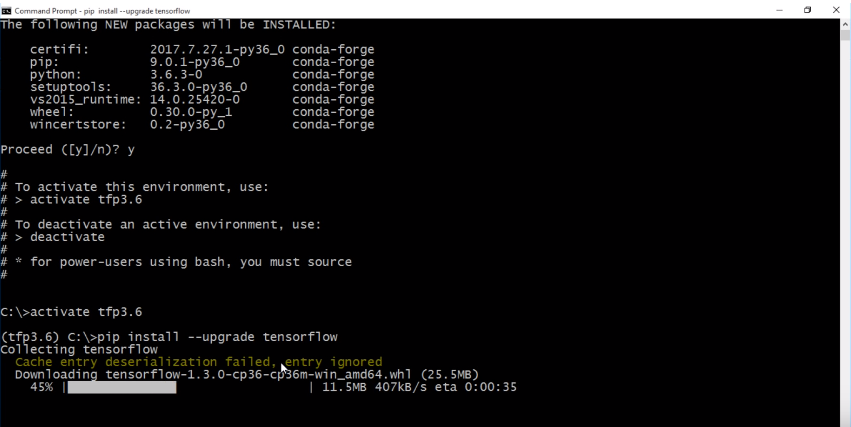

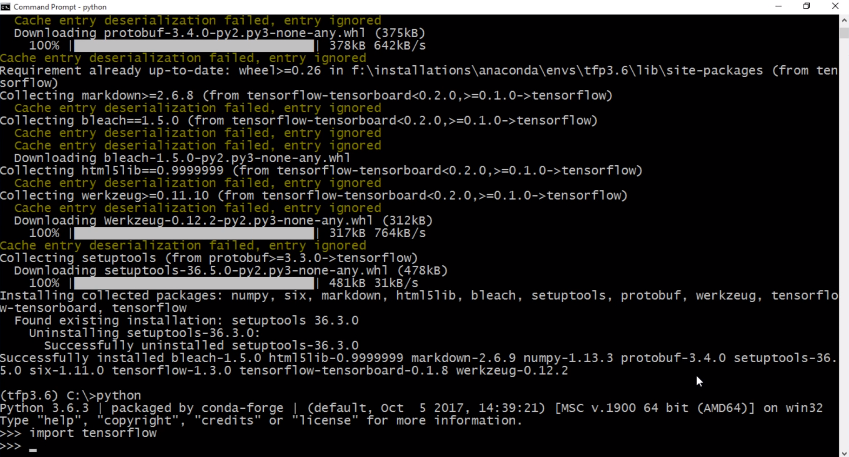

Step 11

Install TensorFlow.
