Forward Chaining in AI : Artificial Intelligence

Forward Chaining is the process which works on the basis of available data to make certain decisions. Forward chaining is the process of chaining data in the forward direction. In forward chaining, we start with the available data and use inference rules to extract data until the goal is not reached. Forward chaining is the concept of data and decision. From the available data, expand until a decision is not made.

In artificial intelligence, we have two different methods to use forward chaining.

- Forward Chaining in Propositional Logic

- Forward Chaining in Predicate Logic/(FOPL)

We will discuss both one by one

Forward Chaining in Propositional Logic

In propositional logic, forward chaining starts its journey from the given knowledge base. If all the premises of the implication are known, then its conclusion will be added to the set of known facts.

We use the below forward chaining algorithm to perform the forward chaining.

function PL-FC-ENTAILS?(KB, q) returns true or false inputs: KB, the knowledge base, a set of propositional definite clauses q, the query, a proposition symbol count ?a table, where count [c] is the number of symbols in c’s premise inferred ?a table,where inferred[s] is initially false for all symbols agenda ?a queue of symbols, initially symbols known to be true in KB while agenda is not empty do p?POP(agenda) if p = q then return true if inferred[p] = false then inferred[p]?true for each clause c in KB where p is in c.PREMISE do decrement count [c] if count [c] = 0 then add c.CONCLUSION to agenda return false

Conclusions drawn from the above algorithm:

- Forward Chaining is sound as every inference is necessarily an application of Modus Ponen.

- Forward Chaining is complete as all atomic sentences will be derived from it.

Let’s see an example:

- If D barks and D eats bone, then D is a dog.

- If V is cold and V is sweet, then V is ice-cream.

- If D is a dog, then D is black.

- If V is ice-cream, then it is Vanilla.

Derive forward chaining using the given known facts to prove Tomy is black.

- Tomy barks.

- Tomy eats bone.

Solution: Given Tomy barks.

From (1), it is clear:

If Tomy barks and Tomy eats bone, then Tomy is a dog.

From (3), it is clear:

If Tomy is a dog, then Tomy is black.

Hence, it is proved that Tomy is black.

Note: There is an advantage of forward chaining that is, we can draw new inference from the given facts.

Forward Chaining in Predicate Logic/ FOPL

Forward Chaining in Predicate Logic is different from forward chaining in Propositional Logic. In FOPL, forward chaining is efficiently implemented on first-order clauses. First-order clauses are the disjunction of literals of which exactly one is positive.

For example, Crow(x) ? Thrust(x)?Water(x).

Crow(x).

Thrust(x).

Therefore, a definite clause is either atomic or an implication whose predecessor is a conjunction of positive literals. As a result, it results in a single positive literal.

The forward chaining algorithm used in FOPL is:

function FOL-FC-ASK(KB,?) returns a substitution or false

inputs: KB, the knowledge base, a set of first-order definite clauses

?, the query, an atomic sentence

local variables: new, the new sentences inferred on each iteration

repeat until new is empty

new ?{}

for each rule in KB do

(p1 ? . . . ? pn ? q)?STANDARDIZE-VARIABLES(rule)

for each ? such that SUBST(?, p1 ? . . . ? pn) = SUBST(?, p_

1 ? . . . ? p_

n)

for some p_

1 , . . . , p_

n in KB

q_?SUBST(?, q)

if q_ does not unify with some sentence already in KB or new then

add q_ to new

??UNIFY(q_,?)

if ? is not fail then return ?

add new to KB

return false

Conclusions drawn:

- Forward Chaining in FOPL is sound as each inference is an application of Generalized Modus Ponen.

- It is complete as it answers each query.

Let’s see an example of Forward Chaining in FOPL

Consider the below axioms:

- Gita loves all types of clothes.

- Suits are clothes.

- Jackets are clothes.

- Anything any wear and isn’t bad is clothes.

- Sita wears skirt and is good.

- Renu wears anything Sita wears.

Apply backward chaining and prove that Gita loves Kurtis.

Solution: Convert the given axioms into FOPL as:

- x: clothes(x)?loves(Gita, x).

- Suits(x)?Clothes(x).

- Jackets(x)?Clothes(x).

- wears(x,y)? ¬bad(y)?Clothes(x)

- wears(Sita,skirt) ? ¬good(Sita)

- wears(Sita,x)?wears(Renu,x)

To prove: Gita loves Kurtis.

FOPL: loves(Gita, Kurtis).

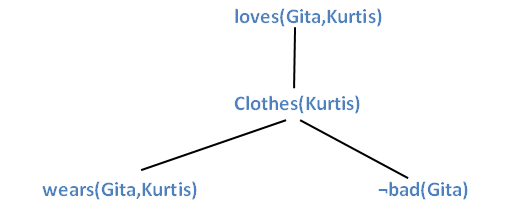

On applying forward chaining in the below graph:

Thus, it is proved that Gita loves Kurtis.

Related Posts:

- Backward Chaining

- Dynamic Routing

- Utility Functions in Artificial Intelligence

- Inference Rules in Proposition Logic

- Knowledge Based Agents in AI

- Knowledge Representation in AI

- Cryptarithmetic Problem in AI

- Constraint Satisfaction Problems in Artificial Intelligence

- Alpha-beta Pruning | Artificial Intelligence

- Adversarial Search in Artificial Intelligence

- Top 10 Artificial Intelligence Technologies in 2020.