OpenCV Tutorial

OpenCV Table of Content

- OpenCV introduction

- History of OpenCV

- Application of OpenCV

- Features of OpenCV

- OpenCV with Python

- Install OpenCV on windows

- Image processing with OpenCV

- OpenCV read image

- OpenCV write image

- OpenCV image information

- Convert RGB image into Gray scale

- Convert RGB image into HSV color space

- OpenCV image translation

- OpenCV image rotation

- How to capture an image by the camera using OpenCV

- Face detecting with OpenCV

- Capturing video using OpenCV

- Use of FFT in OpenCV

- Edge Detection in OpenCV

- Edge detection with live camera

- Line and circle detection

- Images searching and retrieving using descriptors

- Definition of feature in the image

- OpenCV cropped image

- OpenCV image sharpening

- OpenCV image Pyramid

- OpenCV blur image

- OpenCV Image transpose

- OpenCV convert RGB image into Binary image

- OpenCV Create Color Trackbar

- OpenCV Mouse click event

OpenCV Introduction

OpenCV is a widely used library for computer vision and image processing. The name stands for Open Source Computer Vision, and the library was originally developed by Intel. OpenCV was created to provide a common infrastructure for computer vision applications and to accelerate the use of machine perception in commercial products. It primarily focuses on image processing, face recognition, video capture and analysis, and object detection.

It is a BSD-licensed product, so businesses can easily use and modify the code. The OpenCV library has more than 2,500 optimized algorithms, which include a comprehensive set of both classic and state-of-the-art computer vision and machine learning algorithms.

These algorithms can be used to detect and recognize faces, classify human actions in videos, identify objects, track camera movements, track moving objects, and stitch images together to produce a high-resolution image of an entire scene. Furthermore, they can find similar images in an image database. OpenCV has a user community of more than 47 thousand people, and the estimated number of downloads exceeds 18 million.

What is Computer Vision?

Computer vision is an interdisciplinary scientific field that deals with how to reconstruct, interpret, and understand a 3D scene from its 2D images. From an engineering viewpoint, it seeks to automate tasks that the human visual system can do. Computer vision aims to understand the content of images.

Three fields overlap substantially with computer vision:

- Pattern recognition: It provides various methods to match patterns within an image.

- Photogrammetry: It is used to take accurate measurements from pictures.

- Image processing: It focuses on image manipulation.

What is image processing?

Image processing is a technique in which operations are performed on an image, for example to improve its quality. The image is taken as input, and an image is also produced as output after processing. Thus, image processing deals with image-to-image transformation.

Supported operating system by OpenCV:

- OpenCV supports several desktop operating systems, such as Linux, macOS, Windows, FreeBSD, NetBSD, and OpenBSD.

- It also runs on mobile operating systems such as iOS, Android, Maemo, and BlackBerry.

History of OpenCV

OpenCV was officially launched in 1999. It began as an Intel Research initiative to advance CPU-intensive applications, part of a series of projects that included real-time ray tracing and 3D display walls.

- The first 1.0 version of OpenCV was released in 2006.

- The second major version, OpenCV 2, was released in October 2009.

- In August 2012, the nonprofit foundation OpenCV.org took over support for the OpenCV library.

- In May 2016, Intel signed an agreement to acquire Itseez, a leading developer of OpenCV.

Applications of OpenCV

- It is used for object recognition.

- It is used in 2D and 3D feature toolkits.

- It is used to search for and retrieve images and videos.

- It recovers 3D structure from motion in video.

- It is commonly used in driverless cars.

- It is used for mobile robotics.

- It is used in analysis and identification.

- It is used in human-computer interaction.

- Furthermore, it is used for security purposes, for example in fingerprint and face recognition.

Features of OpenCV

- OpenCV provides a facility to capture and save videos.

- OpenCV performs feature detection.

- With the help of OpenCV, you can read and write images.

- OpenCV helps to process images, for example by transforming them, filtering them, or changing their quality.

- OpenCV provides ways to analyze video, such as estimating the motion in it, subtracting the background, and tracking objects.

- OpenCV is written in C++, an object-oriented language. In addition to the C++ interface, bindings are available for Python, Java, and MATLAB.

OpenCV with Python

To better understand OpenCV, you should first know some Python. Python is an object-oriented, high-level programming language created by Guido van Rossum that is very simple to learn. Because of its simplicity and code readability, Python became popular in a very short time. Compared with many other languages, it takes fewer lines of code to express the same idea.

One of the major benefits of Python is that you do not need to declare variables or compile the program before executing it, because it is an interpreted language.

OpenCV-Python is a library of Python bindings designed to solve computer vision problems. It offers two main advantages: first, the code is nearly as fast as C or C++, because it is actual C++ code working in the background; second, writing code in Python is very simple.

Python supports the NumPy and SciPy mathematical libraries. NumPy is highly optimized for numerical operations and provides a MATLAB-style syntax, which makes the work easier. OpenCV-Python is therefore a suitable tool for fast solutions to computer vision problems.

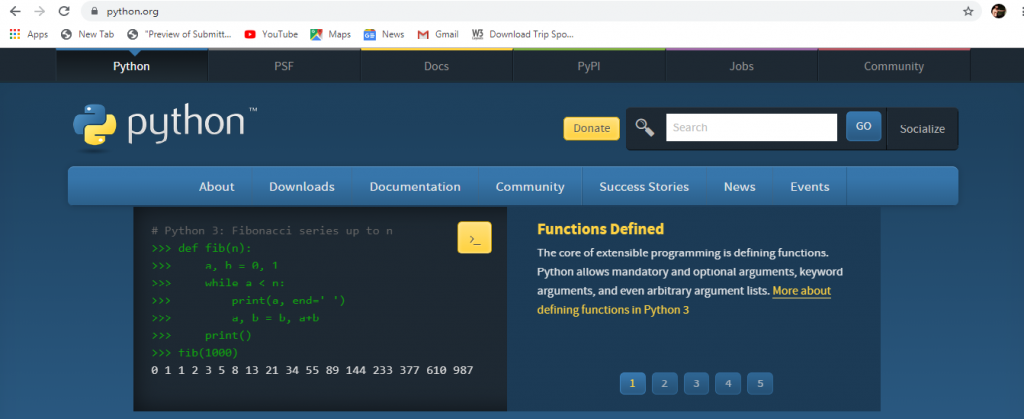

Install OpenCV on windows

Windows does not come with Python preinstalled. However, installation wizards are available for precompiled Python, NumPy, SciPy, and OpenCV. OpenCV's build system uses CMake for configuration and either Visual Studio or MinGW for compilation.

If you want support for depth cameras, including Kinect, you need to install OpenNI and SensorKinect first; both are available as prebuilt binaries with installation wizards.

On Windows, OpenCV 2 offers better support for 32-bit Python than for 64-bit Python, although most systems nowadays are 64-bit.

Some steps to install OpenCV on windows are as follows:

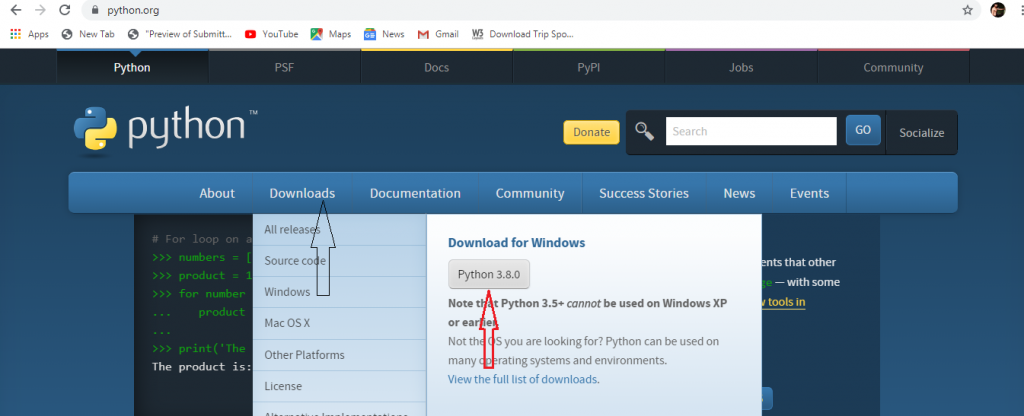

- First, install Python on your system. You can download it from its official website: https://www.python.org/downloads/

- Click the download button to download the Python installer.

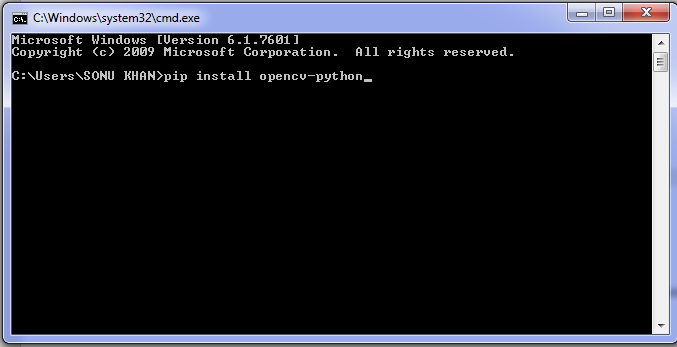

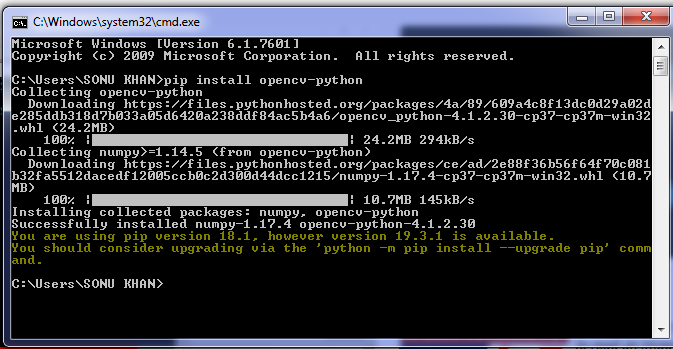

- After installing Python, open the command prompt and type the command below. Make sure you are connected to the internet.

pip install opencv-python

- Press Enter, and pip will download and install OpenCV and the packages it depends on.

Image processing with OpenCV

Whenever we work with images, we need to alter them to suit the task. You can apply filters, cut and paste, classify sections, or do whatever else you have in mind. This tutorial shows some ways to modify images. You will also learn how to detect skin tone in an image, sharpen an image, mark the contours of subjects, and detect crosswalks with the help of a line segment detector.

Conversion between various color spaces:

OpenCV has hundreds of methods that relate to the conversion of color spaces. Generally, three color spaces are common in computer vision: Gray, BGR, and HSV (hue, saturation, value).

- Gray is a color space that effectively discards the color information and keeps only shades of gray. It is very useful for intermediate processing, such as face detection.

- In HSV, hue is the color tone, saturation is the intensity of the color, and value denotes its darkness (or its brightness, at the opposite end of the scale).

- BGR is the blue-green-red color space, in which every pixel is a three-element array whose values represent the blue, green, and red components. Web developers will know a similar definition of colors, except that the order there is RGB. In BGR, the values (0, 255, 255) therefore mean no blue, full green, and full red, which is yellow.

OpenCV read image

OpenCV provides the imread() function to read an image. This function loads an image from the specified file and returns it. Reading an image in OpenCV with Python is very simple.

Syntax:

variable = cv2.imread('path of the file')

Example of reading image:

import cv2

img = cv2.imread('pics.jpg')

cv2.imshow('OutputImage',img)

cv2.waitKey(0)

cv2.destroyAllWindows()

OpenCV write image

The function cv2.imwrite() is used to write an image; that is, it saves an image to the specified file.

Syntax: status = cv2.imwrite('path of the file', image)

Example of writing image:

import cv2

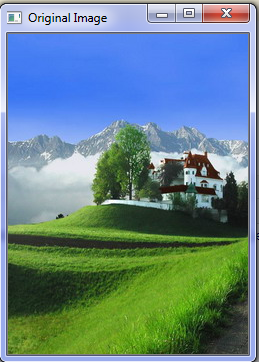

img = cv2.imread('photo.jpg')

cv2.imshow('Original Image',img)

cv2.imwrite('output.jpg',img)

cv2.imwrite('output.png',img)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output

The original image is displayed in a window, as shown above, and the output images are saved in the current working directory. The file format of each output image is determined by the extension given in the program; here the JPG image is also saved as a PNG.

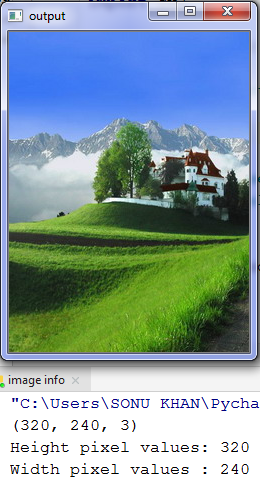

OpenCV image information

The shape attribute of an image gives its dimensions: the height and width in pixels and, for color images, the number of channels.

Example of image information:

import cv2

img = cv2.imread('photo.jpg')

cv2.imshow('output',img)

print(img.shape)

print("Height pixel values : ", img.shape[0])

print("Width pixel values : ", img.shape[1])

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

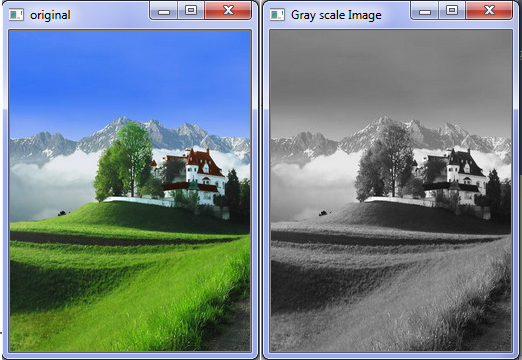

Convert RGB image into Gray scale

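OpenCV provides the method cv2.cvtColor(source, code), which converts an image from one color space to another; with the code cv2.COLOR_BGR2GRAY it converts a color image to grayscale. Note that cv2.imread() loads color images in BGR order, not RGB.

Example of converting RGB image into gray scale:

import cv2

img = cv2.imread("photo.jpg")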

cv2.imshow("original",img)

cv2.waitKey(0)

gray_img = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

# for showing image

cv2.imshow("GrayscaleImage",gray_img)

# for holding image

cv2.waitKey(0)

# clear all windows

cv2.destroyAllWindows()

Output:

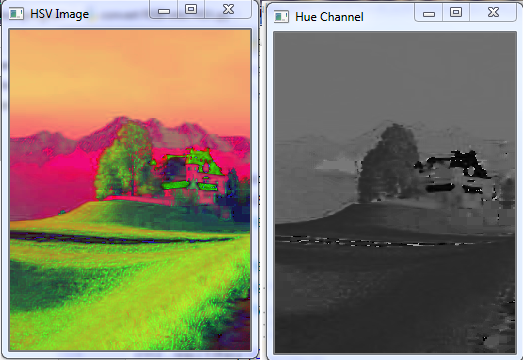

Convert RGB image into HSV color space

HSV separates the color itself (hue) from its saturation and brightness (value), which makes it a better choice than RGB for color-based tasks such as comparing two images or selecting regions by color.

Example of converting RGB image into HSV color space:

import cv2

img = cv2.imread('photo.jpg')

img_HSV = cv2.cvtColor(img, cv2.COLOR_BGR2HSV)

cv2.imshow('HSVImage',img_HSV)

cv2.imshow('HueChannel', img_HSV[:, :,0])

cv2.imshow('SaturationChannel', img_HSV[:, :,1])

cv2.imshow('ValueChannel', img_HSV[:, :,2])

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:


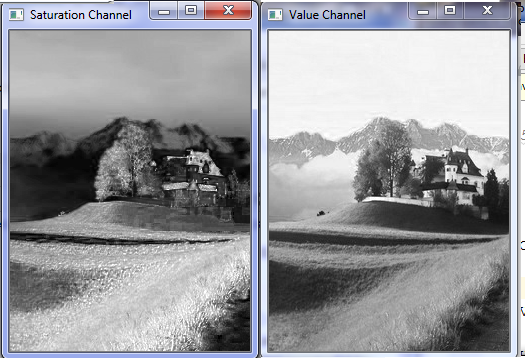

OpenCV image translation

Image translation shifts an image within its frame, horizontally, vertically, or both. It can be done with the following code:

Example of image translation:

import cv2

import numpy as np

# for reading image

img = cv2.imread("photo.jpg")

height, width = img.shape[:2]

print(height)

print(width)

quarter_height, quarter_width = height / 4, width / 4

print(quarter_height)

print(quarter_width)

n = np.float32([[1,0, quarter_width], [0,1, quarter_height]])

print(n)

# We use the warpAffine function to shift the image

img_translation = cv2.warpAffine(img, n, (width, height))

cv2.imshow('originalimage',img)

cv2.imshow('AfterTranslation',img_translation)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

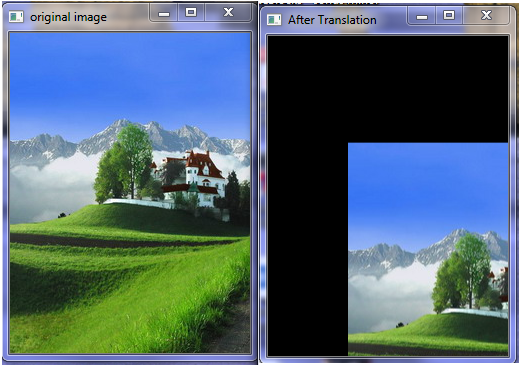

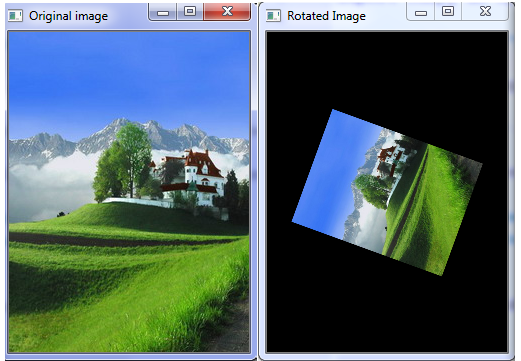

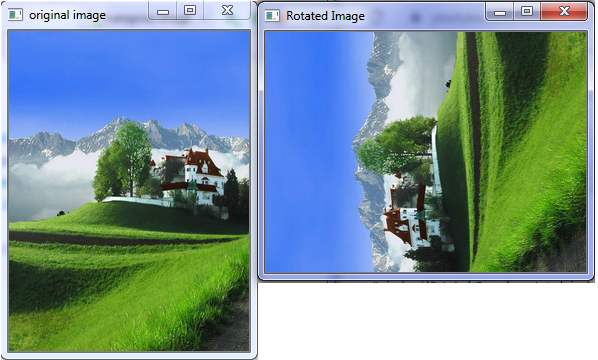

OpenCV image rotation

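OpenCV can rotate an image by any angle. In the example below, cv2.getRotationMatrix2D() builds a matrix that rotates the image by 70 degrees around its center and scales it by 0.5; cv2.warpAffine() then applies the matrix.

import cv2

import numpy as np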

img = cv2.imread("photo.jpg")

height,width = img.shape[:2]

rotation_matrix = cv2.getRotationMatrix2D((width/2,height/2), 70, .5)

rotated_image = cv2.warpAffine(img, rotation_matrix, (width,height))

cv2.imshow('Rotated Image',rotated_image)

cv2.imshow('Original image',img)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

How to capture an image by the camera using OpenCV?

The example below displays the captured image with the matplotlib library, so install it first. You can install it from within PyCharm; make sure you are connected to the internet.

To install the matplotlib library, follow the steps below:

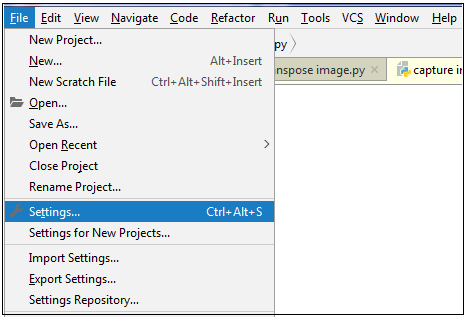

- Open PyCharm, click the File menu, and go to Settings, as shown in the picture below.

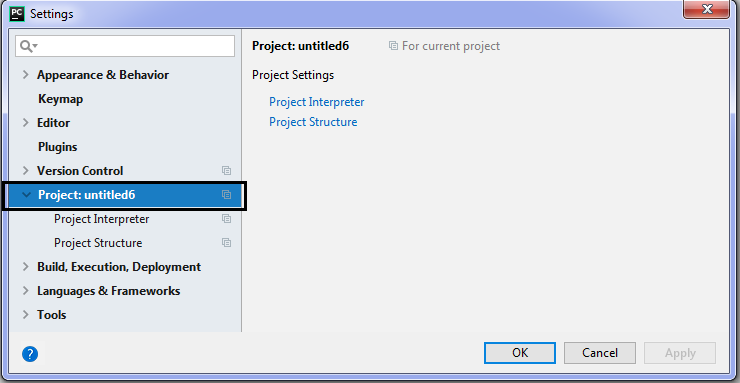

- Click on the project, which you have saved.

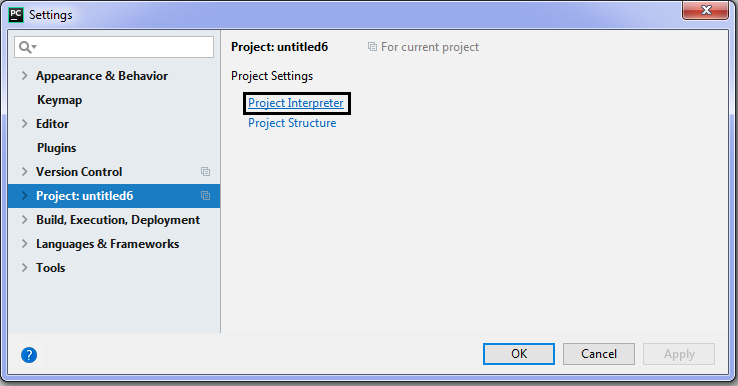

- Now, go on the project interpreter.

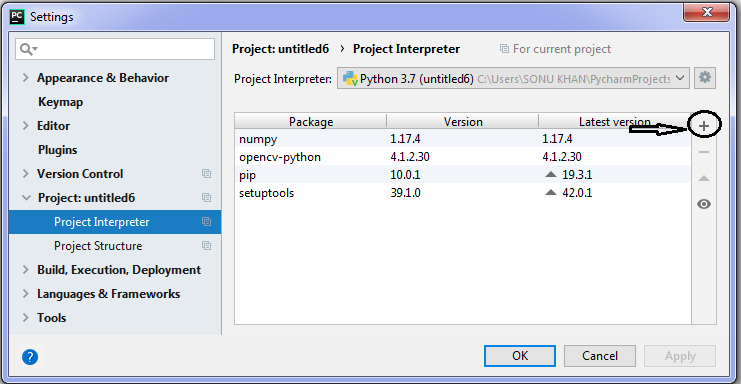

- Then click the plus (+) sign on the right side of the window.

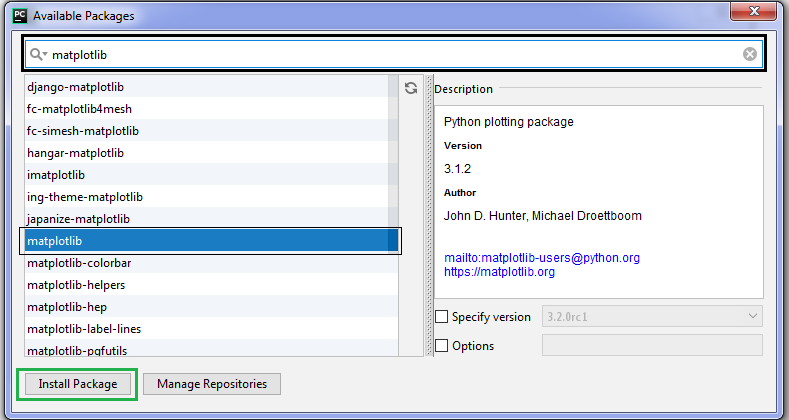

- You will now see a list of available packages. Search for matplotlib in the search bar, then click the Install Package button in the lower-left corner.

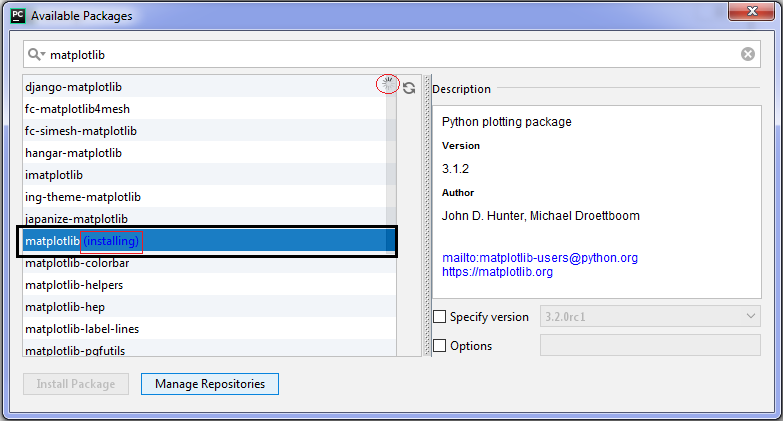

- When you click on the install package, then the installation process will be started. You can see in the below picture:

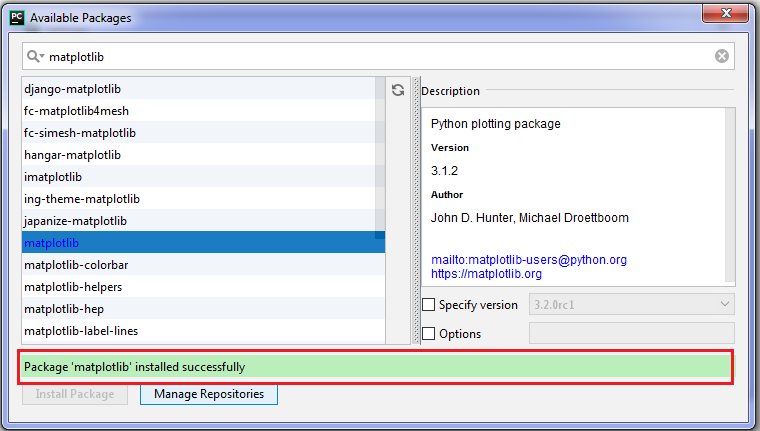

- After completing the installation process, it will be installed successfully.

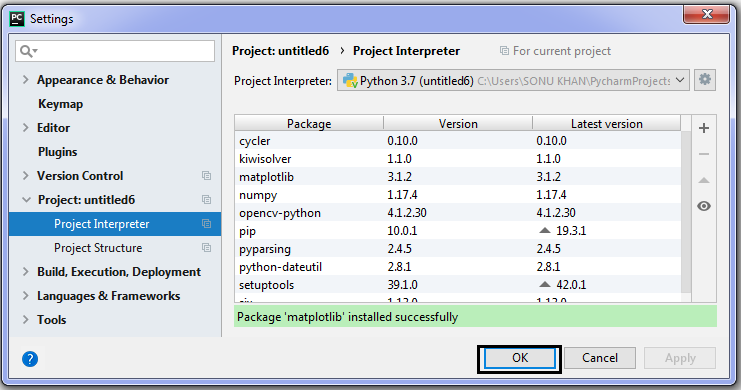

- Now, close this window and click on the ok button.

Example of capturing picture using OpenCV:

import cv2

import matplotlib.pyplot as plt

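cap = cv2.VideoCapture(0)

# Read one frame only if the camera opened successfully

ret, frame = cap.read() if cap.isOpened() else (False, None)

cap.release()

if ret:

    # matplotlib expects RGB, while OpenCV delivers BGR

    img = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)

    plt.imshow(img)

    plt.title("camera image")

    plt.xticks([])

    plt.yticks([])

    plt.show()

else:

    print("Could not read a frame from the camera")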

Output:

Face detection with OpenCV

Face detection is a computer vision technique that locates human faces in digital pictures. It is a special case of object detection. Face detection has attracted a lot of attention, especially in the fields of photography, security, and marketing.

OpenCV has built-in support for face detection and recognition, which has countless applications in the real world, from entertainment to security. OpenCV offers several ways to detect a face, including data files that describe specific types of trackable objects. Here we will mainly study HAAR cascade classifiers, which analyze the contrast between adjacent image regions to decide whether a given image or sub-image matches a known type.

HAAR cascades:

Whenever we try to identify an object, we start with some of its recognizable features. HAAR Cascade is a machine learning object detection algorithm proposed by Paul Viola and Michael Jones in their 2001 paper, Rapid Object Detection using a Boosted Cascade of Simple Features.

The HAAR cascade is a machine-learning-based approach in which a cascade function is trained from many positive and negative images. Positive images contain the object to be detected, and negative images do not.

Fortunately, OpenCV provides pre-trained HAAR cascades that are ready to use for detection. The level of similarity between two images can be measured by the distance between their corresponding features. The distance may be defined, for example, in terms of color coordinates.

HAAR-like features are one type of feature that is often used for real-time face tracking. Paul Viola and Michael Jones first used them for this purpose in the paper Robust Real-Time Face Detection, in 2001. Every HAAR-like feature describes the pattern of contrast among adjacent image regions.

The features of a given image may differ depending on the size of the region considered, which is called the window size. Two images that differ only in scale should yield similar features, although for different window sizes. So, it is helpful to generate features for multiple window sizes. Such a collection of features is called a cascade. Before working through the example, you should be familiar with NumPy and matplotlib. Make sure you have installed Python, matplotlib, and NumPy.

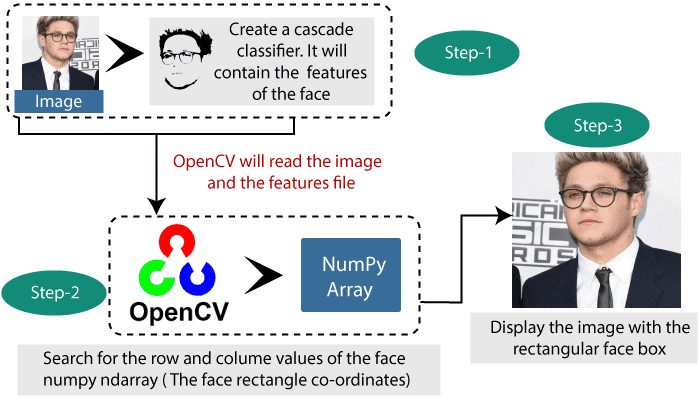

Below are the essential steps of face detection:

- First, make sure the required packages are installed and that you have an image to work with. Then create a cascade classifier, which holds the characteristics of a face. The path of the XML file that contains these face features is passed as the argument.

- Next, use OpenCV to read the image and convert it to grayscale with the help of COLOR_BGR2GRAY. The underlying data are NumPy arrays. Then search for the face coordinates in the image with detectMultiScale(). These coordinates describe the face rectangles.

- In the last step, draw a rectangular box around each face on the displayed image, using the returned coordinates. The scale factor shrinks the search image by 5% at each pass until a face is found.

- You will see the picture on the window.

As shown in the below figure: -

Capturing video using OpenCV

In OpenCV, a video is read one frame at a time; displaying the frames in quick succession produces the video.

First, import the OpenCV library. Next, call cv2.VideoCapture() to construct a VideoCapture object, which starts the camera on the user's machine. Its argument selects the camera: 0 denotes the built-in (default) camera, while higher indices select add-on cameras.

import cv2

# create a VideoCapture object, which starts the camera

video = cv2.VideoCapture(0)

# release the camera

video.release()

The release() method frees the camera. If you run the code above, the camera light turns on for a few milliseconds and then switches off, because nothing keeps the camera open. To keep it on for longer, import the time module and call time.sleep(), as shown in the code below:

import cv2, time

video = cv2.VideoCapture(0)

# pause the script for 4 seconds

time.sleep(4)

video.release()

The new line time.sleep(4) pauses the script for 4 seconds; the argument to time.sleep() is a duration in seconds. When the code runs, the camera therefore stays on for 4 seconds.

Now adding the window to display the video output with the help of this code:

import cv2, time

video = cv2.VideoCapture(0)

check, frame = video.read()

print(check)

print(frame)

time.sleep(2)

video.release()

Here, frame is a NumPy array that holds the first image the video captures, and check is a Boolean that tells whether the read succeeded.

Use of FFT in OpenCV

FFT stands for Fast Fourier Transform. It is a mathematical concept that plays an important role in much of the processing you apply to images and videos in OpenCV. Joseph Fourier was an 18th-century French mathematician who discovered and popularized many mathematical concepts and applied his work to studying the laws governing heat.

The Fourier transform is especially useful for manipulating images because it allows us to identify regions in an image where the signal (the pixel values) changes a lot and regions where it changes little. We can then treat these regions differently. These changes are the frequencies that make up the original image, and we have the power to separate them.

OpenCV implements various algorithms that let us process images and make sense of the data they contain. These are also reimplemented in NumPy to make our life even more comfortable. NumPy has a Fast Fourier Transform (FFT) package, which provides the fft2() method. This method computes the Discrete Fourier Transform (DFT) of the image.

The Fourier transform underlies several algorithms used for standard image processing operations in OpenCV, such as edge detection or line and shape detection. Let's examine the magnitude spectrum of an image using the Fourier transform, which gives a representation of the original image in terms of its changes. Imagine taking an image and dragging all the brightest pixels to the center, then gradually working outward to the border, where the darkest pixels end up. You would immediately see how many light and dark pixels your image contains.
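A magnitude spectrum can be computed with NumPy's FFT package alone. The following is a minimal sketch; the function name magnitude_spectrum and the log scaling factor of 20 are conventions chosen for this illustration, not something prescribed by the text above.

```python
import numpy as np

def magnitude_spectrum(gray):
    """Return the log-scaled magnitude spectrum of a 2D grayscale array."""
    f = np.fft.fft2(gray)          # 2D discrete Fourier transform
    f_shift = np.fft.fftshift(f)   # move the zero-frequency term to the center
    return 20 * np.log(np.abs(f_shift) + 1)  # +1 avoids log(0)

# A constant image has no changes, so all its energy sits in the center bin.
flat = np.full((8, 8), 100.0)
spectrum = magnitude_spectrum(flat)
peak = np.unravel_index(np.argmax(spectrum), spectrum.shape)
print("peak at:", peak)
```

For a real photograph, pass the grayscale image (for example, one loaded with cv2.imread(path, 0)) and display the result with matplotlib; detailed images spread more energy away from the center.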

Before examining these concepts, let's take a look at two other ideas that tie in with the Fourier transform:

- High pass filters

- Low pass filters

High Pass Filter: -

A high-pass filter (HPF) can be used to make an image sharper. It examines a region of an image and boosts the intensity of certain pixels, based on the difference in intensity with the surrounding pixels. These filters bring out fine details in the picture, exactly the opposite of a low-pass filter; they simply use a different convolution kernel. If there is no change in intensity, nothing is affected. But if one pixel is brighter than its immediate neighbors, it gets boosted.

For example:

Ex-1.

| 0 | -0.30 | 0 |

| -0.30 | 1 | -0.30 |

| 0 | -0.30 | 0 |

Ex-2.

| 0 | -1/2 | 0 |

| -1/2 | 2 | -1/2 |

| 0 | -1/2 | 0 |

A kernel is a set of weights that is applied to a region in a source image to generate a single pixel in the destination image. For example, a ksize of 5 implies that 25 (5 x 5) source pixels are considered in generating each destination pixel. After the sum of the differences between the intensity of the central pixel and that of its neighbors is calculated, the intensity of the central pixel is boosted if a high level of change is found, and left unchanged otherwise. This makes the high pass filter (HPF) particularly effective in edge detection.

Low Pass Filter: -

A low pass filter (LPF) is the opposite of a high pass filter. Whereas an HPF boosts the intensity of a pixel that differs from its neighbors, an LPF smooths the pixel if the difference with the surrounding pixels is smaller than a certain threshold. This is used for denoising and blurring. One of the most popular blurring filters, the Gaussian blur, is a low pass filter that attenuates the intensity of high-frequency signals.

Edge Detection in OpenCV

Edge detection is very important for both human and computer vision. It is used to detect the shapes in an image. Humans can identify many object types and their positions very quickly just by looking at a photograph or a rough line drawing. Indeed, when art emphasizes edges and poses, it often seems to convey the idea of an archetype, such as Rodin's The Thinker or Joe Shuster's Superman. Similarly, software can also reason about edges, poses, and archetypes.

OpenCV provides several edge-finding filters, such as Laplacian(), Sobel(), and Scharr(). These filters turn non-edge regions black and edge regions white or saturated colors. However, they are prone to misidentifying noise as edges; this can be reduced by blurring an image before trying to find its edges.

OpenCV also provides many blurring filters, including blur() (simple average), medianBlur(), and GaussianBlur(). The arguments of the edge-finding and blurring filters vary, but they always include ksize, an odd whole number that represents the width and height (in pixels) of the filter's kernel.

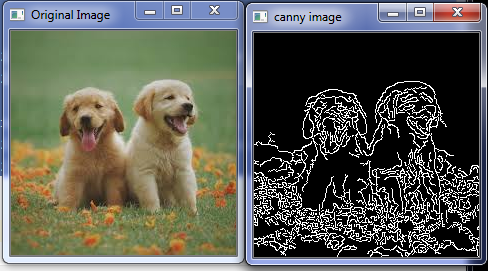

Example of edge detection:

We will use the Canny() function for edge detection.

import cv2

import numpy as np

img = cv2.imread("download.jpg")

cv2.imshow('Original Image',img)

cv2.waitKey(0)

canny = cv2.Canny(img, 20, 170)

cv2.imshow('canny image',canny)

cv2.waitKey(0)

Output: -

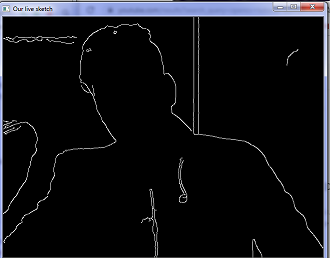

Edge detection with live camera

OpenCV provides a method for edge detection with live camera.

Example of live edge detection:

import cv2

def sketch(image):

    # Convert to grayscale, blur to suppress noise, then find edges

    img_gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)

    img_gray_blur = cv2.GaussianBlur(img_gray, (5, 5), 0)

    canny_edge = cv2.Canny(img_gray_blur, 10, 70)

    ret, mask = cv2.threshold(canny_edge, 70, 255, cv2.THRESH_BINARY)

    return mask

cap = cv2.VideoCapture(0)

while True:

    ret, frame = cap.read()

    if not ret:  # stop if no frame could be read

        break

    cv2.imshow('Our live sketch', sketch(frame))

    if cv2.waitKey(1) == 13:  # 13 is the Enter key

        break

cap.release()

cv2.destroyAllWindows()

Output:

Line and circle detection

Detecting edges is not only a common and important task in itself; it also forms the basis of other, more complex operations. Line and shape detection go hand in hand with edge and contour detection, so let's check how OpenCV implements them.

The technique behind line and shape detection is the Hough transform, which was created by Richard Duda and Peter Hart, who extended the work done by Paul Hough in the early 1960s.

Line Detection: -

First, we detect some lines with the help of the HoughLines() and HoughLinesP() functions. The only difference between the two is that the first uses the standard Hough transform and the second uses the probabilistic Hough transform, hence the P in the name. The probabilistic version is so called because it analyzes only a subset of the points and estimates the probability that these points all belong to the same line. It is an optimized version of the standard Hough transform: it is computationally less intensive and executes faster.

Circle detection: -

OpenCV also has a function for detecting circles, called HoughCircles(). It works in a very similar way to HoughLines(), but where HoughLinesP() uses the minLineLength and maxLineGap parameters to discard or join lines, HoughCircles() takes a minimum distance between circle centers and minimum and maximum values for the circle radius.

Images searching and retrieving using descriptors

Just as humans use their eyes and brain, OpenCV can identify the main features of an image and extract them into image descriptors. These features can then be used as a database, which enables image-based searches. Furthermore, we can use keypoints to stitch images together and compose a bigger image.

Some feature detecting algorithms:

- SURF: The SURF algorithm provides a way to identify blobs.

- Harris: It is beneficial for recognizing corners.

- SIFT: It is powerful to detect blobs.

- FAST: The FAST algorithm is also used for detecting corner.

- BRIEF: It helps to identify blobs.

Definition of feature in image

A feature is an area of interest in the image that is uniquely identifiable. As you can imagine, corners and high-frequency areas are good features, whereas patterns that repeat themselves a lot and low-frequency areas are not. Corners and edges are good features because they tend to mark the boundary between regions of an image. A blob, an area of an image that differs greatly from its neighboring areas, is also a good feature. Most feature detection algorithms revolve around the corners, edges, ridges, and blobs of an image. It is important to know what your input image looks like so that you can apply the best tool OpenCV offers.

OpenCV cropped image

To crop an image in OpenCV, slice the image array with the row and column ranges you want to keep, as in the code below.

Example of image cropping:

import cv2

import numpy as np

img = cv2.imread("photo.jpg")

height,width = img.shape[:2]

start_row, start_col = int(height*.15),int(width*.15)

end_row, end_col = int(height*.65),int(width*.65)

cropped = img[start_row:end_row, start_col:end_col]

cv2.imshow('original image',img)

cv2.waitKey(0)

cv2.imshow('cropped image',cropped)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

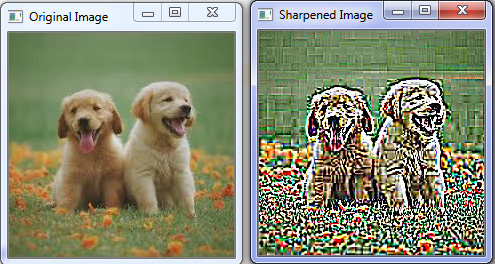

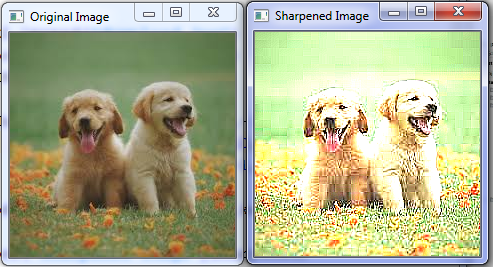

OpenCV image sharpening

Sharpening is the opposite of blurring: instead of smoothing differences between neighboring pixels, it emphasizes them. The result depends on the kernel values, as shown in the example below.

Example of image sharpening:

import cv2

import numpy as np

img = cv2.imread("download.jpg")

cv2.imshow('Original Image',img)

cv2.waitKey(0)

kernel_sharping = np.array([[-1,-1,-1,-1,-1],

[-1,-1,-1,-1,-1],

[-1,-1,25,-1,-1],

[-1,-1,-1,-1,-1],

[-1,-1,-1,-1,-1]])

sharpened = cv2.filter2D(img,-1,kernel_sharping)

cv2.imshow('Sharpened Image',sharpened)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

- If we change the kernel matrix values, the output will be different, so you can adjust the kernel according to your requirements.

import cv2

import numpy as np

img = cv2.imread("download.jpg")

cv2.imshow('Original Image',img)

cv2.waitKey(0)

kernel_sharping = np.array([[-1,-1,-1],

[-1,10,-1],

[-1,-1,-1]])

sharpened = cv2.filter2D(img,-1,kernel_sharping)

cv2.imshow('Sharpened Image',sharpened)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

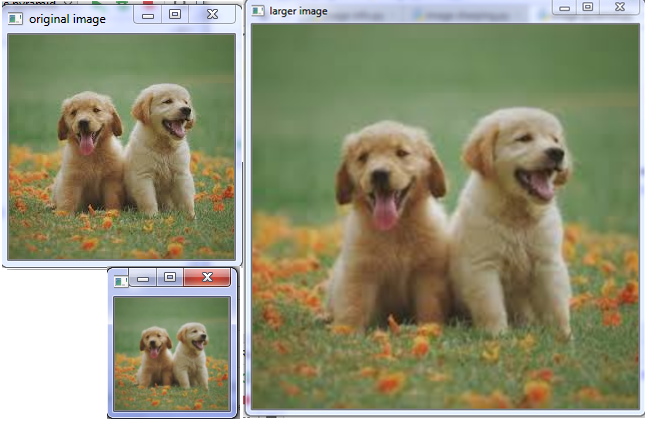

OpenCV image Pyramid

An image pyramid is a simple technique for resizing an image: the pyrDown() function halves the width and height of an image, and pyrUp() doubles them.

Example of image pyramid:

import cv2

import numpy as np

img = cv2.imread("download.jpg")

smaller = cv2.pyrDown(img)

larger = cv2.pyrUp(img)

cv2.imshow('original image',img)

cv2.imshow('smaller image',smaller)

cv2.imshow('larger image',larger)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

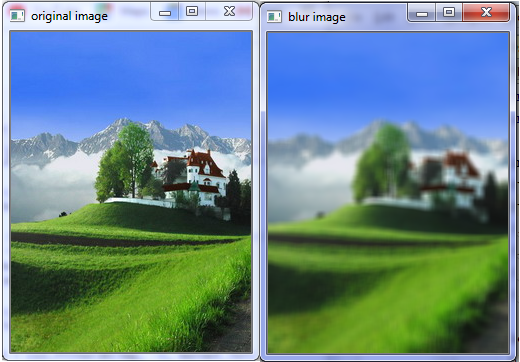

OpenCV blur image

OpenCV has a method to blur an image.

Example of blur image

import cv2

import numpy as np

img =cv2.imread("photo.jpg")

cv2.imshow('original image',img)

cv2.waitKey(0)

kernel = np.ones((7,7),np.float32)/49

blurred = cv2.filter2D(img,-1,kernel)

cv2.imshow('blur image',blurred)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output

OpenCV image transpose

The cv2.transpose() function swaps the rows and columns of an image without losing any information. The result is the image rotated by 90 degrees and mirrored; for a pure rotation, use cv2.rotate().

Example of image transpose:

import cv2

import numpy as np

img = cv2.imread('photo.jpg')

rotated_image = cv2.transpose(img)

cv2.imshow('Rotated Image',rotated_image)

cv2.imshow('original image',img)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

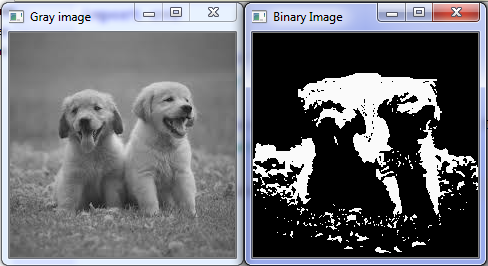

OpenCV converts RGB image into Binary image

A binary image is different from a grayscale image. In a binary image, every pixel takes one of only two values: 0, which represents black, and a maximum value (1, or 255 in an 8-bit image), which represents white.

Example of RGB image into binary image:

import cv2

img = cv2.imread("download.jpg",0)

cv2.imshow("Gray image",img)

cv2.waitKey(0)

ret, bw = cv2.threshold(img, 137, 255, cv2.THRESH_BINARY)

cv2.imshow("Binary Image",bw)

cv2.waitKey(0)

cv2.destroyAllWindows()

Output:

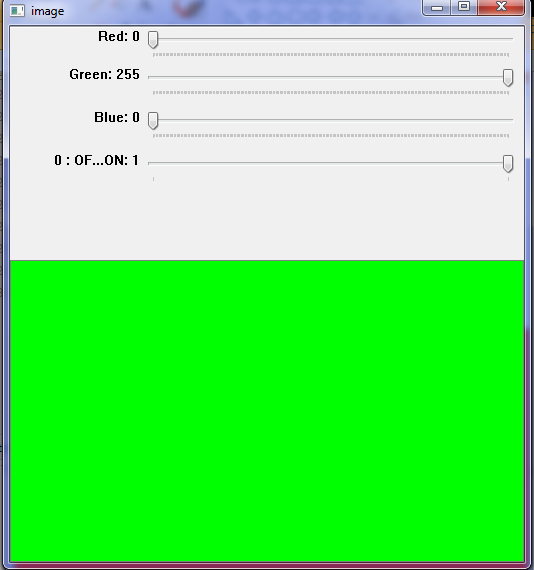

OpenCV Create Color Trackbar

A trackbar is a GUI component with which you can vary a value within a given range.

Example of color Trackbar:

import cv2

import numpy as np

def nothing(x):

    pass

img = np.zeros((300,512,3),np.uint8)

cv2.namedWindow('image')

# color components

cv2.createTrackbar('Red','image',0,255,nothing)

cv2.createTrackbar('Green','image',0,255,nothing)

cv2.createTrackbar('Blue','image',0,255,nothing)

switch = '0 : OFF \n 1 : ON'

cv2.createTrackbar(switch,'image',0,1,nothing)

while True:

    cv2.imshow('image', img)

    if cv2.waitKey(1) == 13:  # 13 is the Enter key

        break

    r = cv2.getTrackbarPos('Red', 'image')

    g = cv2.getTrackbarPos('Green', 'image')

    b = cv2.getTrackbarPos('Blue', 'image')

    s = cv2.getTrackbarPos(switch, 'image')

    if s == 0:

        img[:] = 0

    else:

        img[:] = [b, g, r]

cv2.destroyAllWindows()

Output:

OpenCV Mouse click event

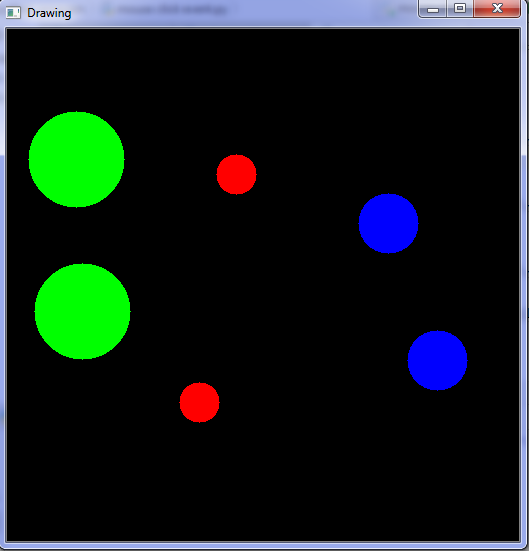

Clicking a mouse button generates an event that your program can handle. In the example below, a left double-click draws a green circle, a single middle click draws a red circle, and a single right click draws a blue circle, as shown in the output.

For example:

import cv2

import numpy as np

windowName = 'Drawing'

img = np.zeros((512, 512, 3), np.uint8)

cv2.namedWindow(windowName)

# mouse callback function

def draw_circle(event, x, y, flags, param):

    if event == cv2.EVENT_LBUTTONDBLCLK:

        cv2.circle(img, (x, y), 48, (0, 255, 0), -1)

    if event == cv2.EVENT_MBUTTONDOWN:

        cv2.circle(img, (x, y), 20, (0, 0, 255), -1)

    if event == cv2.EVENT_RBUTTONDOWN:

        cv2.circle(img, (x, y), 30, (255, 0, 0), -1)

cv2.setMouseCallback(windowName, draw_circle)

while True:

    cv2.imshow(windowName, img)

    if cv2.waitKey(1) == 13:

        break

cv2.destroyAllWindows()

Output:

Prerequisite

To learn OpenCV with Python, you should have the basic knowledge of Python.

Audience

Our OpenCV tutorial is designed to help beginners and professionals.

Problem

We assure you that you will not find any issue with this OpenCV tutorial. But if there is any mistake or error, please post the error in the contact form.