Kaggle Machine Learning Project

What is Kaggle?

Data scientists and machine learning enthusiasts connect online at Kaggle. Users of Kaggle can work together, access and share datasets, use notebooks with GPU integration, and compete with other data scientists to solve data science problems.

Kaggle was launched in 2010 by Anthony Goldbloom and Jeremy Howard and was acquired by Google in 2017. Its tools and resources aim to help professionals and students achieve their goals in data science.

In 2021, Kaggle had over 8 million registered users. Each week you can use up to 30 hours of GPU time and 20 hours of TPU time on Kaggle's cloud resources.

On Kaggle, you can upload your own datasets and download those shared by others. You can also browse other people's datasets and notebooks and start conversations with them. Your activity on the platform is scored, and your score rises as you help others and share useful knowledge. As soon as you begin earning points, you are added to a live leaderboard of 8 million Kaggle members.

Kaggle also provides machine learning projects.

Here we have selected 16 beginner projects for any machine learning enthusiast.

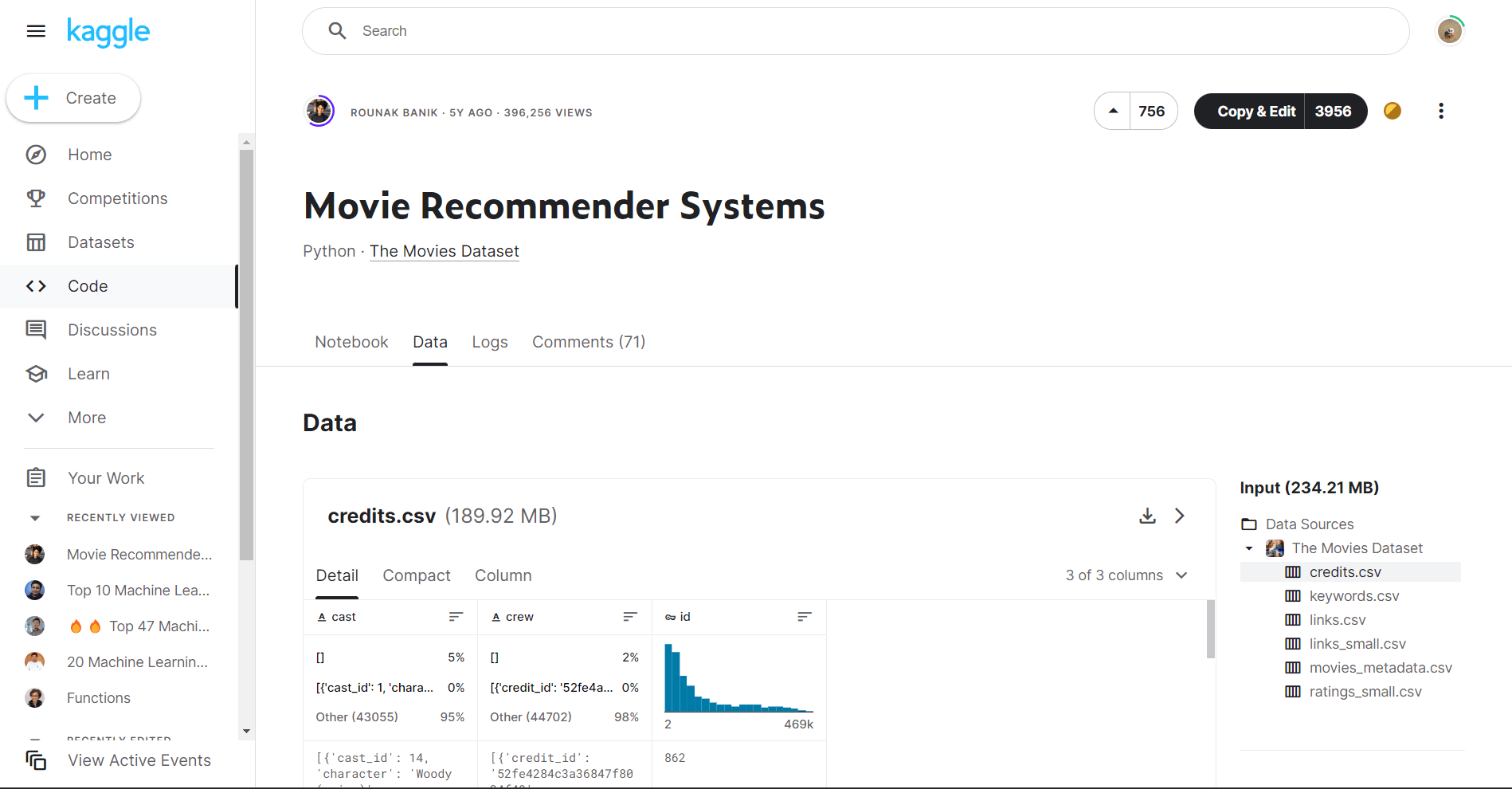

1. Movie Recommendations with MovieLens Dataset

Almost everyone streams movies and TV shows these days. Deciding what to watch next can be difficult, so streaming services suggest titles based on a viewer's past viewing habits and personal preferences.

Machine learning is used to accomplish this, making it a simple and enjoyable project for novices. Novice programmers can build their skills by working with the MovieLens dataset in Python or R. The dataset described here contains more than 1 million ratings of 3,900 films, contributed by more than 6,000 users.
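
As a rough illustration of how suggestions can be derived from past ratings, here is a minimal user-based collaborative filtering sketch. The ratings are made-up toy values, not MovieLens data, and the names `cosine` and `recommend` are hypothetical helpers; a real project would load the actual rating files and likely use a dedicated library.

```python
from math import sqrt

# Toy ratings (user -> {movie: rating on a 1-5 scale}).
# Made up for illustration; NOT taken from the MovieLens dataset.
ratings = {
    "ana":   {"Alien": 5, "Blade Runner": 5, "Notting Hill": 1},
    "ben":   {"Alien": 5, "Blade Runner": 4, "Dune": 5},
    "chloe": {"Notting Hill": 5, "Love Actually": 4, "Alien": 1},
    "dev":   {"Alien": 4, "Blade Runner": 5},
}

def cosine(a, b):
    """Cosine similarity between two sparse rating dicts."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[m] * b[m] for m in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)

def recommend(user, ratings, top_n=1):
    """Rank movies the user has not rated by the similarity-weighted
    average rating given by other users."""
    scores, weights = {}, {}
    for other, their_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their_ratings)
        if sim <= 0:
            continue  # ignore users with no overlap
        for movie, rating in their_ratings.items():
            if movie in ratings[user]:
                continue  # already seen
            scores[movie] = scores.get(movie, 0.0) + sim * rating
            weights[movie] = weights.get(movie, 0.0) + sim
    ranked = sorted(scores, key=lambda m: scores[m] / weights[m], reverse=True)
    return ranked[:top_n]

print(recommend("dev", ratings, top_n=3))
```

With this toy data, the user who liked two science-fiction films is pointed first to the film rated highly by the most similar viewers.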

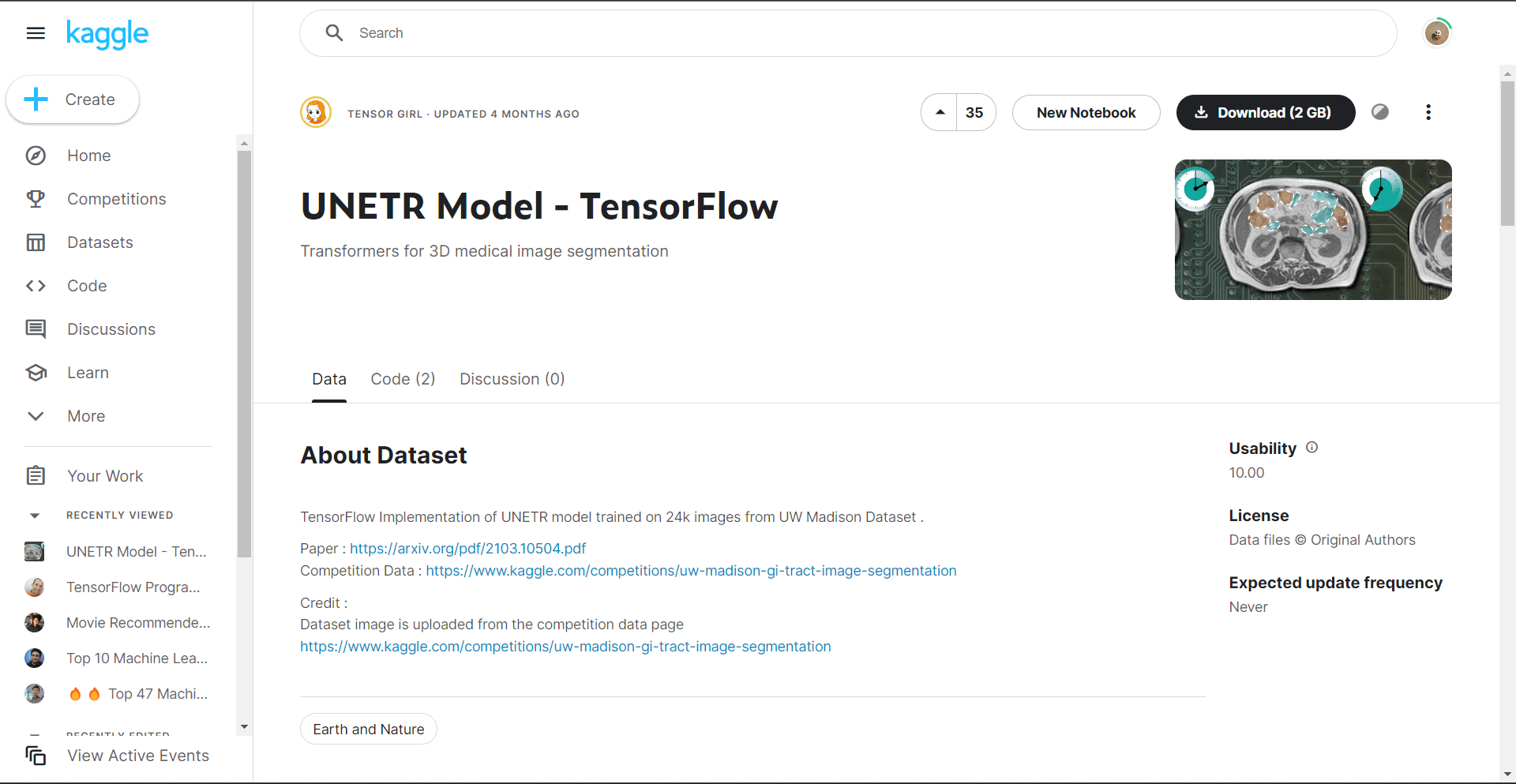

2. TensorFlow

This open-source artificial intelligence library is a great resource for beginners learning machine learning. They can use TensorFlow to build data flow graphs, Java projects, and a variety of other applications. It also provides Java APIs.

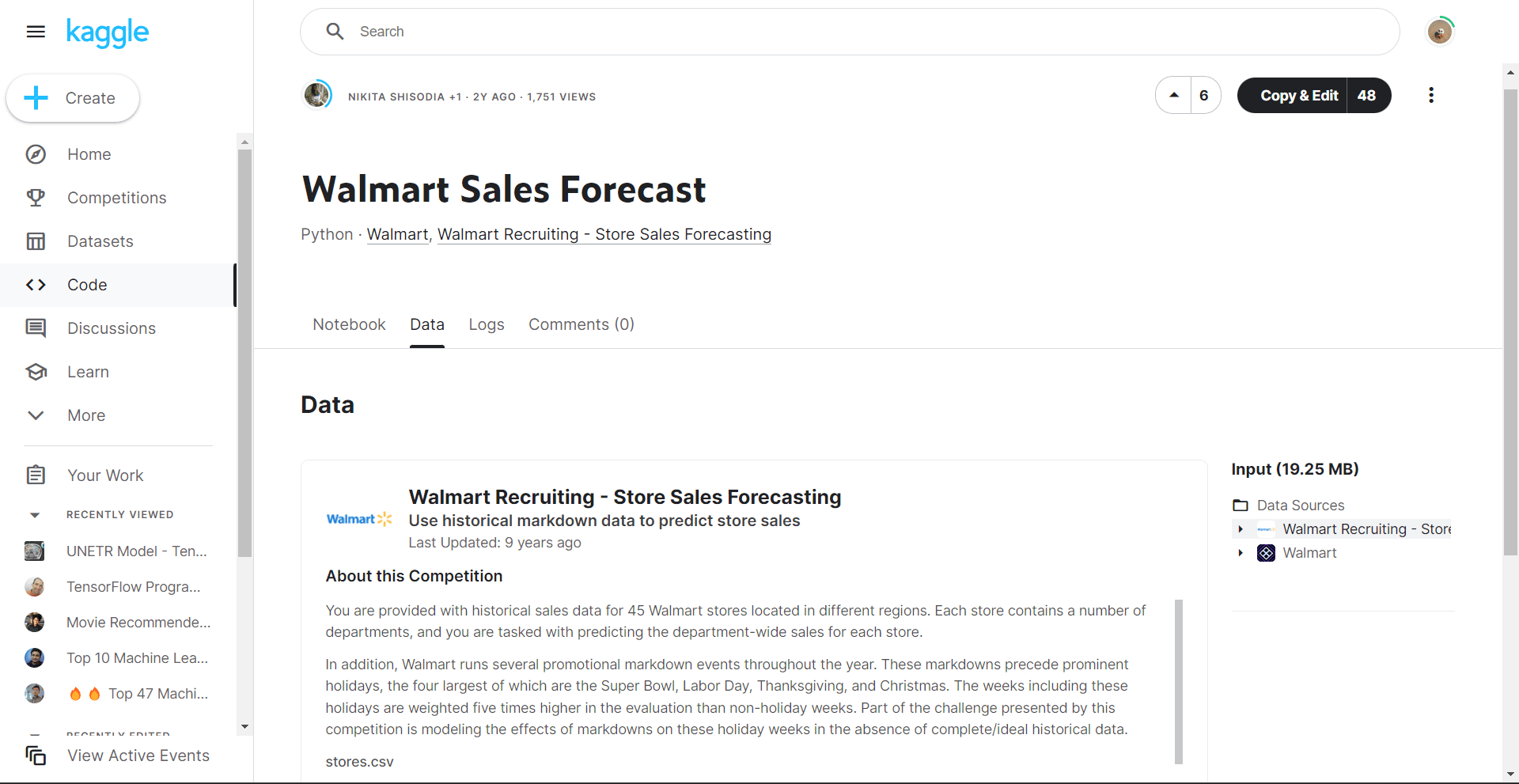

3. Sales Forecasting with Walmart

Even though it may not be possible to predict future sales precisely, businesses can come close with machine learning. Take Walmart as an example: developers can obtain weekly sales data by location and department for 98 products across 45 stores.

The main aim of a project of this scale is to make better data-driven decisions for channel optimization and inventory planning.
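
Before reaching for a learned model, it often helps to have a simple baseline to beat. The sketch below forecasts next week's sales as the mean of the most recent weeks. The function name `moving_average_forecast` and the sales figures are hypothetical and are not drawn from the Walmart data.

```python
def moving_average_forecast(weekly_sales, window=4):
    """Forecast next week's sales as the mean of the last `window` weeks."""
    if len(weekly_sales) < window:
        raise ValueError("need at least `window` observations")
    recent = weekly_sales[-window:]
    return sum(recent) / window

# Hypothetical weekly sales for one store/department pair (illustrative numbers).
history = [100.0, 120.0, 110.0, 130.0, 125.0, 135.0]
forecast = moving_average_forecast(history, window=4)
print(forecast)
```

Any machine learning model built for the project should produce a lower error than a baseline of this kind on held-out weeks.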

4. Stock Price Predictions

Like sales forecasting, stock price prediction draws on historical data, volatility indices, and fundamental indicators. Beginners can start with a small project like this and use stock-market datasets to make forecasts for the coming months.

It's a great way to get comfortable making predictions using large datasets. Download a stock market dataset from Quantopian or Quandl to get started.

5. Human Activity Recognition with Smartphones

Nowadays, many mobile devices automatically recognize activities such as running, cycling, or jogging.

Novice machine learning engineers can experiment with this kind of project using a dataset of fitness activity records, gathered from a few people with mobile devices equipped with inertial sensors. Learners can then build classification models that accurately predict future activities. The project also helps them understand how to approach multi-class classification problems.

6. Wine Quality Predictions

It can be difficult to find wines you like when you're in a wine shop. The Wine Quality Data Set provides measurements that help predict quality, which makes for an enjoyable machine learning experiment. This project gives ML newcomers practice with data exploration, data visualisation, regression modelling, and R programming.

7. Breast Cancer Prediction

This machine learning project uses a dataset to predict whether a breast tumour is likely to be malignant or benign. The variables considered include clump thickness, bare nuclei, and mitosis. Working through it in R is a good way for novice machine learning practitioners to get started.

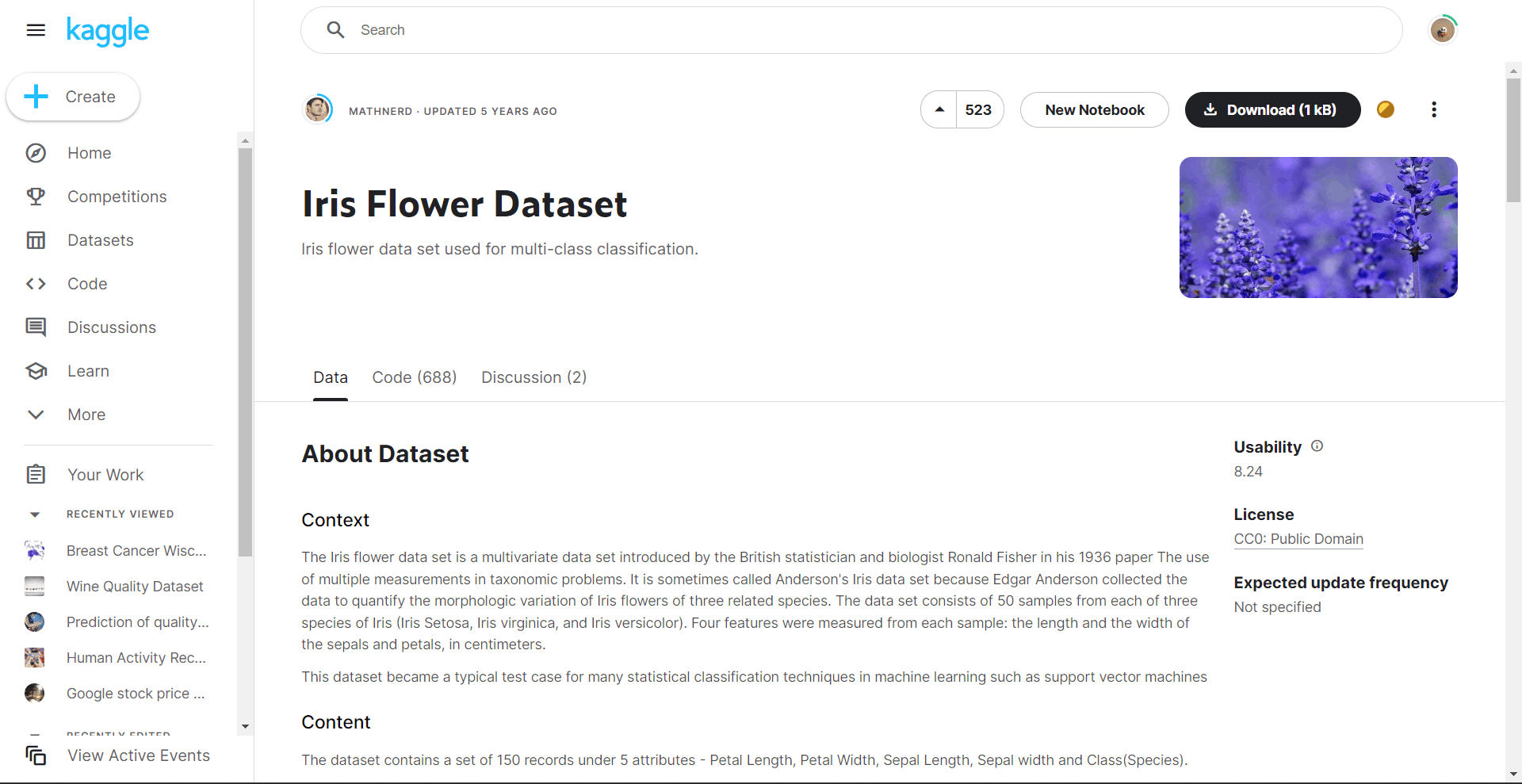

8. Iris Classification

The Iris Flowers dataset is one of the best-known, oldest, and simplest machine learning tasks for beginners. The project teaches the fundamentals of handling numerical values and data. The data points include the length and width of the sepals and petals. A successful project uses machine learning to sort irises into one of three species.
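
To make the classification step concrete, here is a minimal k-nearest-neighbours sketch over a handful of sample measurements typed in for illustration. The function name `predict_species` is hypothetical, and a real project would load the full dataset and evaluate on held-out flowers.

```python
from collections import Counter
from math import dist

# (sepal length, sepal width, petal length, petal width) in cm -> species.
# A small illustrative sample, not the full dataset.
train = [
    ((5.1, 3.5, 1.4, 0.2), "setosa"),
    ((4.9, 3.0, 1.4, 0.2), "setosa"),
    ((4.7, 3.2, 1.3, 0.2), "setosa"),
    ((7.0, 3.2, 4.7, 1.4), "versicolor"),
    ((6.4, 3.2, 4.5, 1.5), "versicolor"),
    ((6.9, 3.1, 4.9, 1.5), "versicolor"),
    ((6.3, 3.3, 6.0, 2.5), "virginica"),
    ((5.8, 2.7, 5.1, 1.9), "virginica"),
    ((7.1, 3.0, 5.9, 2.1), "virginica"),
]

def predict_species(sample, train, k=3):
    """Return the majority species among the k closest training flowers."""
    neighbours = sorted(train, key=lambda row: dist(sample, row[0]))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

print(predict_species((5.0, 3.4, 1.5, 0.2), train))
print(predict_species((6.5, 3.0, 5.8, 2.2), train))
```

Euclidean distance works here because all four measurements share the same unit; datasets with mixed units usually need scaling first.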

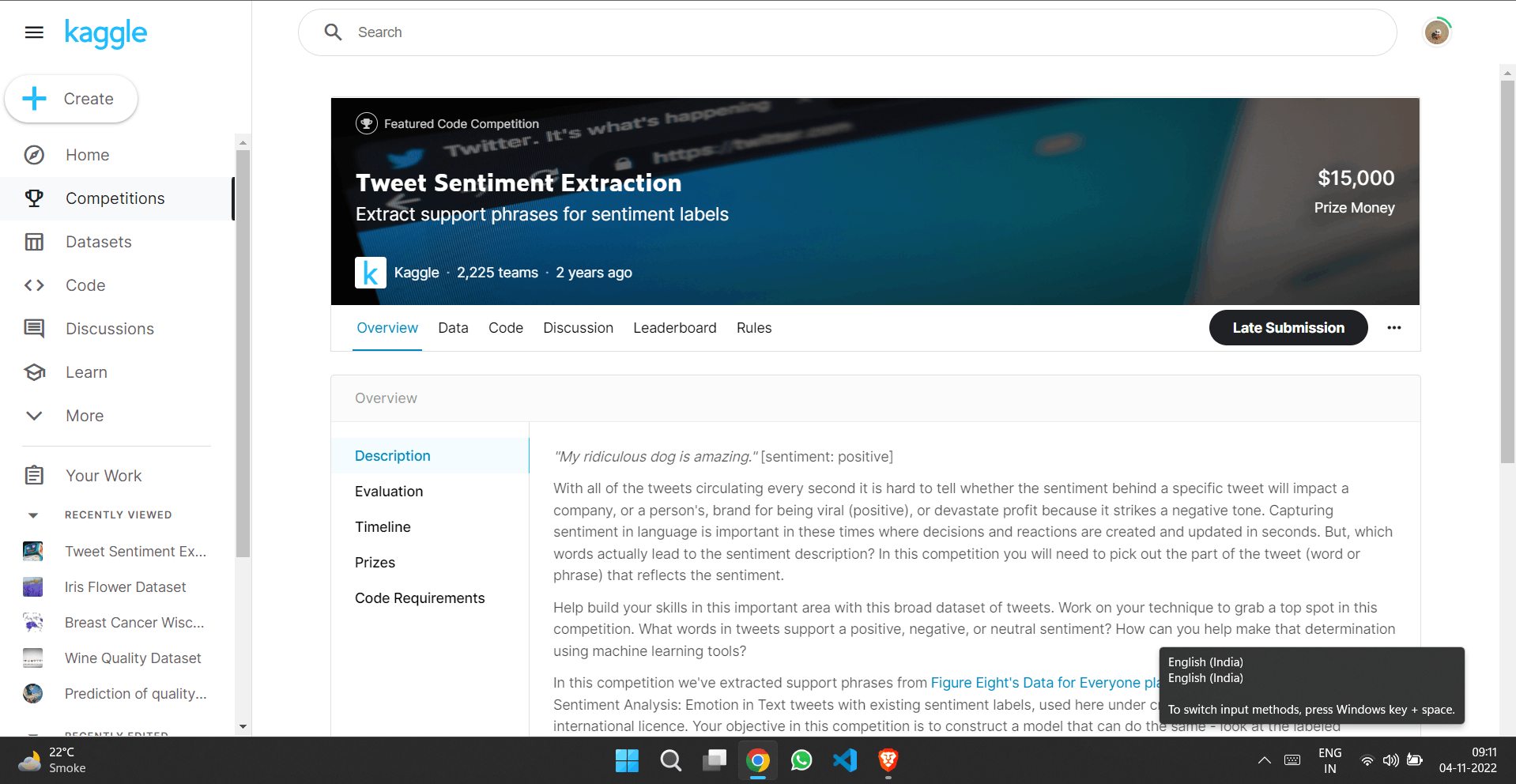

9. Sorting of Specific Tweets on Twitter

It would be useful to quickly filter tweets that contain particular terms and information. Fortunately, there is a beginner-level machine learning project for this: programmers develop an algorithm that takes scraped tweets, processed by a natural language processor, and identifies which are most likely to fit particular topics.
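
A sensible first step, before training any model, is a plain keyword filter over tokenized text. The sketch below is a rule-based stand-in, not a learned classifier; the tweets, the keyword set, and the helper names `tokenize` and `matches_topic` are all made up for illustration.

```python
import re

def tokenize(text):
    """Lowercase a tweet and split it into word tokens.
    Punctuation and the '#' of hashtags are dropped."""
    return re.findall(r"[a-z0-9']+", text.lower())

def matches_topic(tweet, keywords, min_hits=1):
    """True if the tweet contains at least `min_hits` distinct topic keywords."""
    tokens = set(tokenize(tweet))
    return len(tokens & keywords) >= min_hits

# Made-up example tweets and a hypothetical keyword list for one topic.
tweets = [
    "Training my first neural network on Kaggle today! #MachineLearning",
    "Best pizza in town, hands down.",
    "New GPU arrived, time to train a bigger model",
]
ml_keywords = {"kaggle", "machinelearning", "gpu", "model", "neural"}

selected = [t for t in tweets if matches_topic(t, ml_keywords)]
print(selected)
```

A machine learning version would replace the fixed keyword set with a classifier trained on labelled tweets, using the same tokens as features.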

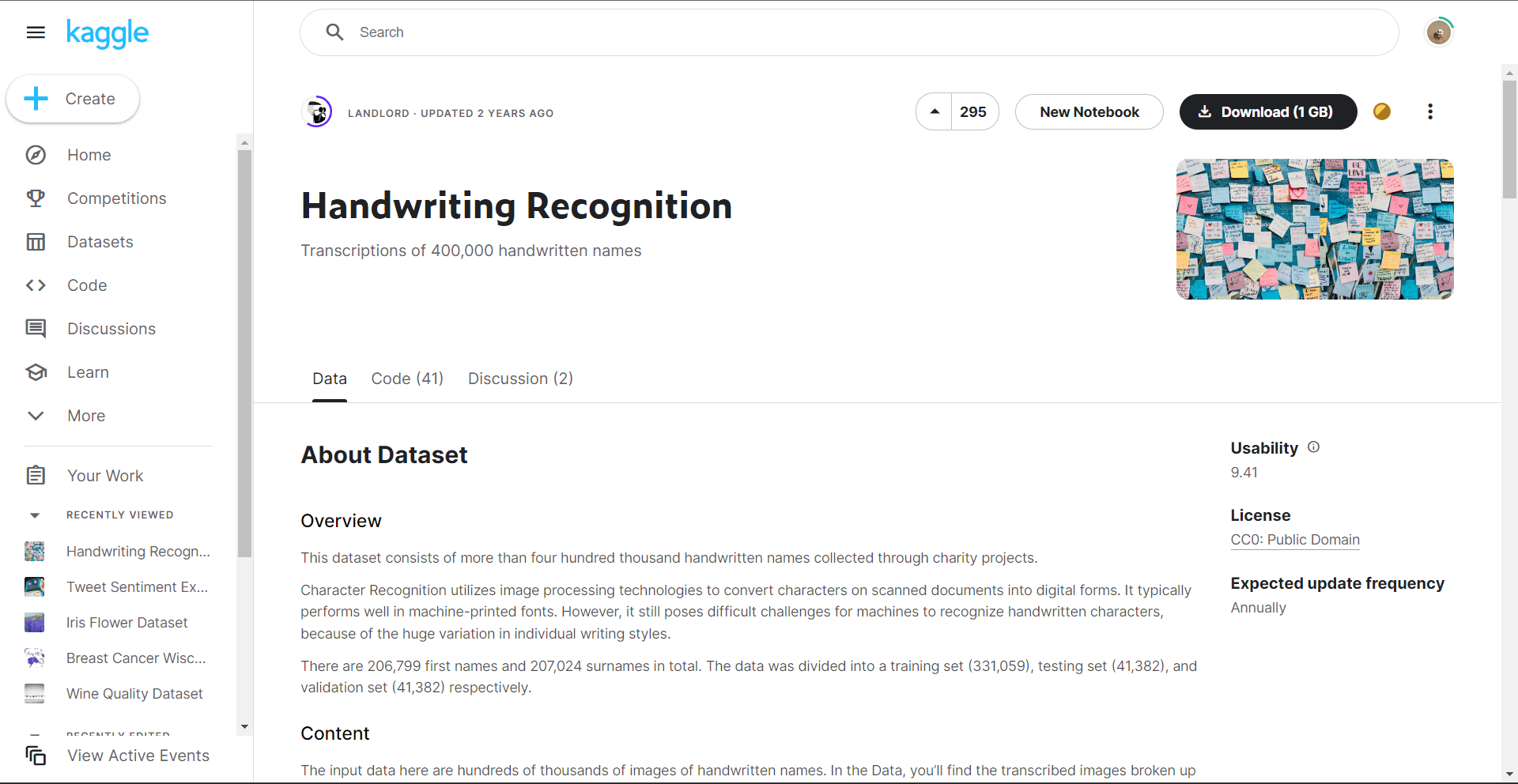

10. Turning Handwritten Documents into Digitized Versions

Deep learning and neural networks, two machine learning techniques that are crucial for image recognition, are ideal to practise in this kind of project. Beginners can also learn how to work with the MNIST dataset, how to apply logistic regression, and how to convert pixel data into images.

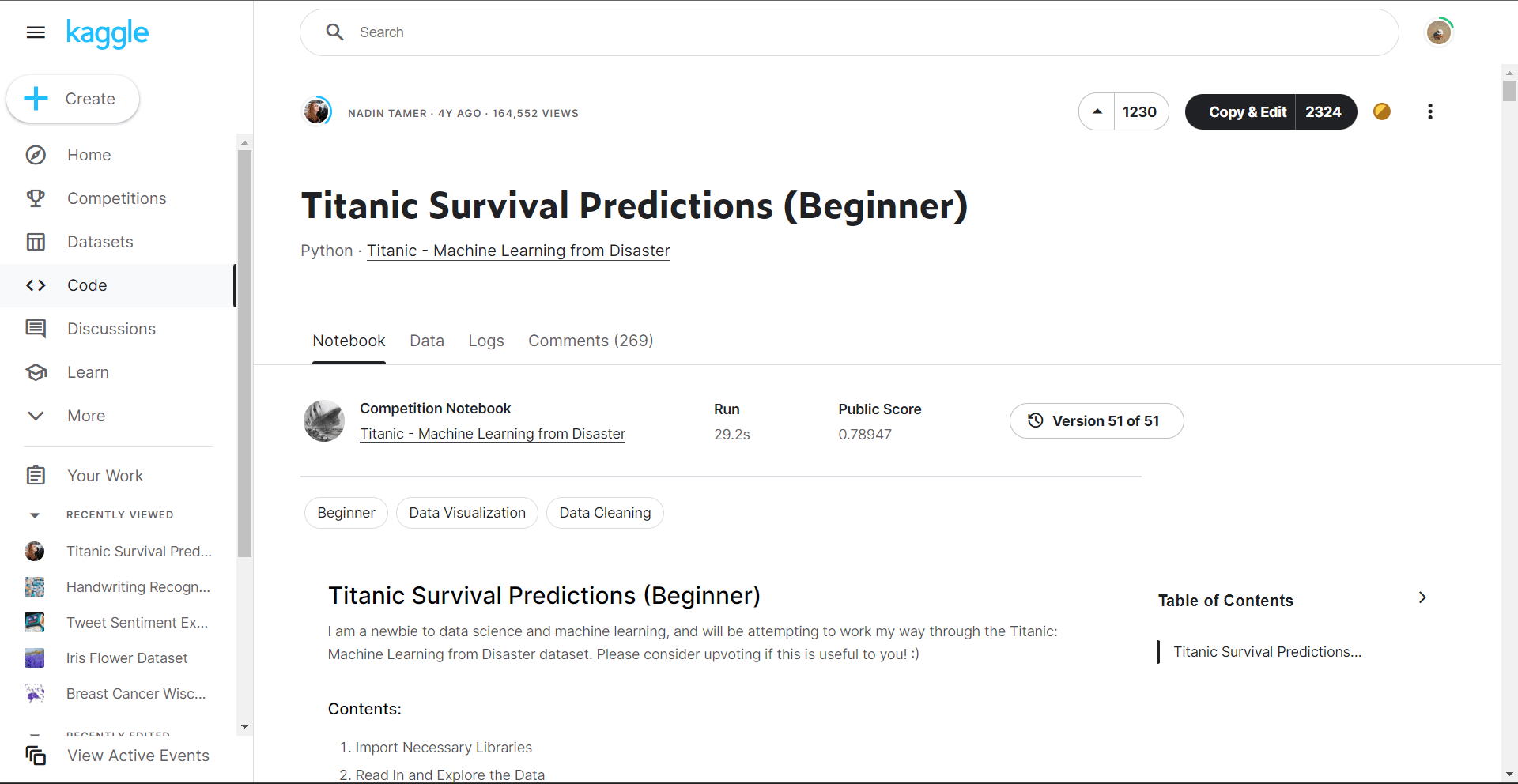

11. Kaggle Titanic Prediction

This dataset covers passengers who boarded the Titanic. It contains information such as each passenger's gender, cabin, ticket price, and age. Using this information, you must predict whether each passenger survived.

The dataset is clean, which makes it a good one on which to train a first machine learning model.

12. Retail Price Optimisation

This machine learning project is an exciting one for those just getting started. In the retail sector, control over pricing is key to business success: it is crucial to establish an acceptable price range and adjust product prices accordingly. Amazon was one of the first companies to use machine learning for retail pricing optimization.

Users can draw on available resources to acquire training data. A model should then be trained on that data using an appropriate method.

Using this approach, the price optimization model can be built. The best model incorporates sales data, product characteristics, information about the product's structure, textual data, and pricing rules. The algorithm generates a vast number of pricing options and, by taking into account millions of relationships within a product, chooses the best price for it in real time.
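
The core idea of scoring many candidate prices and keeping the best one can be sketched in a few lines. Everything here is an assumption made for illustration: the linear demand curve, its parameters, and the names `expected_demand` and `best_price`. In a real project the demand estimate would come from a model trained on sales data.

```python
def expected_demand(price, base_demand=100.0, sensitivity=2.0):
    """Hypothetical linear demand curve: units sold fall as price rises.
    A trained model would replace this function."""
    return max(0.0, base_demand - sensitivity * price)

def best_price(candidates, unit_cost):
    """Return the candidate price with the highest expected profit."""
    def profit(price):
        return (price - unit_cost) * expected_demand(price)
    return max(candidates, key=profit)

# Illustrative candidate prices and unit cost.
candidates = [10, 15, 20, 25, 30, 35, 40]
optimal = best_price(candidates, unit_cost=10)
print(optimal)
```

Under this assumed demand curve, profit peaks where the gain from a higher margin is exactly offset by the loss in units sold.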

13. Heart Disease Prediction

The 10-year risk of coronary heart disease (CHD) is the dependent (target) variable. It is 0 for individuals who never developed heart disease and 1 for patients who did, which makes this a binary classification problem. Feature selection can be applied to this dataset to determine which factors most influence heart disease risk. A classification model can then be fitted to the independent variables.
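
One simple form of feature selection ranks each feature by the absolute value of its Pearson correlation with the 0/1 target. The sketch below assumes hypothetical column names and made-up patient values; it is one filter-style approach among several and is not necessarily the method used in any particular notebook.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

# Made-up records for six patients; column names are hypothetical.
features = {
    "age":         [40, 45, 50, 60, 65, 70],
    "resting_hr":  [70, 80, 72, 78, 71, 79],
    "cholesterol": [200, 240, 210, 250, 230, 260],
}
target = [0, 0, 0, 1, 1, 1]  # 1 = developed heart disease

# Rank features from most to least correlated with the target.
ranked = sorted(
    features,
    key=lambda name: abs(pearson(features[name], target)),
    reverse=True,
)
print(ranked)
```

Correlation only captures linear, one-feature-at-a-time relationships, so it is best treated as a first pass before model-based selection.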

14. MNIST Digit Classification

The MNIST dataset is the entry point into the field of deep learning. It consists of grayscale images of handwritten digits from 0 to 9. Your task is to use a deep learning model to recognise the digit. This is a multi-class classification task with ten possible output classes. A CNN (Convolutional Neural Network) can be used to carry out this classification.

The Python-based Keras package can be used to load the MNIST dataset: install Keras, import the library, and load the dataset. The training portion contains 60,000 images, of which you might use 80% for training and hold out the remaining 20% for testing.
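
An 80/20 split like the one described above can be sketched with the standard library alone. The function name `split_indices` is hypothetical, and the indices stand in for positions in the image array; in practice you would use them to slice the arrays returned by your data loader.

```python
import random

def split_indices(n_items, holdout_fraction=0.2, seed=0):
    """Shuffle the indices 0..n_items-1 and split them into
    (train, holdout) lists. A fixed seed keeps the split reproducible."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    n_holdout = round(n_items * holdout_fraction)
    return indices[n_holdout:], indices[:n_holdout]

# 60,000 stands in for the number of images available for training.
train_idx, holdout_idx = split_indices(60_000, holdout_fraction=0.2)
print(len(train_idx), len(holdout_idx))
```

Shuffling before splitting matters whenever the data is stored in a meaningful order, for example sorted by label.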

15. Pima Indian Diabetes Prediction

This project identifies people likely to develop diabetes based on factors including BMI, age, and insulin. The dataset has nine variables: eight independent variables and one target variable.

Since "diabetes" is the target variable, you must predict "1" or "0" depending on whether diabetes is present or not.

Because this is a classification task, you can try several classification models, such as logistic regression, decision tree classifiers, and random forest classifiers.
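
To show what the simplest of these models does under the hood, here is a from-scratch logistic regression trained by batch gradient descent. It is a teaching sketch, not a substitute for a library implementation: the two features, their standardised values, and the function names are all hypothetical.

```python
from math import exp

def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))

def fit_logistic(X, y, lr=0.5, epochs=500):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict_labels(X, w, b):
    """Predict 1 when the estimated probability is at least 0.5."""
    return [
        1 if sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) >= 0.5 else 0
        for xi in X
    ]

# Made-up, already standardised (glucose, BMI) pairs; 1 = diabetes present.
X = [(-1.5, -1.0), (-1.0, -0.5), (-0.5, -1.2), (0.6, 0.8), (1.0, 0.4), (1.4, 1.1)]
y = [0, 0, 0, 1, 1, 1]

w, b = fit_logistic(X, y)
accuracy = sum(p == t for p, t in zip(predict_labels(X, w, b), y)) / len(y)
print(w, b, accuracy)
```

The accuracy here is measured on the training points only; comparing models fairly requires scoring each on data it has not seen.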

If you have little to no expertise with feature engineering, you should start with this dataset because all of the independent variables are numbers.

This dataset is available to novices on Kaggle. Online tutorials show how to code the solution in Python and R. These notebook tutorials are a fantastic way to get started.

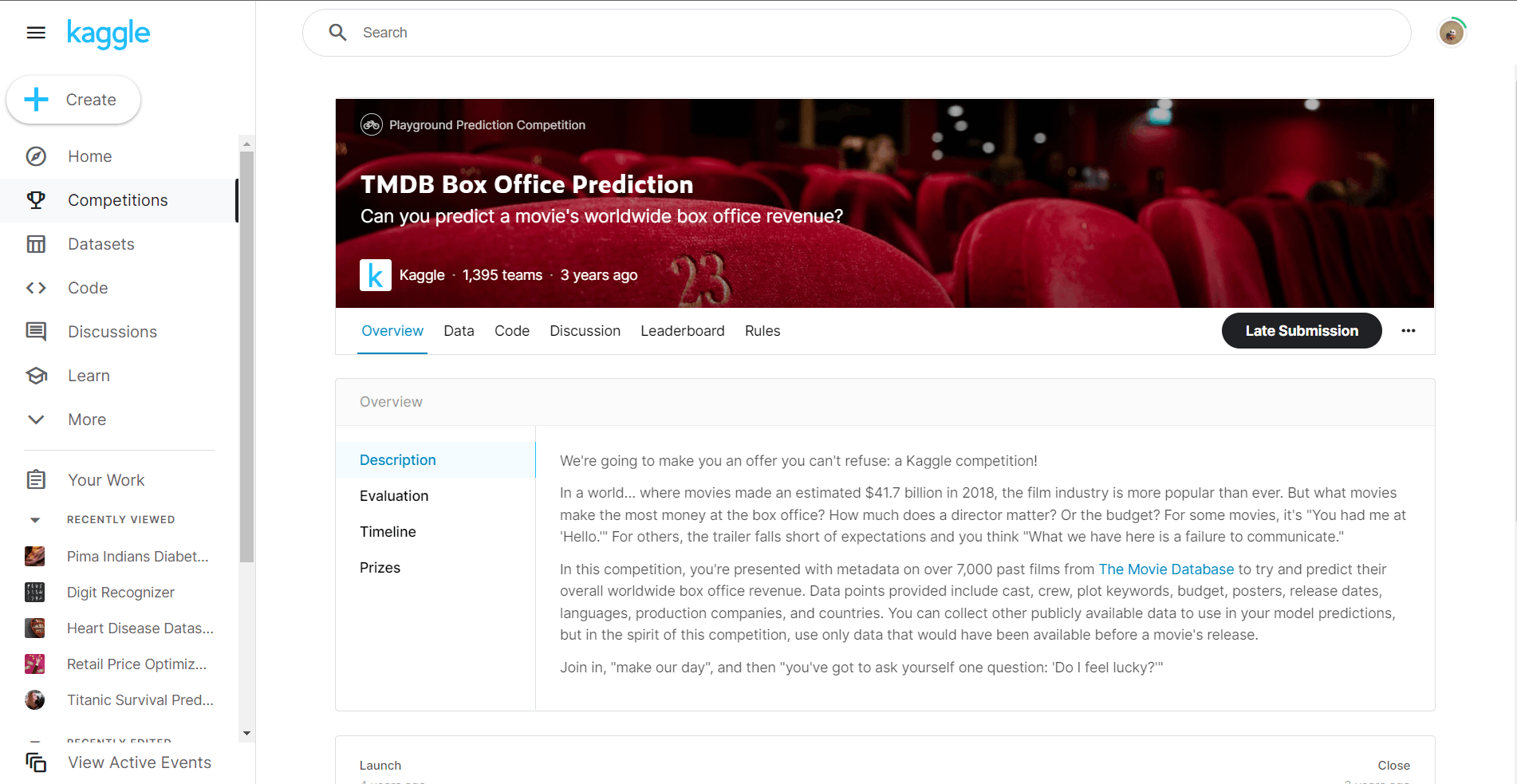

16. TMDB Box Office Prediction

Data points are available for the cast, crew, budget, languages, and release dates. The dataset consists of 23 variables, one of which is the target variable.

You can use a simple linear regression model as your baseline prediction model because it can produce an R-squared above 0.60. Use methods such as XGBoost regression or LightGBM to try to surpass this score.
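
R-squared compares the model's squared error with that of always predicting the mean: $R^2 = 1 - SS_{res} / SS_{tot}$. The sketch below fits a one-variable least-squares line and computes this score. The budget and revenue figures are made up for illustration and the helper names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    return slope, mean_y - slope * mean_x

def r_squared(ys, preds):
    """1 minus the ratio of residual to total sum of squares."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - p) ** 2 for y, p in zip(ys, preds))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot

# Made-up budget and revenue pairs, in millions.
budget = [10, 20, 30, 40, 50]
revenue = [25, 35, 70, 80, 115]

slope, intercept = fit_line(budget, revenue)
preds = [slope * x + intercept for x in budget]
score = r_squared(revenue, preds)
print(slope, intercept, score)
```

A score computed on the training data, as here, is optimistic; the baseline to beat should be measured on a validation split.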