Vectorization in Machine Learning

Vectorization in machine learning refers to converting data into arrays of numerical values, known as vectors. This is typically done to speed up machine learning algorithms, many of which are designed to operate on numerical data in vector form.

Vectorization is particularly important when working with large datasets, as non-vectorized algorithms can be slow and computationally expensive. By converting data into vectors, machine learning algorithms can operate on the data much more quickly and efficiently.

There are two main types of vectorization: data vectorization and algorithm vectorization.

- Data vectorization involves converting raw data into numerical vectors. It is typically done using one of two methods:

  - One-hot encoding converts categorical data into numerical vectors.

  - Mean normalization scales numerical data so that it falls within a specific range.

- Algorithm vectorization involves modifying the algorithms themselves to operate on vectors. It is typically done using vectorized implementations of common machine learning algorithms, such as gradient descent and k-means clustering. These implementations are designed to operate on vectors rather than individual data points, resulting in faster processing times.
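As a rough illustration of the two data vectorization methods above, the following sketch one-hot encodes a categorical feature and mean-normalizes a numerical one using NumPy. The `colors` and `heights` arrays are made-up sample data, and mean normalization is assumed here to mean subtracting the mean and dividing by the range.

```python
import numpy as np

# Hypothetical sample data, for illustration only.
colors = np.array(["red", "green", "blue", "green"])
heights = np.array([150.0, 160.0, 170.0, 180.0])

# One-hot encoding: map each category to an integer index, then pick
# the matching row of an identity matrix for every sample.
categories, indices = np.unique(colors, return_inverse=True)
one_hot = np.eye(len(categories))[indices]

# Mean normalization (assumed definition): subtract the mean and divide
# by the range, so values are centered on zero and lie within [-1, 1].
normalized = (heights - heights.mean()) / (heights.max() - heights.min())

print(categories)
print(one_hot)
print(normalized)
```

Each row of `one_hot` contains a single 1 in the column of its category, and `normalized` has a mean of zero.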

NumPy is one of the most widely used libraries for vectorization in machine learning. It is simple to vectorize data and algorithms thanks to the library's collection of functions for working with vectors and matrices.
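To make algorithm vectorization more concrete, here is a minimal sketch of gradient descent for linear regression in which each step processes all samples with matrix operations instead of a per-sample loop. The synthetic data, the `gradient_step` helper, the learning rate, and the iteration count are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical synthetic data: 200 samples, 3 features, known weights.
X = rng.normal(size=(200, 3))
true_w = np.array([2.0, -1.0, 0.5])
y = X @ true_w

def gradient_step(w, X, y, lr=0.1):
    """One gradient descent step on mean squared error, vectorized over samples."""
    residual = X @ w - y                   # prediction errors for all samples at once
    grad = 2.0 * X.T @ residual / len(y)   # gradient of the MSE with respect to w
    return w - lr * grad

# The loop over iterations remains; only the per-sample work is vectorized.
w = np.zeros(3)
for _ in range(500):
    w = gradient_step(w, X, y)

print(w)
```

Because the data is noise-free, the learned weights should end up very close to `true_w`.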

Importance

Vectorization in machine learning is crucial for a number of reasons:

- Efficiency: Vectorized operations are often significantly faster than their non-vectorized equivalents because they make use of the parallel processing capabilities of modern CPUs and GPUs. This can lead to significant speed-ups in training and inference.

- Memory: Vectorized representations can also reduce memory usage, because data is stored in compact numerical arrays rather than in a non-numerical format. This can be crucial for large-scale machine learning jobs that need to handle a lot of data.

- Simplicity: Vectorization can also simplify the code and make it more readable. It eliminates the need for explicit loops, making the code shorter and more intuitive.

- Compatibility: Many machine learning libraries, such as TensorFlow and PyTorch, operate primarily on numerical tensors. Vectorization ensures that the data is in a format that is compatible with these libraries.

Now, we will compare a vectorized dot product with an equivalent explicit Python loop to see how much vectorization accelerates a common mathematical operation.

Code

Importing Modules

import numpy as np

import time

Creating Data

np.random.seed(1) # Seed NumPy's random number generator so the results are reproducible.

# Now, let's build a couple of small arrays to show the difference.

small_x = np.random.rand(10000)

small_y = np.random.rand(10000)

# To show the difference at scale, let's now construct very large arrays (each holds 100 million float64 values, about 800 MB).

large_x = np.random.rand(100000000)

large_y = np.random.rand(100000000)

Defining Dot Function

def my_dot_(x, y):
    """Compute the dot product of two 1-D arrays with an explicit Python loop."""
    total_ = 0
    for i in range(x.shape[0]):
        total_ = total_ + x[i] * y[i]
    return total_

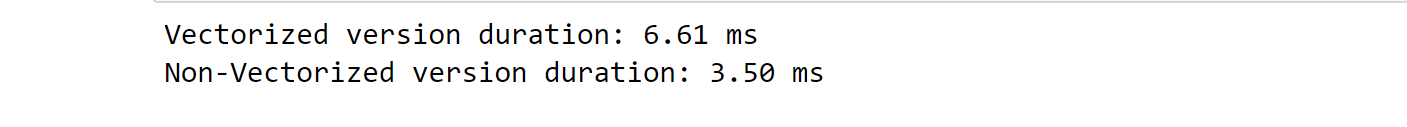

Comparing on Small Arrays

time_start = time.time() # Capturing Starting Time

np.dot(small_x, small_y)

time_end = time.time() # Capturing Ending Time

print(f"Vectorized version duration: {1000*(time_end-time_start):.2f} ms ")

time_start = time.time() # Capturing Starting Time

my_dot_(small_x, small_y)

time_end = time.time() # Capturing Ending Time

print(f"Non-Vectorized version duration: {1000*(time_end-time_start):.2f} ms ")

Output:

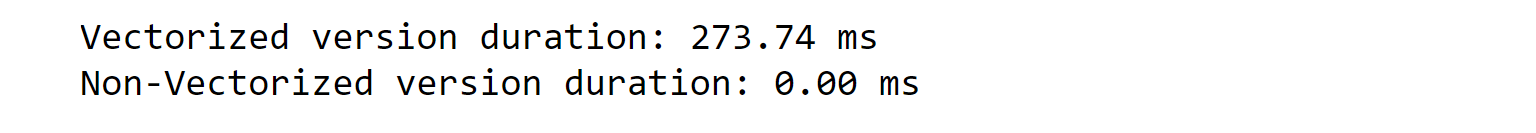

Comparing on Large Arrays

time_start = time.time() # Capture Starting Time

np.dot(large_x, large_y)

time_end = time.time() # Capture Ending Time

print(f"Vectorized version duration: {1000*(time_end-time_start):.2f} ms ")

time_start = time.time() # Capturing Starting Time

my_dot_(large_x, large_y)

time_end = time.time() # Capturing Ending Time

print(f"Non-Vectorized version duration: {1000*(time_end-time_start):.2f} ms ")

Output:

Vectorization provides a large speed-up in computation because libraries like NumPy run these operations in compiled code that can take advantage of data parallelism, rather than in an interpreted Python loop.

This is critical in machine learning, where the datasets are often very large.
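Single-shot `time.time()` measurements like the ones above can be noisy. A minimal sketch of a more repeatable comparison, assuming the standard-library `timeit` module and a hypothetical helper `loop_dot` that mirrors `my_dot_`, might look like the following. The array size of 100,000 and the repeat counts are arbitrary choices to keep the run short.

```python
import timeit
import numpy as np

def loop_dot(x, y):
    """Dot product with an explicit Python loop (hypothetical helper mirroring my_dot_)."""
    total = 0.0
    for i in range(x.shape[0]):
        total += x[i] * y[i]
    return total

rng = np.random.default_rng(1)
x = rng.random(100_000)
y = rng.random(100_000)

# Both versions should agree up to floating-point rounding.
assert np.isclose(loop_dot(x, y), np.dot(x, y))

# Take the best of several runs to reduce the effect of background noise.
vectorized = min(timeit.repeat(lambda: np.dot(x, y), number=10, repeat=5)) / 10
looped = min(timeit.repeat(lambda: loop_dot(x, y), number=1, repeat=3))

print(f"Vectorized: {1000 * vectorized:.3f} ms per call")
print(f"Loop:       {1000 * looped:.3f} ms per call")
```

Checking that both functions return the same value before timing them guards against comparing the speed of two computations that are not actually equivalent.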

In summary, vectorization is a crucial technique in machine learning that involves representing data as vectors and writing algorithms to operate on whole vectors at once. This allows machine learning algorithms to run more quickly and efficiently, particularly when working with large datasets. With the help of libraries like NumPy, vectorization is straightforward to apply in practice.