Principles of Preemptive Scheduling

In computer science, and in operating systems in particular, preemptive scheduling is a cornerstone concept that quietly shapes everyday computing. It is more than technical jargon: it is the mechanism that decides how your computer shares its processor among competing tasks, and it is a large part of why a modern system feels responsive and adaptable rather than merely efficient.

At its core, preemptive scheduling is a form of task management in which the operating system can interrupt a running process and hand the CPU to another, balancing fairness, responsiveness, and efficient resource utilization across many processes. It is a key reason your computer can run several applications, manage system processes, and respond to user input at the same time without grinding to a halt.

Historical Background

In the early days of computer systems, a different method, known as non-preemptive (or cooperative) scheduling, prevailed. In this model, a task kept the CPU until it finished or voluntarily relinquished control, so a single long-running or misbehaving task could hold up every other task. As computers evolved, the need for a more efficient and responsive approach became clear.

Crucial milestones in the evolution of preemptive scheduling are intertwined with the development of multiprogramming systems, which began to take shape in the mid-20th century. Earlier pioneers laid the groundwork: John von Neumann's description of the stored-program computer and Grace Hopper's work on compilers and high-level programming languages shaped the landscape of modern computing.

The idea of several programs sharing a single central processing unit (CPU), realized in the multiprogramming systems of the late 1950s and 1960s, marked a significant turning point in the evolution of computing. It ultimately underpinned the preemptive scheduling methods that are integral to today's operating systems.

Fast forward to the 1960s, and we see the emergence of time-sharing systems such as the Compatible Time-Sharing System (CTSS) at MIT. These systems allowed multiple users to interact with the computer at the same time by introducing time slices, or quanta, which let the CPU switch between processes at regular intervals, a fundamental component of preemptive scheduling.

As time marched on, preemptive scheduling algorithms like Round Robin and Priority Scheduling became prominent, optimizing the allocation of resources. These innovations, alongside the growing capabilities of hardware, paved the way for the efficient and responsive operating systems we rely on in the present day.

Principles of Preemptive Scheduling

Preemptive scheduling is more than a technical term; it is the method by which an operating system allocates and manages processor time, which makes it a vital part of modern computing. This section covers its core objective, the fundamental principles of task prioritization, time slicing, and context switching, and the role the scheduler plays in ensuring fairness and effective process management.

Defining Preemptive Scheduling and its Objective

Preemptive scheduling is a central scheduling technique in operating systems. Its primary goal is to distribute CPU time equitably among processes, which improves system responsiveness, resource utilization, and multitasking. It accomplishes this by allowing the operating system to interrupt a running process, typically when a timer interrupt signals that its time slice has expired or when a higher-priority process becomes ready, and give the CPU to another process according to predefined criteria.

Fundamental Principles of Preemptive Scheduling

- Task Prioritization: In priority-based preemptive schedulers, each process is assigned a priority level that reflects its importance or urgency. The scheduler runs the highest-priority ready process and preempts a running process as soon as a higher-priority one becomes ready, so critical or time-sensitive tasks are addressed promptly.

- Time Slicing (Quantum): Time slicing limits how long a process may use the CPU before the scheduler switches to another; the length of that interval is called the time slice or quantum. This prevents a single process from monopolizing the CPU, fostering fairness and responsiveness. Quanta are typically kept short so that waiting processes get the CPU quickly, at the cost of more frequent context switches.

- Context Switching: A context switch saves the state of the running process (its program counter, registers, and other execution state), loads the saved state of the next process, and transfers control to it. Because the saved state is restored exactly, a preempted process later resumes where it left off without losing data, which is what makes preemption safe to perform at almost any point.
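The three principles come together in the check a kernel might run on each timer tick. The sketch below is a hypothetical model, not any real kernel's code: the `Proc` type, the `should_preempt` function, tick-based accounting, and the convention that a lower number means a higher priority are all assumptions made for illustration. A `True` result is the point where a context switch would follow.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Proc:
    name: str
    priority: int  # assumption: lower number = higher priority


def should_preempt(running: Proc, ready: List[Proc], ticks_used: int, quantum: int) -> bool:
    """Decide on a timer tick whether the running process must give up the CPU."""
    # Task prioritization: a strictly more urgent process is ready, so preempt now.
    if any(p.priority < running.priority for p in ready):
        return True
    # Time slicing: the quantum is used up and a peer of equal priority is waiting
    # (round robin within one priority level).
    return ticks_used >= quantum and any(p.priority == running.priority for p in ready)


editor, shell = Proc("editor", 5), Proc("shell", 5)
audio, backup = Proc("audio", 1), Proc("backup", 9)
print(should_preempt(editor, [shell], ticks_used=4, quantum=4))   # True: quantum expired
print(should_preempt(editor, [shell], ticks_used=1, quantum=4))   # False: time left
print(should_preempt(editor, [audio], ticks_used=1, quantum=4))   # True: more urgent process
print(should_preempt(editor, [backup], ticks_used=9, quantum=4))  # False: only lower priority
```

In this model, quantum expiry only hands the CPU to a process of equal priority; a lower-priority process has to wait until no more urgent work is ready.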

The Role of the Scheduler

At the heart of preemptive scheduling lies the scheduler, the kernel component that decides which process should run next. It weighs factors such as process priorities, time-slice usage, and real-time requirements, and it is invoked whenever the running process blocks, finishes, or is preempted. By continuously monitoring the system's state, the scheduler allocates CPU time judiciously, executes tasks fairly, and keeps the system responsive, even under demanding conditions.
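One common way for a scheduler to answer "which process runs next?" quickly is to keep ready processes in a priority queue. This is a minimal sketch using Python's standard `heapq` module; the `ReadyQueue` class is hypothetical, a lower number is assumed to mean a higher priority, and a sequence counter breaks ties so that equal-priority processes leave in arrival order.

```python
import heapq
import itertools
from typing import List, Tuple


class ReadyQueue:
    """Ready processes ordered by priority (assumption: lower number = higher priority)."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        self._seq = itertools.count()  # tie-breaker: FIFO order among equal priorities

    def push(self, name: str, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._seq), name))

    def pop_next(self) -> str:
        """Remove and return the process the scheduler should run next."""
        _priority, _seq, name = heapq.heappop(self._heap)
        return name

    def __len__(self) -> int:
        return len(self._heap)


rq = ReadyQueue()
rq.push("backup", 9)
rq.push("shell", 1)
rq.push("editor", 1)
print([rq.pop_next() for _ in range(len(rq))])  # ['shell', 'editor', 'backup']
```

A heap gives logarithmic-time insertion and removal; real schedulers use a variety of structures (for example, per-priority lists or balanced trees), so treat this as one possible choice.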

These principles collectively enable preemptive scheduling to manage multiple processes efficiently, ensuring an operating system that can handle diverse workloads, deliver rapid response times, and make the most of system resources. It's a linchpin in providing a seamless and efficient user experience across a variety of computing devices.

Preemptive Scheduling Algorithms

In this section, we'll explore a range of preemptive scheduling algorithms that play a pivotal role in managing processes within operating systems. Each algorithm comes with its unique approach, advantages, and limitations, catering to different use cases. We'll delve into the intricacies of five key preemptive scheduling algorithms and provide insights into their real-world applications.

1. Round Robin Algorithm

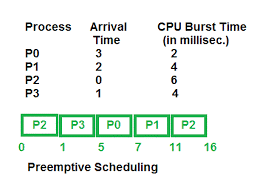

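Round Robin is one of the most widely used preemptive scheduling algorithms. Ready processes wait in a circular queue, and each runs for at most one fixed time quantum before being preempted and moved to the back of the queue. This ensures that every process gets a fair share of CPU time, which makes Round Robin effective where fairness and responsiveness are essential. Its performance, however, depends heavily on the quantum: a very short quantum spends much of the CPU's time on context switches, while a very long one makes Round Robin behave like first-come, first-served scheduling. When execution times vary widely, its average turnaround time can also be worse than that of algorithms that favour short jobs.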
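To make the circular queue concrete, here is a minimal Round Robin simulation. It assumes, for simplicity, that all processes arrive at time 0 and that context switches cost nothing; the function name and the example workloads are illustrative.

```python
from collections import deque
from typing import Dict


def round_robin(bursts: Dict[str, int], quantum: int) -> Dict[str, int]:
    """Simulate Round Robin for processes that all arrive at time 0.

    bursts maps process name -> CPU time needed, in queue order.
    Context switches are assumed to cost nothing. Returns completion times.
    """
    queue = deque(bursts.items())  # (name, remaining time) in circular order
    clock = 0
    finished: Dict[str, int] = {}
    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)  # one quantum, or less if the process finishes
        clock += run
        if remaining > run:
            queue.append((name, remaining - run))  # preempted: back of the queue
        else:
            finished[name] = clock
    return finished


print(round_robin({"A": 5, "B": 3, "C": 1}, quantum=2))    # {'C': 5, 'B': 8, 'A': 9}
print(round_robin({"A": 5, "B": 3, "C": 1}, quantum=100))  # {'A': 5, 'B': 8, 'C': 9}
```

With a quantum of 2, the short job C finishes at time 5 instead of 9; with a very large quantum, no process is ever preempted and the schedule collapses into first-come, first-served order.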

2. Priority Scheduling

In Priority Scheduling, tasks are assigned distinct priority levels, and the scheduler always runs the ready process with the highest priority; in the preemptive variant, a newly ready higher-priority process immediately takes the CPU from a lower-priority one. This approach is versatile because priorities can encode a wide range of task-specific needs. Its main drawback is "starvation": if high-priority tasks keep arriving, lower-priority processes may be postponed repeatedly in favour of them and wait excessively, or even indefinitely, for their turn to use the CPU.
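The following sketch simulates preemptive priority scheduling in unit time steps so the preemption and the postponement of low-priority work are visible. It is illustrative only: the tuple layout, the convention that a lower number means a higher priority, and the earliest-arrival tie-break are assumptions.

```python
from typing import Dict, List, Tuple


def preemptive_priority(procs: List[Tuple[str, int, int, int]]) -> Dict[str, int]:
    """Simulate preemptive priority scheduling in unit time steps.

    procs: (name, arrival, burst, priority) with positive bursts, where a lower
    number means a higher priority (an assumption). Ties go to the earlier
    arrival. Returns completion times.
    """
    remaining = {name: burst for name, _, burst, _ in procs}
    finished: Dict[str, int] = {}
    clock = 0
    while len(finished) < len(procs):
        ready = [p for p in procs if p[1] <= clock and p[0] not in finished]
        if not ready:
            clock += 1  # CPU idle until the next arrival
            continue
        # The choice is re-made every tick, so a newly arrived urgent process preempts at once.
        name = min(ready, key=lambda p: (p[3], p[1]))[0]
        remaining[name] -= 1
        clock += 1
        if remaining[name] == 0:
            finished[name] = clock
    return finished


jobs = [("low", 0, 3, 5), ("high1", 1, 2, 1), ("high2", 3, 2, 1)]
print(preemptive_priority(jobs))  # {'high1': 3, 'high2': 5, 'low': 7}
```

Here `low` arrives first and starts running, but it is preempted at time 1 and does not run again until both high-priority jobs have finished; a steady stream of such arrivals would postpone it indefinitely.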

3. Shortest Job First (SJF)

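Shortest Job First prioritizes the process with the shortest burst time. For a set of jobs that are all available at once, it minimizes the average waiting time, which makes it highly efficient on that measure. Its preemptive form, Shortest Remaining Time First (SRTF), preempts the running process whenever a newly arrived process needs less time than the running one has left. In real-world scenarios, however, exact execution times are hard to predict and must be estimated, and the frequent preemptions of the preemptive form add context-switch overhead.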
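The preemptive form of Shortest Job First is usually called Shortest Remaining Time First (SRTF). Below is a minimal unit-step simulation of it; the function name and sample jobs are hypothetical, burst times are assumed to be known exactly (which, as noted, real systems cannot do), and context switches are treated as free.

```python
from typing import Dict, List, Tuple


def srtf(procs: List[Tuple[str, int, int]]) -> Dict[str, int]:
    """Shortest Remaining Time First, simulated in unit time steps.

    procs: (name, arrival, burst) with positive bursts. Returns completion times.
    """
    arrival = {name: arr for name, arr, _ in procs}
    remaining = {name: burst for name, _, burst in procs}
    finished: Dict[str, int] = {}
    clock = 0
    while len(finished) < len(procs):
        ready = [n for n in remaining if arrival[n] <= clock and n not in finished]
        if not ready:
            clock += 1  # CPU idle until the next arrival
            continue
        # Least work left wins, so a short new arrival preempts a long-running job.
        name = min(ready, key=lambda n: (remaining[n], arrival[n]))
        remaining[name] -= 1
        clock += 1
        if remaining[name] == 0:
            finished[name] = clock
    return finished


jobs = [("P1", 0, 8), ("P2", 1, 4), ("P3", 2, 2)]
done = srtf(jobs)
waits = {name: done[name] - arr - burst for name, arr, burst in jobs}
print(done)   # {'P3': 4, 'P2': 7, 'P1': 14}
print(waits)  # {'P1': 6, 'P2': 2, 'P3': 0}
```

The long job P1 is preempted as soon as the shorter P2 arrives, and P2 in turn yields to P3, giving an average waiting time of 8/3 time units for this workload.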

4. Multilevel Queue Scheduling

Multilevel Queue Scheduling divides processes into several queues, each with its own priority and its own scheduling algorithm. This approach suits systems whose processes differ in importance or time sensitivity: for instance, real-time tasks may be placed in a higher-priority queue than background tasks. Typically, a process in a lower-priority queue runs only when all higher-priority queues are empty and is preempted as soon as one of them gains a process. While this design keeps task categories cleanly separated, it can be complex to manage and configure.
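As a concrete illustration, here is a sketch with just two queues: a hypothetical foreground queue `"fg"` scheduled with Round Robin and a background queue `"bg"` scheduled first-come, first-served, with fixed priority between them. The queue names, the default quantum of 2, and the unit-step simulation are assumptions chosen for brevity.

```python
from collections import deque
from typing import Dict, List, Tuple


def multilevel_queue(jobs: List[Tuple[str, str, int, int]], quantum: int = 2) -> Dict[str, int]:
    """Two fixed-priority queues: "fg" (Round Robin) always runs before "bg" (FCFS).

    jobs: (name, queue, arrival, burst) with positive bursts. Simulated in unit
    time steps; returns each job's completion time.
    """
    pending = deque(sorted(jobs, key=lambda j: j[2]))  # not yet arrived, by arrival time
    queues = {"fg": deque(), "bg": deque()}
    remaining = {name: burst for name, _, _, burst in jobs}
    finished: Dict[str, int] = {}
    clock = 0
    used = 0  # ticks the current fg job has used of its quantum
    while len(finished) < len(jobs):
        while pending and pending[0][2] <= clock:  # admit arrivals to their queue
            name, level, _, _ = pending.popleft()
            queues[level].append(name)
        if queues["fg"]:
            level = "fg"  # a non-empty fg queue preempts any bg job
        elif queues["bg"]:
            level = "bg"
        else:
            clock += 1  # idle until the next arrival
            continue
        name = queues[level][0]
        remaining[name] -= 1
        clock += 1
        if remaining[name] == 0:
            queues[level].popleft()
            finished[name] = clock
            used = 0
        elif level == "fg":
            used += 1
            if used == quantum:  # quantum expired: rotate to the back of fg
                queues["fg"].rotate(-1)
                used = 0
    return finished


jobs = [("backup", "bg", 0, 4), ("shell", "fg", 1, 3), ("editor", "fg", 2, 2)]
print(multilevel_queue(jobs))  # {'editor': 5, 'shell': 6, 'backup': 9}
```

The background job starts at time 0, is preempted at time 1 when `shell` arrives, and resumes only after the foreground queue is empty again.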

5. Lottery Scheduling

Lottery Scheduling introduces an element of randomness by giving each process a number of "lottery tickets." At each scheduling decision, the scheduler draws a ticket at random and runs the process that holds it. Over time, each process receives CPU time roughly in proportion to its share of the tickets, and any process holding at least one ticket has a chance to run, which protects against starvation. However, because each individual draw is random, it cannot guarantee consistent short-term performance for critical tasks.
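A single lottery draw can be implemented by picking a random ticket number and walking the processes' ticket ranges. The sketch below uses Python's standard `random.Random`, seeded so a run is repeatable; the process names and ticket counts are made up for illustration.

```python
import random
from collections import Counter
from typing import Dict


def lottery_pick(tickets: Dict[str, int], rng: random.Random) -> str:
    """Draw one winning ticket; a process wins with probability tickets / total."""
    total = sum(tickets.values())
    if total <= 0:
        raise ValueError("at least one ticket must be held")
    winner = rng.randrange(total)  # winning ticket number in [0, total)
    for name, count in tickets.items():
        if winner < count:  # the winning number falls in this process's range
            return name
        winner -= count
    raise RuntimeError("unreachable when ticket counts are non-negative")


rng = random.Random(2024)  # seeded so the run is repeatable
wins = Counter(lottery_pick({"video": 75, "indexer": 25}, rng) for _ in range(10_000))
print(wins)  # roughly 7,500 wins for "video" and 2,500 for "indexer"
```

Over many draws the 75/25 ticket split turns into an approximately 75/25 split of CPU time, while any single draw remains unpredictable.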

Real-World Applications

- Round Robin, often applied among processes of equal priority, is common in general-purpose time-sharing systems such as desktop and server operating systems, where responsive multitasking is vital.

- Priority Scheduling finds applications in real-time systems where certain tasks require immediate attention.

- Shortest Job First is applied in batch processing systems and scientific computing, where task durations are predictable.

- Multilevel Queue Scheduling is utilized in enterprise environments where tasks have varying levels of priority, such as in a server with both background and user-initiated processes.

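- Lottery Scheduling has been explored mainly as a proportional-share technique; its ideas suit shared environments such as distributed systems and cloud computing, where randomized allocation can divide resources fairly among competing users.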

Implementation and Challenges

The effective implementation of preemptive scheduling in operating systems involves a careful consideration of various technical aspects. It's not just about selecting the right algorithm; it's about designing a system that can efficiently manage multiple processes in a dynamic environment.

Technical Aspects of Implementation

Implementing preemptive scheduling requires a kernel that can regain control of the CPU, typically through a periodic hardware timer interrupt, and that can perform context switches efficiently. The kernel maintains the state of each process so that it can move smoothly between them, storing vital information such as the process's state, saved registers, and priority in data structures called process control blocks (PCBs).
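Real kernels perform the register save and restore in architecture-specific assembly, but the bookkeeping can be modelled in a few lines. In this sketch the `CPU` and `PCB` classes, their fields, and the four-register machine are hypothetical simplifications; it only shows what a context switch copies into and out of a PCB.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CPU:
    """Hypothetical register file: program counter, stack pointer, general registers."""
    pc: int = 0
    sp: int = 0
    regs: List[int] = field(default_factory=lambda: [0] * 4)


@dataclass
class PCB:
    """A greatly simplified process control block."""
    pid: int
    state: str = "ready"  # "ready", "running", or "blocked"
    saved_pc: int = 0
    saved_sp: int = 0
    saved_regs: List[int] = field(default_factory=lambda: [0] * 4)


def context_switch(cpu: CPU, old: PCB, new: PCB) -> None:
    """Switch the CPU from `old` to `new`, assuming `old` was preempted (not blocked)."""
    # 1. Save the outgoing process's CPU state into its PCB.
    old.saved_pc, old.saved_sp, old.saved_regs = cpu.pc, cpu.sp, list(cpu.regs)
    old.state = "ready"
    # 2. Restore the incoming process's saved state onto the CPU.
    cpu.pc, cpu.sp, cpu.regs = new.saved_pc, new.saved_sp, list(new.saved_regs)
    new.state = "running"


cpu = CPU(pc=100, sp=5000, regs=[1, 2, 3, 4])
a = PCB(pid=1, state="running")
b = PCB(pid=2, saved_pc=200, saved_sp=6000, saved_regs=[9, 9, 9, 9])
context_switch(cpu, a, b)  # a's registers are now safely stored in its PCB
context_switch(cpu, b, a)  # ...and restored exactly when a runs again
print(cpu.pc, cpu.regs)  # 100 [1, 2, 3, 4]
```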

Challenges and Trade-Offs

System designers face several challenges when implementing preemptive scheduling. A significant one is balancing responsiveness against overhead: shorter time slices make the system feel more responsive, but every context switch costs CPU time that does no useful work, so switching too often degrades overall performance. Another challenge is priority inversion, in which a high-priority process is forced to wait for a lower-priority process that holds a resource it needs; the delay grows worse when medium-priority processes preempt the lower-priority holder and keep it from releasing the resource.
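The responsiveness-versus-overhead trade-off can be estimated with simple arithmetic. This sketch assumes every process uses its full quantum `q` and that each switch costs a fixed `s`; then the useful fraction of CPU time is q / (q + s), and a just-preempted process may wait for the other n - 1 quanta plus n switches before it runs again. The function name and the numbers below are illustrative, not measurements.

```python
from typing import Tuple


def rr_tradeoff(quantum_ms: float, switch_ms: float, n_procs: int) -> Tuple[float, float]:
    """Estimate Round Robin's cost/benefit, assuming every process uses its full quantum.

    Returns (fraction of CPU time spent on useful work,
             worst-case wait in ms before a just-preempted process runs again).
    """
    useful = quantum_ms / (quantum_ms + switch_ms)
    # n - 1 other quanta, plus n switches (including the one back to this process).
    worst_wait = (n_procs - 1) * quantum_ms + n_procs * switch_ms
    return useful, worst_wait


# Illustrative numbers only: 10 processes, 0.1 ms per context switch.
for q in (1.0, 100.0):
    useful, wait = rr_tradeoff(q, switch_ms=0.1, n_procs=10)
    print(f"quantum={q:>5} ms  useful={useful:.1%}  worst wait={wait:.1f} ms")
```

With these numbers, a 1 ms quantum keeps about 90.9% of CPU time useful with a 10 ms worst-case wait, while a 100 ms quantum raises the useful share to about 99.9% but stretches the worst-case wait to 901 ms.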

Synchronization and Deadlocks

Because a process can be preempted at almost any instruction, including in the middle of updating shared data, preemptive systems rely on synchronization mechanisms such as semaphores, mutexes, and condition variables. These tools control access to shared resources so that concurrently running processes do not interfere with each other in undesirable ways.
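As a small illustration of why mutual exclusion matters when execution can be interrupted, the Python sketch below has four threads increment a shared counter. `counter += 1` is a read-modify-write sequence, and a thread may be switched out between the read and the write; holding a `threading.Lock` from the standard library makes each update atomic with respect to the other threads. The names and iteration counts are arbitrary.

```python
import threading

counter = 0
counter_lock = threading.Lock()


def add_many(n: int) -> None:
    """Increment the shared counter n times, holding the lock for each update."""
    global counter
    for _ in range(n):
        # Without the lock, a thread could be switched out between reading and
        # writing `counter`, and another thread's increment would be lost.
        with counter_lock:
            counter += 1


threads = [threading.Thread(target=add_many, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(counter)  # 40000
```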

Deadlocks are another critical concern in preemptive scheduling environments. A deadlock occurs when each process in a group is waiting for a resource held by another process in the same group, so none of them can proceed. Deadlock prevention techniques avoid creating such situations, while deadlock detection techniques, often based on resource allocation graphs, find them so that the system can recover gracefully, for example by terminating a process or reclaiming its resources.
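When every resource has a single instance, a resource allocation graph can be collapsed into a wait-for graph, and a deadlock corresponds to a cycle in that graph. The sketch below finds one with a depth-first search; the dictionary representation and process names are assumptions for illustration, and a real detector would also have to build the graph from the kernel's lock and resource tables.

```python
from typing import Dict, List, Optional


def find_deadlock(waits_for: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle in a wait-for graph (i.e. a deadlock), or None if there is none.

    waits_for[p] lists the processes holding resources that process p is waiting for.
    """
    UNVISITED, ON_PATH, DONE = 0, 1, 2
    state = {p: UNVISITED for p in waits_for}
    path: List[str] = []

    def visit(p: str) -> Optional[List[str]]:
        state[p] = ON_PATH
        path.append(p)
        for q in waits_for.get(p, []):
            if state.get(q, UNVISITED) == ON_PATH:
                # q is already on the current path, so the chain of waits loops back to it.
                return path[path.index(q):]
            if state.get(q, UNVISITED) == UNVISITED:
                cycle = visit(q)
                if cycle:
                    return cycle
        path.pop()
        state[p] = DONE
        return None

    for p in waits_for:
        if state[p] == UNVISITED:
            cycle = visit(p)
            if cycle:
                return cycle
    return None


print(find_deadlock({"P1": ["P2"], "P2": ["P3"], "P3": ["P1"]}))  # ['P1', 'P2', 'P3']
print(find_deadlock({"P1": ["P2"], "P2": ["P3"], "P3": []}))      # None
```

Once a cycle is reported, recovery can target one of its members, since breaking any single wait in the cycle is enough to let the others proceed.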

Real-World Applications

- Preemptive scheduling is not confined to theory; it finds extensive application in real-world scenarios and diverse industries. In the realm of modern computing, preemptive scheduling is a linchpin for ensuring efficient and responsive system operation.

- This scheduling paradigm is crucial in cloud computing, where many users share resources concurrently. It is equally important in embedded systems, which power everything from smart home devices to industrial automation. On smartphones and tablets, preemptive scheduling enables smooth multitasking.

- Prominent operating systems, such as Windows, Linux, and macOS, all incorporate preemptive scheduling to manage processes and ensure that users experience swift and reliable performance, exemplifying the widespread real-world relevance of this concept.

Advantages and Disadvantages

- Preemptive scheduling offers a range of advantages, including enhanced system responsiveness, fair resource allocation, and the ability to handle multitasking efficiently. However, it comes with certain drawbacks, such as increased context switch overhead and potential priority inversion issues.

- Choosing the right scheduling algorithm is paramount. Round Robin, for instance, prioritizes fairness and responsiveness, making it suitable for desktop systems. In contrast, Priority Scheduling is ideal for real-time applications. The key is to align the scheduling method with the specific requirements of the use case, mitigating potential disadvantages and harnessing the advantages to their fullest potential.

Conclusion

In conclusion, preemptive scheduling is a fundamental concept that underpins the efficient functioning of operating systems. Through its algorithms and principles, it provides fair task execution, responsiveness, and effective resource allocation. Strategies from Round Robin to Priority Scheduling and beyond each suit particular use cases, and while preemptive scheduling offers significant advantages, realizing them depends on choosing the algorithm that matches the system's requirements. Preemptive scheduling is the invisible hand that keeps our digital world running smoothly, and it remains a cornerstone of operating systems.