Asymptotic Notation

Asymptotic notation is a set of mathematical expressions used to describe how the complexity of an algorithm grows with the size of its input. The complexity of an algorithm is analyzed from two perspectives:

- Time complexity

- Space complexity

Time complexity

The time complexity of an algorithm is the amount of time the algorithm takes to run to completion. It is estimated by counting the number of steps the algorithm performs, expressed as a function of the input size.
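As a minimal sketch of step counting, the example below instruments a linear search so that it reports how many comparisons it made. The function name `linear_search_steps` and the choice of "one comparison = one step" are assumptions made for illustration only.

```python
def linear_search_steps(items, target):
    """Return (index, steps): the position of target (or -1) and the comparisons made."""
    steps = 0
    for index, value in enumerate(items):
        steps += 1  # count one step per element compared
        if value == target:
            return index, steps
    return -1, steps


data = list(range(1000))
print(linear_search_steps(data, 0))    # (0, 1): target is the first element
print(linear_search_steps(data, -1))   # (-1, 1000): target absent, every element compared
```

The step count grows in proportion to the length of the list when the target is missing, which is why linear search is described as taking linear time in the worst case.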

Space complexity

The space complexity of an algorithm is the amount of memory used by the algorithm. It includes two components: auxiliary space and input space. Auxiliary space is the temporary or extra space the algorithm uses during execution; input space is the memory occupied by the input itself. Space complexity is usually expressed in Big O notation, for example O(n). Many algorithms have inputs that vary in size; in that case, the space complexity depends on the size of the input.
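To illustrate auxiliary space, here is a small sketch contrasting two hypothetical functions over the same input list. The names `sum_in_place` and `prefix_sums` are illustrative and not taken from the text above.

```python
def sum_in_place(values):
    """Auxiliary space O(1): only one accumulator, regardless of input size."""
    total = 0
    for v in values:
        total += v
    return total


def prefix_sums(values):
    """Auxiliary space O(n): builds a new list with one entry per input element."""
    sums = []
    running = 0
    for v in values:
        running += v
        sums.append(running)
    return sums


print(sum_in_place([1, 2, 3, 4]))  # 10
print(prefix_sums([1, 2, 3, 4]))   # [1, 3, 6, 10]
```

Both functions read an input of size n, so their input space is the same; they differ only in the extra memory they allocate while running.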

The following asymptotic notations are used to describe algorithm complexity:

- O: Big Oh

- Ω: Big omega

- Θ: Big theta

- o: Little Oh

- ω: Little omega

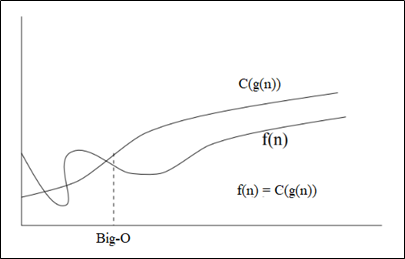

O- Big Oh: Asymptotic Notation (Upper Bound)

Big Oh (O) is the most commonly used notation. It represents an asymptotic upper bound on the growth of a function and is most often used to describe an algorithm's worst-case running time.

A function f(n) = O(g(n)) if and only if there exist positive constants C and n0 such that:

0 <= f(n) <= C(g(n)) for all n >= n0

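Therefore, g(n) is an asymptotic upper bound for f(n): for large enough n, f(n) grows no faster than a constant multiple of g(n). Note that g(n) does not have to grow strictly faster than f(n); for example, n = O(n).

For all n >= n0, the value of f(n) lies on or below C(g(n)), as shown in the graph.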

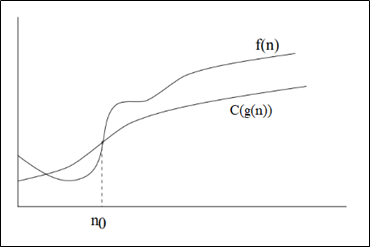
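As a worked example of the Big Oh definition, the sketch below checks numerically that f(n) = 3n + 2 is O(n). The functions `f_upper` and `g_upper` and the witnesses C = 4, n0 = 2 are assumptions chosen for illustration; a finite check like this supports the claim but the actual proof is the algebra 3n + 2 <= 4n whenever n >= 2.

```python
def f_upper(n):
    return 3 * n + 2


def g_upper(n):
    return n


C, n0 = 4, 2  # assumed witnesses: 3n + 2 <= 4n exactly when n >= 2

# Check 0 <= f(n) <= C * g(n) over a finite range starting at n0.
holds_from_n0 = all(0 <= f_upper(n) <= C * g_upper(n) for n in range(n0, 10_000))
print(holds_from_n0)                      # True

# Below n0 the inequality may fail, which is why the definition needs n0.
print(f_upper(1) <= C * g_upper(1))       # False: 5 > 4
```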

Ω- Big omega: Asymptotic Notation (Lower Bound)

The Big Omega (Ω) notation represents an asymptotic lower bound on the growth of a function. It is often used to describe an algorithm's best-case running time.

A function f(n) = Ω(g(n)) if and only if there exist positive constants C and n0 such that:

0 <= C(g(n)) <= f(n) for all n >= n0

For all n >= n0, the value of f(n) lies on or above C(g(n)), as shown in the graph.

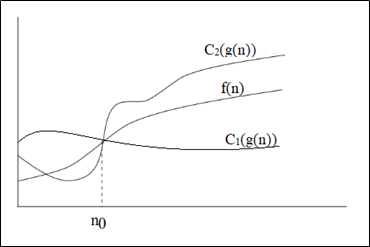
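As a worked example of the Big Omega definition, the sketch below checks that f(n) = 5n^2 - 3n is Ω(n^2). The function names and the witnesses C = 2, n0 = 1 are assumptions chosen for illustration; the underlying algebra is 5n^2 - 3n >= 2n^2 whenever n >= 1.

```python
def f_lower(n):
    return 5 * n * n - 3 * n


def g_lower(n):
    return n * n


C_low, n0_low = 2, 1  # assumed witnesses: 5n^2 - 3n >= 2n^2 when n >= 1

# Check 0 <= C * g(n) <= f(n) over a finite range starting at n0.
lower_bound_holds = all(
    0 <= C_low * g_lower(n) <= f_lower(n) for n in range(n0_low, 10_000)
)
print(lower_bound_holds)  # True
```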

Θ- Big theta: Asymptotic Notation (Tight Bound)

The Big Theta (Θ) notation describes both the upper bound and the lower bound of a function, so it defines precise asymptotic behavior. It represents the tight bound of the algorithm.

A function f(n) = Θ(g(n)) if and only if there exist positive constants C1, C2 and n0 such that:

0 <= C1(g(n)) <= f(n) <= C2(g(n)) for all n >= n0
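As a worked example of the Big Theta definition, the sketch below checks that f(n) = n^2/2 - 3n is Θ(n^2). The witnesses C1 = 1/14, C2 = 1/2 and n0 = 7 are one valid choice assumed here for illustration (other choices work too), and exact fractions are used to avoid floating-point rounding.

```python
from fractions import Fraction


def f_tight(n):
    return Fraction(n * n, 2) - 3 * n


def g_tight(n):
    return n * n


C1, C2, n0_tight = Fraction(1, 14), Fraction(1, 2), 7  # assumed witnesses

# Check 0 <= C1 * g(n) <= f(n) <= C2 * g(n) over a finite range starting at n0.
tight_bound_holds = all(
    0 <= C1 * g_tight(n) <= f_tight(n) <= C2 * g_tight(n)
    for n in range(n0_tight, 10_000)
)
print(tight_bound_holds)  # True

# Just below n0 the lower bound fails: f(6) = 0 but C1 * g(6) > 0.
print(C1 * g_tight(6) <= f_tight(6))  # False
```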

o-Little Oh: Asymptotic Notation

The Little Oh (o) notation represents an upper bound that is not asymptotically tight.

A function f(n) = o(g(n)) if and only if, for every positive constant C, there exists a constant n0 > 0 such that:

0 <= f(n) < C(g(n)) for all n >= n0

The relation f(n) = o(g(n)) implies that lim (n → ∞) [f(n) / g(n)] = 0.
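The key difference from Big Oh is that the Little Oh inequality must hold for every positive constant C, not just one. The sketch below illustrates this with f(n) = n and g(n) = n^2; the function names and the helper `n0_for` are hypothetical, and the formula inside `n0_for` is valid only for this particular pair of functions.

```python
from fractions import Fraction
import math


def f_small(n):
    return n


def g_big(n):
    return n * n


def n0_for(c):
    """Smallest n0 with f(n) < c * g(n) for all n >= n0 (specific to n and n^2)."""
    # n < c * n^2  <=>  n > 1/c  for n > 0
    return math.floor(1 / c) + 1


# However small C is made, a suitable n0 still exists.
for c in (Fraction(1), Fraction(1, 10), Fraction(1, 1000)):
    n0 = n0_for(c)
    assert all(0 <= f_small(n) < c * g_big(n) for n in range(n0, n0 + 1000))

# The ratio f(n) / g(n) = 1/n shrinks toward 0 as n grows.
ratios = [Fraction(f_small(n), g_big(n)) for n in (10, 100, 1000)]
print(ratios)
```

By contrast, n^2 is O(n^2) but not o(n^2): the ratio stays at 1, so the inequality fails for any C <= 1.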

ω- Little omega: Asymptotic Notation

The Little omega (ω) notation represents a lower bound that is not asymptotically tight.

A function f(n) = ω(g(n)) if and only if, for every positive constant C, there exists a constant n0 > 0 such that:

0 <= C(g(n)) < f(n) for all n >= n0

The relation f(n) = ω(g(n)) implies that lim (n → ∞) [f(n) / g(n)] = ∞.
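As a small illustration of Little omega, the sketch below uses f(n) = n^2 and g(n) = n. The function names and the helper `n0_for_omega` are hypothetical, and the formula inside the helper applies only to this pair of functions.

```python
import math


def f_fast(n):
    return n * n


def g_slow(n):
    return n


def n0_for_omega(c):
    """Smallest n0 with c * g(n) < f(n) for all n >= n0 (specific to n^2 and n)."""
    # c * n < n^2  <=>  n > c  for n > 0
    return math.floor(c) + 1


# However large C is made, f(n) eventually exceeds C * g(n).
for c in (1, 10, 1000):
    n0 = n0_for_omega(c)
    assert all(0 <= c * g_slow(n) < f_fast(n) for n in range(n0, n0 + 1000))

# The ratio f(n) / g(n) = n grows without bound.
growth = [f_fast(n) // g_slow(n) for n in (10, 100, 1000)]
print(growth)  # [10, 100, 1000]
```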