Who Invented the Computer?

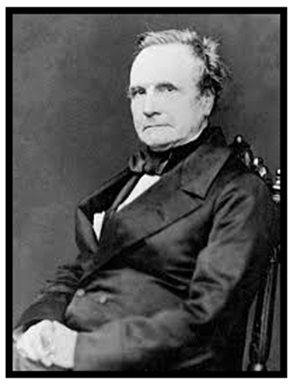

I know this is a very standard question, one we learned in standard 2. Yet it’s no surprise that most people fail to answer it, because the answer isn’t as straightforward as one might think. There could be an endless debate over whether the abacus, or a later calculating instrument such as the slide rule invented by William Oughtred in 1622, was the first computing device. On the other hand, Charles Babbage, a British mathematician, is credited with describing the first general-purpose mechanical computer in 1837 and is regarded as the father of the computer. He worked on his Analytical Engine between 1833 and 1871, and its features resemble those of the devices the entire world uses every day.

Charles and the Difference Engine

Going back in history, before Charles Babbage came up with his invention, a computer wasn’t an electronic device, or a machine of any kind. The word referred to a living person. At that time, the definition of computer was completely different from the one we know today: it was a job title given to human mathematicians who worked through problems with paper, books and tables. A human computer was responsible for carrying out the arithmetic by hand and entering the results into a table.

The invention of a prodigious device involves many people, directly or indirectly. Another man credited with inspiring Charles Babbage's invention was Napoleon Bonaparte. In the 1790s, France began switching its system of measurements from its older traditional units to metric. Masses of human computers were put to work converting the data into tables, which saved time and labor for those who relied on them.

Babbage was excited after reviewing the unpublished manuscript containing the metric tables. But the tables still contained mistakes, which infuriated Babbage and spurred him to devise a machine that could solve such problems faster, with less human intervention and fewer errors. He immediately started working on his idea for a computing machine and sought government funding to make his dream come true. In 1824, after eight years of persistent and determined effort, he produced his prototype, popularly known as the Difference Engine.

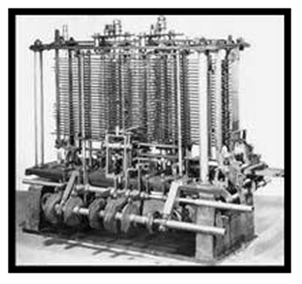

The Difference Engine was designed to compute tables of values for polynomial functions. But Charles never finished the complete machine, probably because, in the meantime, his attention had moved on to developing something far more sophisticated.
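The engine takes its name from the method of finite differences, which lets a polynomial be tabulated using nothing but repeated addition once a few starting values are set. Here is a minimal Python sketch of that idea. The function names are illustrative assumptions, and the code models the arithmetic only, not Babbage's mechanism.

```python
def evaluate(coeffs, x):
    """Evaluate a polynomial; coeffs run from lowest to highest degree."""
    return sum(c * x**i for i, c in enumerate(coeffs))


def initial_columns(coeffs):
    """Return f(0) followed by its first, second, ... differences at x = 0."""
    degree = len(coeffs) - 1
    row = [evaluate(coeffs, x) for x in range(degree + 1)]
    columns = []
    while row:
        columns.append(row[0])
        # Replace the row with the differences between neighbouring entries.
        row = [b - a for a, b in zip(row, row[1:])]
    return columns


def tabulate(coeffs, count):
    """Tabulate f(0), f(1), ... using only additions after the setup step."""
    columns = initial_columns(coeffs)
    table = []
    for _ in range(count):
        table.append(columns[0])
        # Each column absorbs the difference held in the column to its right.
        for i in range(len(columns) - 1):
            columns[i] += columns[i + 1]
    return table


# x^2 + x + 41, written lowest degree first
print(tabulate([41, 1, 1], 5))  # [41, 43, 47, 53, 61]
```

After the starting values are computed, every new table entry costs only a handful of additions, which is the kind of work a machine made of gears and wheels can do reliably.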

Charles and the Analytical Engine

Though the Difference Engine was revolutionary, Babbage didn’t settle for it and planned something even more significant. He kept working, made repeated alterations and spent several more years fulfilling his vision of a new machine: the Analytical Engine. It was designed to complete complex tasks quickly and without errors.

The Analytical Engine concept evolved further and was to be programmed with punched cards like those used to control looms. Charles started work on it in 1833 and, interestingly, in the same year he met Augusta Ada Byron (later Countess of Lovelace), the daughter of the poet Lord Byron. She was 17 years old, already a mathematician and very interested in Babbage’s ideas. In the following years, Babbage worked on the Analytical Engine design and developed 28 different plans for it. Lovelace was profoundly convinced by the Analytical Engine, optimistic about it, and saw the potential of his invention. She wrote several programs for the theoretical machine. That’s why many people see her as the very first programmer! She further proposed that the machine could be used for things other than mathematical and arithmetic calculations, such as composing music.

Two of its primary concepts are still used in modern computers. It had a central processing unit, or CPU (also known as the brain of the computer), which he called the mill, and a memory, which he called the store (where data could be kept). Charles also included a reader to fetch instructions and a device to generate output on paper. He called that output device a printer, a term we still use today. Babbage’s concepts were brilliant, and he was probably 100 years ahead of his time.
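To make the split between mill, store, instruction reader and printer concrete, here is a toy Python sketch. The card format and the operation names (`SET`, `ADD`, `SUB`, `MUL`, `PRINT`) are hypothetical, invented only for illustration; they are not Babbage's actual instruction set.

```python
def run(cards, store_size=4):
    """Run a deck of hypothetical operation cards and return the printed lines."""
    store = [0] * store_size  # the "store": numbered memory locations
    printed = []              # stands in for the printer's paper output

    # The "mill": the arithmetic operations it can perform.
    mill = {
        "ADD": lambda a, b: a + b,
        "SUB": lambda a, b: a - b,
        "MUL": lambda a, b: a * b,
    }

    for card in cards:        # the reader fetches one card at a time
        op = card[0]
        if op == "SET":       # ("SET", address, value)
            _, addr, value = card
            store[addr] = value
        elif op == "PRINT":   # ("PRINT", address)
            printed.append(str(store[card[1]]))
        else:                 # (op, source_a, source_b, destination)
            _, a, b, dest = card
            store[dest] = mill[op](store[a], store[b])
    return printed


deck = [
    ("SET", 0, 6),
    ("SET", 1, 7),
    ("MUL", 0, 1, 2),  # multiply store[0] by store[1] into store[2]
    ("PRINT", 2),
]
print(run(deck))  # ['42']
```

The point of the sketch is the separation of roles: values live in the store, arithmetic happens in the mill, and the deck of cards decides what happens next.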

However, Charles only built a trial model of the Analytical Engine and documented his ideas in about 1,000 notations and 7,000 manuscripts. His ideas thus remained theoretical, existing only in black and white (on paper), and faded into obscurity because his invention was far beyond the technology of the time. Otherwise, the entire world might have experienced the computer revolution of the 1950s a century earlier. In 1991, Charles's dream became reality when a working machine, his Difference Engine No. 2, was built at the Science Museum in London, England, using his specifications. The machine stood an incredible seven feet tall and weighed seven tons. As science and technology advanced, computers became smarter, more compact and faster, taking the shape of the modern computers we use today.