What is Parallelization?

Parallelization means performing multiple tasks simultaneously (in parallel) to accomplish a goal. In computational science, designing a computer program or system so that it processes data in parallel is known as parallelization, or parallel processing. Parallelization has been used as a computing approach for many years, especially in supercomputing.

A program or system that uses parallelism divides a problem into smaller chunks so that separate computing resources can solve them independently and simultaneously. Typically, computer programs process data serially, finishing one task before moving on to the next. When optimized for this type of computing, a parallelized program can finish significantly faster than the same program running serially.
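The divide, solve, and combine pattern described above can be sketched in a few lines of Python. This is a minimal illustration rather than a definitive implementation: the function names (`split_into_chunks`, `sum_of_squares`, `parallel_sum_of_squares`) and the choice of four workers are assumptions made for the example. It uses a thread pool because that runs anywhere without extra setup; in standard CPython, threads do not execute Python bytecode on several cores at once, so CPU-bound work would more likely use `ProcessPoolExecutor`, which offers the same `map` interface.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, n_chunks):
    """Split items into n_chunks contiguous pieces of near-equal size."""
    size, extra = divmod(len(items), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        # The first `extra` chunks each take one additional element.
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """The independent sub-problem: it needs nothing from the other chunks."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(items, n_workers=4):
    """Divide the problem, solve each chunk in a worker, combine the results."""
    chunks = split_into_chunks(items, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(parallel_sum_of_squares(list(range(1_000))))
```

The important property is that each chunk can be solved without looking at any other chunk; only the final `sum(partials)` step needs all the results together.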

In a parallel processing application, a task is broken down into smaller subtasks that are processed at the same time. The central processing unit (CPU) work and input/output (I/O) work of one process overlap with the CPU and I/O work of another, so one process can compute while another waits on I/O. This approach, also known as parallel computing, uses multiple processors to increase the system's throughput and computing performance.
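The overlap of waiting time described here can be made visible with a small experiment. The sketch below is only an illustration under stated assumptions: `fake_io_task` is a hypothetical stand-in that simulates an I/O request with `time.sleep`, and the 0.1 second wait is arbitrary. Run one after another, four such tasks take roughly the sum of their waits; run in a thread pool, the waits overlap.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_io_task(task_id, wait_seconds=0.1):
    """Hypothetical stand-in for a disk or network request: it only waits."""
    time.sleep(wait_seconds)
    return task_id


def run_serially(task_ids):
    """Run the tasks one after another and report the elapsed time."""
    start = time.perf_counter()
    results = [fake_io_task(t) for t in task_ids]
    return results, time.perf_counter() - start


def run_overlapped(task_ids):
    """Run the tasks in a thread pool so their waiting periods overlap."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=len(task_ids)) as pool:
        results = list(pool.map(fake_io_task, task_ids))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    ids = [0, 1, 2, 3]
    print("serial:     %.2f s" % run_serially(ids)[1])
    print("overlapped: %.2f s" % run_overlapped(ids)[1])
```

Exact timings depend on the machine, so none are quoted here; the point is only that the overlapped run should finish well before the serial one.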

History of Parallelization in Computing

Interest in parallel computing began to grow in the late 1950s, and breakthroughs followed in the supercomputers of the 1960s and 1970s. These early machines were multiprocessors that executed parallel computations on a single data set using a shared memory space. A new type of parallel computing emerged in the 1980s, when a supercomputer for scientific applications was built from microprocessors readily available on the general market; this system showed that excellent performance was possible with mass-market parts. Machines of this kind went on to dominate high-end computing, and in 1997 a supercomputer exceeded the threshold of one trillion floating-point operations per second.

Parallel processing is crucial for improving computing performance now that individual chips are approaching their maximum speeds. Most contemporary desktop and laptop computers contain several CPU cores, which the operating system uses to run work in parallel. A significant engineering challenge in CPU design is how each new generation of processors will cope with the physical constraints of microelectronics.

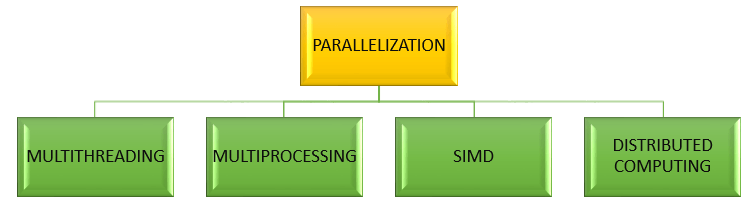

Types of Parallelization

1) Types of Parallelization in Hardware (also called Parallel Processing)

- Single Instruction, Single Data (SISD): In the SISD model, a single processor executes a single instruction stream on a single data source. It resembles the conventional serial computer. SISD executes instructions sequentially and, depending on its configuration, may or may not be able to process instructions in parallel.

- Multiple Instruction, Single Data (MISD): Each processor runs a different algorithm but uses the same input data, so MISD systems can apply several different operations to the same batch of data.

- Single Instruction, Multiple Data (SIMD): Computers based on the SIMD architecture contain multiple processors that execute the same instructions, but each processor applies those instructions to its own distinct data set. In other words, SIMD computers apply the same algorithm to several data sets at once.

- Multiple Instruction, Multiple Data (MIMD): MIMD computers contain several processors, each of which can independently receive its own instruction stream and operate on its own data.

- Single Program, Multiple Data (SPMD): An SPMD computer is built like a MIMD computer, but every processor runs the same program on its own portion of the data. SPMD is a subset of MIMD.

- Massively Parallel Processing (MPP): MPP is an architecture in which a large number of processors execute parts of a program in a coordinated way. Each processor has its own operating system and memory, so different parts of the program can be processed at the same time.
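Of the models above, SPMD is the easiest to imitate in software, and a small sketch may help make it concrete. The code below assumes a hypothetical `spmd_program` function and uses threads as stand-ins for processors; the names `rank` and `world_size` are borrowed from common message-passing terminology and are illustrative only. Every worker runs the same function, and only its rank determines which part of the data it processes.

```python
from concurrent.futures import ThreadPoolExecutor


def spmd_program(rank, world_size, data):
    """The single program: every worker runs this; only `rank` differs."""
    my_slice = data[rank::world_size]  # strided partition owned by this rank
    return sum(my_slice)


def run_spmd(data, world_size=4):
    """Launch world_size copies of the same program on different data slices."""
    with ThreadPoolExecutor(max_workers=world_size) as pool:
        futures = [
            pool.submit(spmd_program, rank, world_size, data)
            for rank in range(world_size)
        ]
        # Collect partial results in rank order.
        return [future.result() for future in futures]


if __name__ == "__main__":
    partials = run_spmd(list(range(10)), world_size=4)
    print(partials, sum(partials))
```

A MIMD-style variant would differ in one respect: each worker would be handed a different function rather than the same one.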

2) Types of Parallelization in Software (also called Parallel Computing)

- Instruction-level parallelism: Instruction-level parallelism (ILP) is the simultaneous execution of several instructions from a single program. Pipelining is one form of ILP, and the processor must exploit it to execute the instructions in the instruction stream in parallel.

- Bit-level parallelism: Bit-level parallelism is a form of parallel computing based on increasing the processor's word size. A larger word size reduces the number of instructions the processor must execute to operate on variables that are larger than the word length. For example, an 8-bit processor needs two instructions to add two 16-bit integers (the low-order bytes first, then the high-order bytes with the carry), whereas a 16-bit processor needs only one.

- Task Parallelism: Task parallelism divides a job into smaller subtasks and assigns each subtask to a separate worker. The processors then carry out the subtasks concurrently.

- Data Parallelism: Instructions from a single stream are applied concurrently to many data streams. The achievable speedup is limited by memory bandwidth and by irregular data access patterns.
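The difference between the last two items is easiest to see side by side. The following sketch is an assumption-laden illustration, not a reference implementation: `data_parallel` and `task_parallel` are hypothetical helper names, and threads stand in for the separate workers. In the data-parallel case one operation is applied to many data elements; in the task-parallel case several different operations run concurrently, here on the same input.

```python
from concurrent.futures import ThreadPoolExecutor


def data_parallel(func, items, n_workers=4):
    """Data parallelism: one operation applied to many data elements."""
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        return list(pool.map(func, items))


def task_parallel(tasks, data):
    """Task parallelism: different operations run concurrently.

    `tasks` maps a label to a function; each function gets its own worker.
    """
    with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
        futures = {name: pool.submit(func, data) for name, func in tasks.items()}
        return {name: future.result() for name, future in futures.items()}


if __name__ == "__main__":
    print(data_parallel(lambda x: x * x, [1, 2, 3]))
    print(task_parallel({"min": min, "max": max, "total": sum}, [3, 1, 2]))
```

In practice the two styles are often combined: a job is split into distinct tasks, and each task applies its own operation across a partition of the data.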