What is a bit (Binary Digit)?

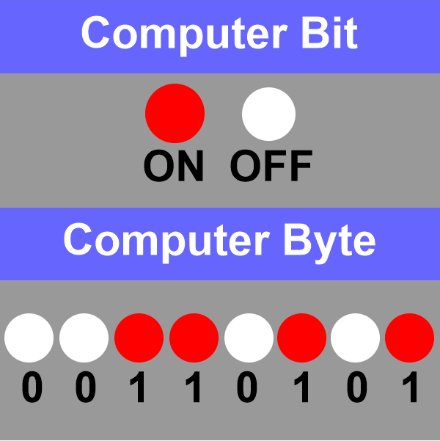

The term bit is short for binary digit. A bit is the basic unit of information in computing and digital communications. It is a binary value, meaning it can have only one of two possible states: 0 or 1. These two states are often referred to as "low" and "high," "off" and "on," or "false" and "true."

Bits represent all types of data in a computer, from simple numbers and characters to more complex data such as images and videos. A single bit can represent very little, so bits are combined into groups to represent larger amounts of data. For example, 8 bits make up a byte. A "word" is a larger group whose size depends on the processor architecture, commonly 16, 32, or 64 bits.
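To make the effect of grouping bits concrete, here is a minimal sketch in Python. The function name `distinct_values` is made up for this illustration; it simply applies the rule that a group of n bits can take 2^n different states.

```python
def distinct_values(num_bits: int) -> int:
    """Return how many distinct values a group of num_bits bits can hold."""
    return 2 ** num_bits


# Each extra bit doubles the number of representable values.
for n in (1, 8, 16, 32):
    print(f"{n:>2} bits -> {distinct_values(n)} values")
```

A byte (8 bits) can therefore hold 256 different values, which is why an unsigned byte covers the range 0 to 255.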

In digital communications, bits represent the data transmitted over a network or other communication medium. In this context, a series of bits can represent a character, a word, or a message. The speed at which bits can be transmitted is measured in bits per second (bps) or bytes per second (Bps).
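Because bps and Bps differ by a factor of 8, the two are easy to mix up. The sketch below shows the conversion. The helper names are invented for this example, and the transfer-time estimate ignores protocol overhead, so treat it as a rough lower bound rather than a real measurement.

```python
BITS_PER_BYTE = 8


def bps_to_bytes_per_second(bits_per_second: float) -> float:
    """Convert a rate in bits per second (bps) to bytes per second (Bps)."""
    return bits_per_second / BITS_PER_BYTE


def transfer_seconds(size_bytes: int, link_bps: float) -> float:
    """Idealized time to send size_bytes over a link of link_bps (no overhead)."""
    return size_bytes * BITS_PER_BYTE / link_bps


# A 100 Mbps link moves at most 12.5 million bytes per second.
print(bps_to_bytes_per_second(100_000_000))
print(transfer_seconds(1_000_000, 8_000_000))
```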

In computing, bits are also used to perform operations on data. The most basic operations are the logical operations, such as AND, OR and NOT, performed on individual bits. These operations are carried out by electronic circuits called logic gates. They form the basis of more complex operations and are the foundation of digital logic design.
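As a rough illustration of how basic gates combine into something more complex, the sketch below models AND, OR and NOT as Python functions on single bits (0 or 1) and builds a half adder from them. The function names are chosen for this example only; real gates are hardware circuits, not function calls.

```python
def AND(a: int, b: int) -> int:
    return a & b


def OR(a: int, b: int) -> int:
    return a | b


def NOT(a: int) -> int:
    # Valid only for a single bit (0 or 1).
    return 1 - a


def XOR(a: int, b: int) -> int:
    # Built only from the three basic gates: (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))


def half_adder(a: int, b: int) -> tuple:
    """Add two single bits and return (sum_bit, carry_bit)."""
    return XOR(a, b), AND(a, b)


for a in (0, 1):
    for b in (0, 1):
        print(a, "+", b, "->", half_adder(a, b))
```

Adding 1 and 1 gives a sum bit of 0 and a carry bit of 1, which is the binary number 10 (decimal 2).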

It's also worth noting that in some fields, such as quantum computing, the basic unit of information is not limited to a definite 0 or 1: a quantum bit (qubit) can exist in a superposition of both states.

Binary Number System in BIT

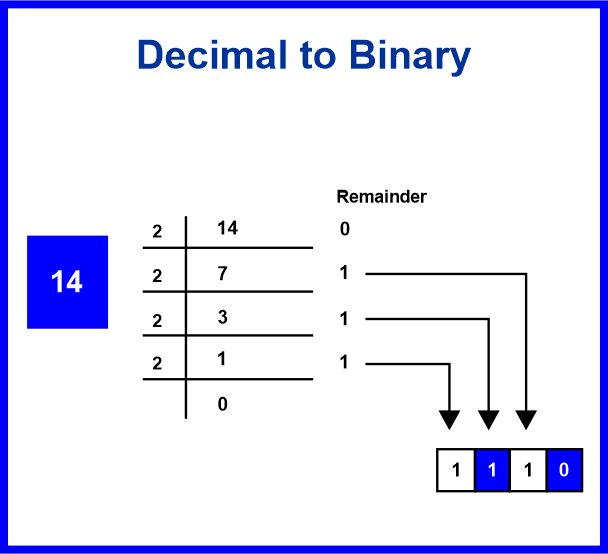

In the binary number system, all values are represented using only two digits: 0 and 1. This is in contrast to the decimal number system, which uses ten digits (0 through 9), and the hexadecimal number system, which uses 16 digits (0-9, A-F).

Each digit in a binary number represents a power of 2. The rightmost digit is the least significant bit (LSB) and represents 2^0 (or 1), the next digit to the left represents 2^1 (or 2), and so on. For example, the binary number 10101 represents the decimal value (1 × 2^4) + (0 × 2^3) + (1 × 2^2) + (0 × 2^1) + (1 × 2^0) = (1 × 16) + (0 × 8) + (1 × 4) + (0 × 2) + (1 × 1) = 16 + 4 + 1 = 21.
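The positional rule can be sketched in a few lines of Python. The function name `binary_to_decimal` is an illustrative choice; in practice Python's built-in `int(text, 2)` does the same job.

```python
def binary_to_decimal(bits: str) -> int:
    """Convert a string of 0s and 1s to its decimal value."""
    total = 0
    # Walk from the rightmost digit (the LSB, weight 2^0) to the leftmost.
    for position, digit in enumerate(reversed(bits)):
        if digit not in ("0", "1"):
            raise ValueError(f"not a binary digit: {digit!r}")
        total += int(digit) * 2 ** position
    return total


print(binary_to_decimal("10101"))  # 21
```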

Because it uses only two digits, the binary number system is particularly well-suited to digital computers, which use electronic circuits in one of only two states: on or off, high or low, 1 or 0. By representing data in binary form, computers can use these two states to represent any value.

Binary numbers underlie many computer data types, including integers, floating-point numbers, and characters. Converting a decimal number to binary, or a binary number to decimal, is called binary-decimal conversion.
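One common way to convert a decimal number to binary is repeated division by 2, collecting the remainders. The sketch below assumes non-negative integers only; the function name is illustrative, and Python's built-in `bin()` gives the same digits with a `0b` prefix.

```python
def decimal_to_binary(value: int) -> str:
    """Convert a non-negative integer to a string of binary digits."""
    if value < 0:
        raise ValueError("this sketch handles non-negative integers only")
    if value == 0:
        return "0"
    digits = []
    while value > 0:
        digits.append(str(value % 2))  # the remainder is the next bit, LSB first
        value //= 2
    return "".join(reversed(digits))


print(decimal_to_binary(21))  # 10101
```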

It's also important to note that the binary number system forms the foundation for other numbering systems like octal and hexadecimal, which group the binary digits into sets of 3 and 4, respectively, and then assign a new symbol to represent each set.
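The grouping idea can be sketched as follows: pad the binary string on the left, split it into groups of 3 (octal) or 4 (hexadecimal) bits, and replace each group with one symbol. The helper names `group_bits` and `to_base` are made up for this example.

```python
SYMBOLS = "0123456789ABCDEF"


def group_bits(bits: str, group_size: int) -> list:
    """Split a binary string into groups of group_size bits, padding on the left."""
    padded_length = -(-len(bits) // group_size) * group_size  # round up
    padded = bits.zfill(padded_length)
    return [padded[i:i + group_size] for i in range(0, padded_length, group_size)]


def to_base(bits: str, group_size: int) -> str:
    """Use group_size=3 for octal and group_size=4 for hexadecimal."""
    return "".join(SYMBOLS[int(group, 2)] for group in group_bits(bits, group_size))


print(group_bits("11010110", 4), "->", to_base("11010110", 4))  # D6
print(group_bits("11010110", 3), "->", to_base("11010110", 3))  # 326
```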

BIT Operations

BIT operations, also known as bitwise operations, are basic operations that can be performed on individual bits of data in a computer. They are used to manipulate and extract data at the bit level. They are common in low-level programming and machine-level operations, for example when decoding instructions or calculating memory addresses.

Here are some of the most common bit operations:

1) AND: This operation compares each bit of the first operand to the corresponding bit of the second. If both bits are 1, the corresponding result bit is set to 1; otherwise, the result bit is set to 0.

2) OR: This operation compares each bit of the first operand to the corresponding bit of the second operand. If either bit is 1, the corresponding result bit is set to 1.

3) NOT: This operation flips each bit of the operand. A 0 becomes 1, and a 1 becomes 0.

4) XOR (Exclusive OR): This operation compares each bit of the first operand to the corresponding bit of the second operand. If the bits are different, the corresponding result bit is set to 1.

5) Left-shift: It shifts the bits of an operand to the left, discarding the bits shifted out and zero-filling the bits moved in.

6) Right-shift: It shifts the bits of an operand to the right, discarding the bits shifted out. A logical right shift zero-fills the bits moved in; an arithmetic right shift, which many languages use for signed integers, fills them with copies of the sign bit instead.

7) Bitwise masking: It isolates specific bits of a number by ANDing the number with a mask, either to extract information or to clear specific bits to 0.

8) Bitwise set: It sets specific bits to 1 by ORing the number with a mask.

These operations are typically represented using special bitwise operators in programming languages, such as & for AND, | for OR, ~ for NOT, ^ for XOR, and << and >> for the shifts. In most programming languages these operators are defined for integer types, and the result is an integer. The size of the resulting integer can differ depending on the language and the platform.
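The operations listed above can be tried directly in Python. This is a small sketch with example values chosen for illustration. One Python-specific assumption to note: Python integers have no fixed width, so the NOT result is masked with `0xFF` here to imitate an 8-bit value.

```python
a = 0b1100
b = 0b1010
MASK_8_BITS = 0xFF  # keeps results within 8 bits (an assumption of this sketch)

and_result = a & b                  # 0b1000: 1 only where both bits are 1
or_result = a | b                   # 0b1110: 1 where either bit is 1
xor_result = a ^ b                  # 0b0110: 1 where the bits differ
not_result = ~a & MASK_8_BITS       # 0b11110011: every bit of the 8-bit value flipped
left_shifted = a << 2               # 0b110000: zeros move in on the right
right_shifted = a >> 2              # 0b11: the two lowest bits are discarded

# Masking: keep only the low 4 bits of a byte.
low_nibble = 0b10110110 & 0b00001111    # 0b0110

# Setting: force the lowest bit to 1.
with_bit_set = 0b10000000 | 0b00000001  # 0b10000001

for name, value in [("AND", and_result), ("OR", or_result), ("XOR", xor_result),
                    ("NOT", not_result), ("<<", left_shifted), (">>", right_shifted)]:
    print(f"{name:>3}: {value:08b}")
```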

Data Representation in BIT

A bit (short for binary digit) is the basic unit of data storage in a computer. A single bit holds one binary value, 0 or 1. A group of bits, typically 8 or 16, is used to represent larger data units, such as characters or integers. Data can also be represented in larger units, such as bytes, words, or double words, which are made up of multiple bits. Data can be encoded in different formats, such as ASCII (American Standard Code for Information Interchange), Unicode, and binary-coded decimal (BCD).
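To see what these formats look like at the bit level, the sketch below prints the bits behind an ASCII character, a Unicode character encoded as UTF-8 (one of several Unicode encodings, chosen here as an assumption), and a number in BCD, where each decimal digit gets its own 4 bits. The helper names are invented for this example.

```python
def to_bits(data: bytes) -> str:
    """Show each byte as 8 binary digits."""
    return " ".join(f"{byte:08b}" for byte in data)


def to_bcd(number: int) -> str:
    """Binary-coded decimal: encode each decimal digit in 4 bits."""
    return " ".join(f"{int(digit):04b}" for digit in str(number))


ascii_bits = to_bits("A".encode("ascii"))      # 01000001
utf8_bits = to_bits("\u00e9".encode("utf-8"))  # 11000011 10101001 (the letter e with an acute accent)
bcd_bits = to_bcd(59)                          # 0101 1001

print(ascii_bits)
print(utf8_bits)
print(bcd_bits)
```

Note that 59 in BCD (0101 1001) differs from 59 in plain binary (111011): BCD trades compactness for easy digit-by-digit handling.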

Data Compression in BIT

Data compression is a technique to reduce the amount of data needed to represent a given piece of information. In the context of bits, data compression is achieved by removing redundant or unnecessary bits from a data stream. Compression techniques fall into two groups: lossless and lossy.

Lossless compression techniques, such as Huffman coding, use algorithms that can compress data without losing any information. These techniques are reversible, meaning that the original data can be reconstructed exactly from the compressed data.
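A compact sketch of Huffman coding is shown below, assuming the input is a Python string and the output is a string of "0" and "1" characters rather than packed bits. The function names are illustrative. Frequent symbols receive shorter codes, and decoding recovers the original text exactly.

```python
import heapq
from collections import Counter


def build_codes(text: str) -> dict:
    """Build a Huffman code table mapping each symbol to a bit string."""
    frequencies = Counter(text)
    if not frequencies:
        return {}
    if len(frequencies) == 1:
        # Only one distinct symbol: give it a 1-bit code.
        return {next(iter(frequencies)): "0"}
    # Heap entries: (weight, tie_breaker, {symbol: code_so_far}).
    heap = [(weight, index, {symbol: ""})
            for index, (symbol, weight) in enumerate(frequencies.items())]
    heapq.heapify(heap)
    tie_breaker = len(heap)
    while len(heap) > 1:
        # Merge the two least frequent subtrees, prefixing their codes.
        weight_a, _, codes_a = heapq.heappop(heap)
        weight_b, _, codes_b = heapq.heappop(heap)
        merged = {symbol: "0" + code for symbol, code in codes_a.items()}
        merged.update({symbol: "1" + code for symbol, code in codes_b.items()})
        heapq.heappush(heap, (weight_a + weight_b, tie_breaker, merged))
        tie_breaker += 1
    return heap[0][2]


def encode(text: str, codes: dict) -> str:
    return "".join(codes[symbol] for symbol in text)


def decode(bits: str, codes: dict) -> str:
    symbols_by_code = {code: symbol for symbol, code in codes.items()}
    decoded, current = [], ""
    for bit in bits:
        current += bit
        if current in symbols_by_code:  # codes are prefix-free, so this is unambiguous
            decoded.append(symbols_by_code[current])
            current = ""
    return "".join(decoded)


text = "abracadabra"
codes = build_codes(text)
bits = encode(text, codes)
print(codes)
print(len(bits), "bits instead of", 8 * len(text))
print(decode(bits, codes) == text)
```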

Lossy compression techniques, such as JPEG compression for images, remove some of the data deemed less important. These techniques are not reversible, meaning that the original data cannot be reconstructed exactly from the compressed data.

Data compression can also be achieved by simply reducing the number of bits used to represent a piece of data. For example, instead of using 32 bits to represent an integer, we can use only 8 bits if we know that the number is never negative and never greater than 255.
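The following sketch uses Python's standard `struct` module to store the same number in 32 bits and in 8 bits. The wrapper function names are made up for this example, and the range check reflects the assumption stated above that the value fits in 0 to 255.

```python
import struct


def pack_as_32_bits(value: int) -> bytes:
    """Store the value as an unsigned 32-bit integer (4 bytes)."""
    return struct.pack("<I", value)


def pack_as_8_bits(value: int) -> bytes:
    """Store the value as an unsigned 8-bit integer (1 byte)."""
    if not 0 <= value <= 255:
        raise ValueError("value does not fit in 8 bits")
    return struct.pack("<B", value)


value = 200
wide = pack_as_32_bits(value)
narrow = pack_as_8_bits(value)
print(len(wide) * 8, "bits vs", len(narrow) * 8, "bits")
```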

It is worth noting that not all data is compressible. Some data is already compressed or is effectively random, and in such cases the compression process may even increase the size of the data.
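This effect is easy to observe with Python's standard `zlib` module, used here simply as a convenient lossless compressor. The sample inputs are arbitrary choices for this sketch: one highly repetitive, one random.

```python
import os
import zlib

repetitive = b"ABCD" * 1000      # 4000 bytes with obvious redundancy
random_data = os.urandom(4000)   # 4000 bytes with no exploitable pattern

compressed_repetitive = zlib.compress(repetitive)
compressed_random = zlib.compress(random_data)

print("repetitive:", len(repetitive), "->", len(compressed_repetitive), "bytes")
print("random:    ", len(random_data), "->", len(compressed_random), "bytes")
```

The repetitive input shrinks dramatically, while the random input typically comes out slightly larger than it went in because the compressed format adds its own header and bookkeeping.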

Error Detection and Correction in BIT

Error detection and correction refers to techniques used to identify and correct errors that may occur during the transmission or storage of data. In the context of bits, errors can occur because of noise or interference on a transmission channel, or because of hardware or software failures.

Error detection is the process of identifying errors in the data. The common approach is redundancy, where extra bits are added to the data so that errors can be detected. For example, a checksum, a simple form of redundancy, is often added to a message to detect errors. Another example is a cyclic redundancy check (CRC), a more robust form of error detection.
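The difference between a simple checksum and a CRC can be sketched as follows. The checksum here is an 8-bit sum of the bytes, one of many possible checksum designs and chosen only for illustration; the CRC is CRC-32 as provided by Python's standard `zlib.crc32`. The helper names are made up for this example.

```python
import zlib


def simple_checksum(data: bytes) -> int:
    """Sum of all byte values, kept to 8 bits."""
    return sum(data) % 256


def flip_bit(data: bytes, bit_index: int) -> bytes:
    """Return a copy of data with one bit inverted, simulating a transmission error."""
    corrupted = bytearray(data)
    corrupted[bit_index // 8] ^= 1 << (bit_index % 8)
    return bytes(corrupted)


message = b"hello, bits"
sent_checksum = simple_checksum(message)
sent_crc = zlib.crc32(message)

# Case 1: a single flipped bit. Both methods notice it.
damaged = flip_bit(message, 3)
checksum_catches_flip = simple_checksum(damaged) != sent_checksum
crc_catches_flip = zlib.crc32(damaged) != sent_crc

# Case 2: the first two bytes swapped. The byte sum is unchanged, so the
# simple checksum misses the error, while the CRC still detects it.
swapped = message[1:2] + message[0:1] + message[2:]
checksum_catches_swap = simple_checksum(swapped) != sent_checksum
crc_catches_swap = zlib.crc32(swapped) != sent_crc

print(checksum_catches_flip, crc_catches_flip)
print(checksum_catches_swap, crc_catches_swap)
```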

Error correction is the process of correcting errors that have been detected. One common method for error correction is forward error correction (FEC), where redundant data is added to the original data to allow errors to be corrected. For example, Hamming codes, a simple form of forward error correction, can be used to correct single-bit errors. Another example is the Reed-Solomon code, a more powerful form of error correction that can correct multiple errors.
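As an illustration of single-bit correction, here is a sketch of the Hamming(7,4) code, which protects 4 data bits with 3 parity bits. The bit ordering (parity bits at positions 1, 2 and 4) is one conventional layout, and the function names are illustrative.

```python
def hamming74_encode(data_bits: list) -> list:
    """Encode 4 data bits into a 7-bit codeword laid out as p1 p2 d1 p3 d2 d3 d4."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4   # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4   # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4   # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword: list) -> tuple:
    """Correct up to one flipped bit and return (data_bits, error_position).

    error_position is 0 when no error was found, otherwise 1 to 7.
    """
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_position = s1 + 2 * s2 + 4 * s3  # the syndrome names the bad position
    if error_position:
        c[error_position - 1] ^= 1         # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], error_position


codeword = hamming74_encode([1, 0, 1, 1])
received = list(codeword)
received[4] ^= 1                           # simulate an error at position 5
data, position = hamming74_decode(received)
print(data, "error at position", position)
```

If two bits are flipped, this code miscorrects, which is why stronger codes are used where multiple errors are likely.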

In some cases, error detection and correction is built into the hardware, as with a computer system's error-correcting code (ECC) memory. ECC memory uses extra bits to detect and correct errors that may occur in the data stored in memory.

It is worth noting that error detection and correction techniques add overhead to the data and may not be necessary in every situation. The choice of whether to use error detection and correction, and which method to use, depends on the specific application and the cost of errors.

Error-Correcting Code in BIT

Error-correcting codes (ECC) are a technique used to detect and correct errors that may occur in digital data during transmission or storage. In the context of bits, ECCs add redundant information to the original data, allowing errors to be detected and corrected.

There are two main approaches to error control:

1) Forward error correction (FEC): This approach adds redundant information to the original data, allowing errors to be corrected without retransmission. Examples of FEC codes include Hamming codes, Reed-Solomon codes, and BCH codes.

2) Automatic Repeat Request (ARQ): This approach does not correct errors itself. The receiver uses an error-detecting code to check the data and, through feedback to the sender, requests retransmission if errors are detected. Examples of ARQ protocols include Stop-and-wait ARQ and Go-Back-N ARQ.
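The retransmission idea behind Stop-and-wait ARQ can be sketched as a small simulation. Everything here is a simplification made for illustration: the frame layout (a 1-byte sequence number, the payload, then a CRC-32), the function names, and the "flaky channel" that damages every odd-numbered transmission are all invented, and lost acknowledgements are not modelled.

```python
import zlib


def make_frame(sequence: int, payload: bytes) -> bytes:
    """Hypothetical frame layout: sequence byte + payload + 4-byte CRC-32."""
    body = bytes([sequence]) + payload
    return body + zlib.crc32(body).to_bytes(4, "big")


def check_frame(frame: bytes):
    """Return (sequence, payload) if the CRC matches, otherwise None."""
    body, received_crc = frame[:-4], frame[-4:]
    if zlib.crc32(body).to_bytes(4, "big") != received_crc:
        return None
    return body[0], body[1:]


def make_flaky_channel():
    """A simulated channel that flips one bit in every odd-numbered transmission."""
    state = {"transmissions": 0}

    def channel(frame: bytes) -> bytes:
        state["transmissions"] += 1
        if state["transmissions"] % 2 == 1:
            damaged = bytearray(frame)
            damaged[1] ^= 0x01
            return bytes(damaged)
        return frame

    return channel


def send_stop_and_wait(payloads, channel, max_attempts=5):
    """Send one frame at a time; resend until the receiver accepts it."""
    delivered = []
    sequence = 0
    for payload in payloads:
        for _ in range(max_attempts):
            result = check_frame(channel(make_frame(sequence, payload)))
            if result is not None and result[0] == sequence:
                delivered.append(result[1])  # receiver accepts and acknowledges
                sequence ^= 1                # alternate between 0 and 1
                break
            # CRC mismatch: no acknowledgement arrives, so the sender retransmits.
        else:
            raise RuntimeError("gave up after repeated failures")
    return delivered


print(send_stop_and_wait([b"hi", b"there"], make_flaky_channel()))
```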

One of the most widely used ECCs is the Reed-Solomon code, a type of forward error correction code that can correct multiple errors and can detect errors with high probability. Reed-Solomon codes are used in many applications, such as digital television, digital storage, and satellite communications.

ECC can be used at different layers of a communication protocol stack, such as the link layer, the network layer, or the transport layer. The choice of which ECC to use depends on the specific application and the cost of errors.

It is worth noting that ECCs add overhead to the data and can increase the system's complexity. Also, every code has a limit: if more errors occur than the code is designed to handle, some errors may go uncorrected or even undetected.

Networking in BIT

Networking in the context of bits refers to the communication of data between different devices or systems using a network. A network is a collection of interconnected devices that can communicate with each other to exchange data and share resources.

There are different types of networks, including:

1) Local Area Networks (LANs): These networks connect devices within a small geographic area, such as a home or office.

2) Wide Area Networks (WANs): These networks connect devices across a larger geographic area, such as a region or country.

3) Metropolitan Area Networks (MANs): These networks connect devices within a metropolitan area.

4) Global Area Networks (GANs): These networks connect devices globally, such as the internet.

Networking in bits is based on transmitting digital data using binary signals represented by 0s and 1s. Data is typically sent in packets, each containing the data being delivered (the payload) and control information such as source and destination addresses.
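To show how a packet combines control information with data, the sketch below packs a made-up header (source address, destination address, payload length) in front of a payload using Python's standard `struct` and `ipaddress` modules. The layout is hypothetical and far simpler than a real IP header; the addresses are example values.

```python
import ipaddress
import struct

# Hypothetical header: 4-byte source, 4-byte destination, 2-byte payload length,
# all in network byte order ("!").
HEADER_FORMAT = "!4s4sH"
HEADER_SIZE = struct.calcsize(HEADER_FORMAT)


def build_packet(source: str, destination: str, payload: bytes) -> bytes:
    header = struct.pack(
        HEADER_FORMAT,
        ipaddress.IPv4Address(source).packed,
        ipaddress.IPv4Address(destination).packed,
        len(payload),
    )
    return header + payload


def parse_packet(packet: bytes) -> tuple:
    source, destination, length = struct.unpack(HEADER_FORMAT, packet[:HEADER_SIZE])
    payload = packet[HEADER_SIZE:HEADER_SIZE + length]
    return str(ipaddress.IPv4Address(source)), str(ipaddress.IPv4Address(destination)), payload


packet = build_packet("192.168.0.10", "192.168.0.20", b"hello")
print(len(packet), "bytes on the wire:", packet.hex())
print(parse_packet(packet))
```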

Networking protocols, such as TCP/IP, govern data communication over a network. These protocols specify the rules and conventions that devices must follow to communicate with each other.

In addition to the physical connections, networking in bits also involves the use of software and hardware components such as routers, switches, hubs, modems and network interfaces that are used to connect devices to the network, control the flow of data and provide security and manageability.

Networking in bits has become an essential part of modern communication and technology, allowing devices to connect and share data across various applications, including the internet, cloud computing, IoT and many others.

Cryptography in BIT

Cryptography in the context of bits refers to the practice of securing digital data by transforming it in such a way as to make it unreadable to unauthorized parties. Cryptography uses mathematical algorithms to encrypt and decrypt data, providing confidentiality, integrity and authenticity.

There are two main types of cryptography: symmetric and asymmetric.

Symmetric cryptography, also known as secret key cryptography, uses the same key for encryption and decryption. Examples of symmetric algorithms include AES, DES, and Blowfish.
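The defining property of symmetric cryptography, one shared key for both directions, can be illustrated with a toy XOR cipher. This is not AES, DES, or Blowfish, and it is not a substitute for them: it is a minimal sketch that is only safe under the one-time-pad assumptions (a truly random key as long as the message, never reused). The function name is made up for this example.

```python
import secrets


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the matching key byte (toy cipher, not for real use)."""
    if len(key) != len(data):
        raise ValueError("this sketch requires a key as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


message = b"attack at dawn"
key = secrets.token_bytes(len(message))  # the shared secret key

ciphertext = xor_bytes(message, key)     # encrypt
recovered = xor_bytes(ciphertext, key)   # decrypt with the same key

print(ciphertext.hex())
print(recovered)
```

Because XOR undoes itself, the same function and the same key serve for both encryption and decryption.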

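Asymmetric cryptography, also known as public key cryptography, uses a pair of keys: a public key, which can be shared openly, and a private key, which is kept secret. For encryption, the public key encrypts and the private key decrypts. Examples of asymmetric algorithms include RSA, Elliptic Curve Cryptography (abbreviated ECC, not to be confused with error-correcting codes), and Diffie-Hellman, which is used to agree on a shared key rather than to encrypt data directly.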
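Diffie-Hellman, one of the algorithms named above, lets two parties derive the same secret while exchanging only public values. The sketch below uses deliberately tiny numbers (modulus 23, generator 5) so the arithmetic is easy to follow; these parameters and the function names are illustrative assumptions and offer no security whatsoever.

```python
import secrets

P = 23  # public prime modulus (toy size; real systems use very large primes)
G = 5   # public generator


def make_key_pair() -> tuple:
    """Return (private_key, public_key) for the toy parameters above."""
    private = secrets.randbelow(P - 3) + 2  # a secret number in the range 2..P-2
    public = pow(G, private, P)             # safe to send over the network
    return private, public


def shared_secret(own_private: int, other_public: int) -> int:
    return pow(other_public, own_private, P)


alice_private, alice_public = make_key_pair()
bob_private, bob_public = make_key_pair()

# Each side combines its own private key with the other side's public key.
alice_secret = shared_secret(alice_private, bob_public)
bob_secret = shared_secret(bob_private, alice_public)

print(alice_secret == bob_secret)
```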

Cryptography in bits also includes the use of digital signatures and digital certificates. A digital signature is a way to ensure the authenticity and integrity of a message; it uses a private key to sign the message and a public key to verify the signature.
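The sign-with-private, verify-with-public flow can be sketched with textbook RSA on tiny numbers. The primes 61 and 53, the exponent 17, and the trick of reducing the SHA-256 digest modulo N are all simplifications assumed for this illustration; real signatures use large keys and a proper padding scheme. The three-argument `pow` with a negative exponent needs Python 3.8 or later.

```python
import hashlib

# Toy RSA parameters (far too small for real use).
P, Q = 61, 53
N = P * Q                            # public modulus
E = 17                               # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))    # private exponent (modular inverse, Python 3.8+)


def digest_as_int(message: bytes) -> int:
    """Hash the message and squeeze the digest into the toy modulus (insecure)."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Sign with the private key (D, N)."""
    return pow(digest_as_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Verify with the public key (E, N)."""
    return pow(signature, E, N) == digest_as_int(message)


message = b"transfer 10 coins to Bob"
signature = sign(message)

print(verify(message, signature))            # genuine signature
print(verify(message, (signature + 1) % N))  # altered signature
```

Anyone holding the public key can check the signature, but only the holder of the private key can produce one that verifies.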

A digital certificate is a form of identity verification that uses a combination of public key cryptography and a trusted third party, such as a certificate authority (CA), to verify the certificate holder's identity.

Cryptography in bits plays a crucial role in many areas, such as secure communication, secure data storage, secure e-commerce, and secure access to cloud services. It helps to protect sensitive information and prevent unauthorized access to data, making it an essential aspect of modern digital systems.