Types of Parallel Computing

Introduction

Parallel computing is a technique in which many calculations or processes are carried out simultaneously. Large problems can often be divided into smaller ones, which are then solved at the same time. There are several forms of parallel computing: bit-level, instruction-level, data, and task parallelism. Parallelism has long been used in high-performance computing, but it has gained broader interest because physical constraints now prevent further frequency scaling. As concern about power consumption has grown in recent years, parallel computing has taken centre stage in computer architecture, mainly in the form of multi-core processors.

Parallel computing and concurrent computing are closely related, and the two terms are often used interchangeably, although they are distinct. In parallel computing, a computational task is typically broken down into several, often many, very similar subtasks that can be processed independently and whose results are combined when the task is finished. In concurrent computing, by contrast, the processes that handle related tasks (as is typical in distributed computing) may differ in kind and often require some form of inter-process communication during execution.

Types of Parallelism

Bit-level Parallelism

From the advent of very-large-scale integration (VLSI) computer-chip fabrication technology in the 1970s until about 1986, speed-up in computer architecture was driven largely by doubling the processor word size, that is, the amount of information the processor can process per cycle. Increasing the word size reduces the number of instructions the processor must execute to operate on variables whose size exceeds the word length. For instance, if an 8-bit processor needs to add two 16-bit integers, it must first add the eight lower-order bits from each integer using the standard addition instruction and then add the eight higher-order bits using an add-with-carry instruction and the carry bit from the lower-order addition. An 8-bit processor therefore needs two instructions to complete the operation, whereas a 16-bit processor can complete it with just one.
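The two-step addition described above can be sketched in Python. This is only an illustration under stated assumptions: the function names `add16_on_8bit` and `add16_native` are hypothetical, and Python integers are masked to 8 or 16 bits to imitate fixed-width registers.

```python
def add16_on_8bit(a: int, b: int) -> int:
    """Add two 16-bit integers using only 8-bit additions (two steps)."""
    # Split each operand into a low-order and a high-order byte.
    a_lo, a_hi = a & 0xFF, (a >> 8) & 0xFF
    b_lo, b_hi = b & 0xFF, (b >> 8) & 0xFF

    # Step 1: standard addition on the eight lower-order bits.
    lo_sum = a_lo + b_lo
    carry = lo_sum >> 8      # 1 if the low-order addition overflowed 8 bits
    lo = lo_sum & 0xFF

    # Step 2: add-with-carry on the eight higher-order bits.
    hi = (a_hi + b_hi + carry) & 0xFF

    # Recombine; the result wraps at 16 bits, as a 16-bit register would.
    return (hi << 8) | lo


def add16_native(a: int, b: int) -> int:
    """The same addition as a single step on a 16-bit word."""
    return (a + b) & 0xFFFF


print(hex(add16_on_8bit(0x12FF, 0x0001)))
print(hex(add16_native(0x12FF, 0x0001)))
```

Both calls produce the same value; the 8-bit version simply needs two additions where the 16-bit version needs one.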

Historically, 4-bit microprocessors were replaced by 8-bit, then 16-bit, then 32-bit microprocessors. This trend largely came to an end with the introduction of 32-bit processors, which remained the norm in general-purpose computing for two decades. 64-bit processors did not become commonplace until the x86-64 architectures appeared in the early 2000s.

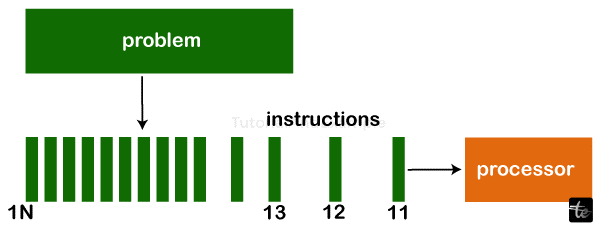

Instruction-level Parallelism

Without instruction-level parallelism, a processor can issue at most one instruction per clock cycle. However, instructions that do not depend on one another's results can be reordered and combined into groups that are then executed in parallel without changing the program's outcome. This is known as instruction-level parallelism.
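As a rough illustration of the grouping idea, the sketch below places each instruction of a tiny made-up program in the earliest group that comes after every instruction it depends on. The `issue_groups` helper and the instruction tuples are hypothetical, and the sketch assumes each register is written only once, so only read-after-write dependencies matter. Real processors and compilers use far more elaborate scheduling.

```python
def issue_groups(program):
    """Group instructions so that each group contains only independent ones.

    `program` is a list of (dest, sources, text) tuples. An instruction is
    placed one group after the latest group that produces any of its sources.
    Assumes every destination register is written exactly once.
    """
    ready_at = {}   # register name -> index of the group that produces it
    groups = []
    for dest, srcs, text in program:
        # Inputs never written by the program are available from group 0.
        level = max((ready_at[s] + 1 for s in srcs if s in ready_at), default=0)
        if level == len(groups):
            groups.append([])
        groups[level].append(text)
        ready_at[dest] = level
    return groups


program = [
    ("a", ("x", "y"), "a = x + y"),
    ("b", ("z", "w"), "b = z * w"),
    ("c", ("a", "b"), "c = a + b"),   # needs both a and b
    ("d", ("c", "x"), "d = c - x"),   # needs c
    ("e", ("z",),     "e = z + 1"),   # independent of a, b, c, d
]

for cycle, group in enumerate(issue_groups(program)):
    print(cycle, group)
```

Here five instructions fit into three groups: `a`, `b`, and `e` have no mutual dependencies and can run together, while `c` and `d` must wait for their inputs.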

Task Parallelism

Task parallelism refers to a parallel program's ability to "perform entirely different calculations on either the same or different sets of data." This contrasts with data parallelism, in which the same calculation is performed on the same or different sets of data. Task parallelism involves breaking a task down into subtasks and assigning each subtask to a processor for execution. The processors then carry out these subtasks concurrently, often cooperatively. Task parallelism does not usually scale with the size of a problem.
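The contrast between the two styles can be sketched with Python's standard `concurrent.futures` module. The sample data and the `square` helper are made up for illustration. Threads are used here only to keep the example short; in CPython they show the structure of the program rather than a real speed-up for CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor
import statistics

data = [4, 8, 15, 16, 23, 42]


def square(x):
    return x * x


with ThreadPoolExecutor(max_workers=3) as pool:
    # Data parallelism: the SAME calculation applied to different elements.
    squares = list(pool.map(square, data))

    # Task parallelism: DIFFERENT calculations submitted side by side,
    # here all working on the same data set.
    futures = {
        "total": pool.submit(sum, data),
        "largest": pool.submit(max, data),
        "mean": pool.submit(statistics.mean, data),
    }
    results = {name: future.result() for name, future in futures.items()}

print(squares)
print(results)
```

Note that the number of distinct tasks (three) is fixed by the program, whereas the data-parallel part grows with the length of `data`.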

Superword-level Parallelism

Superword-level parallelism is a vectorization technique based on loop unrolling and basic block vectorization. It differs from loop vectorization methods in that it can exploit the parallelism of inline (straight-line) code, such as code that manipulates coordinates or colour channels, or loops that have been unrolled by hand.
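The colour-channel case can be pictured with a small sketch. Python does not vectorize anything itself, so `vmul_clamp` below is a hypothetical stand-in for a single SIMD instruction. The point is only to show how four similar, independent scalar statements in straight-line code can be packed into one operation on a "superword".

```python
def brighten_scalar(r, g, b, a, gain):
    # Four isomorphic, independent statements in straight-line code:
    # the pattern a superword-level vectorizer looks for.
    r2 = min(r * gain, 255)
    g2 = min(g * gain, 255)
    b2 = min(b * gain, 255)
    a2 = min(a * gain, 255)
    return r2, g2, b2, a2


def vmul_clamp(lanes, gain, limit=255):
    """Stand-in for ONE vector instruction acting on all lanes at once."""
    return tuple(min(x * gain, limit) for x in lanes)


def brighten_packed(r, g, b, a, gain):
    pixel = (r, g, b, a)             # pack the scalars into one superword
    return vmul_clamp(pixel, gain)   # one vector operation replaces four


print(brighten_scalar(10, 100, 200, 255, 2))
print(brighten_packed(10, 100, 200, 255, 2))
```

No loop appears in the scalar version, which is why this kind of code is out of reach for loop vectorization but not for superword-level parallelism.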

Task parallelism, by comparison, breaks a task down into subtasks and assigns each subtask to a processor. The processors then carry out the subtasks concurrently.

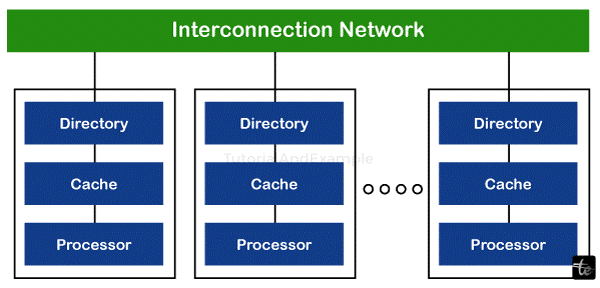

Fundamentals of Parallel Computer Architecture

Parallel computers can be categorised by the level at which the hardware supports parallelism. Parallel computer architecture and programming techniques work together to make full use of these machines. Classes of parallel computer architecture include:

- Distributed computing: The components of a distributed system are spread over several networked computers and coordinate their actions through message queues, pure HTTP, and RPC-like connectors. Component concurrency and independent component failure are important features of distributed systems. Distributed programming generally follows one of four architectures: client-server, three-tier, n-tier, or peer-to-peer. Parallel and distributed computing have much in common, and the terms are occasionally used synonymously.

- Massively parallel computing: This means using a large number of computers or computer processors to perform a set of computations in parallel. One approach is to group many processors into a tightly structured, centralised computer cluster. Another approach is grid computing, in which many widely dispersed computers work together and communicate over the Internet to solve a particular problem.
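The coordination pattern behind both entries, where independent components pull work from a queue and report results back, can be sketched on a single machine. This is only an analogy: threads and `queue.Queue` stand in for networked computers and a real message broker, and the names `worker` and `node-0` to `node-2` are hypothetical.

```python
import queue
import threading


def worker(name, tasks, results):
    """Pull work items until a None sentinel arrives, then stop."""
    while True:
        item = tasks.get()
        if item is None:
            break
        results.put((name, item, item * item))


tasks = queue.Queue()
results = queue.Queue()

# Three workers stand in for three networked machines.
workers = [
    threading.Thread(target=worker, args=(f"node-{i}", tasks, results))
    for i in range(3)
]
for w in workers:
    w.start()

for n in range(10):      # publish ten work items
    tasks.put(n)
for _ in workers:        # one stop sentinel per worker
    tasks.put(None)
for w in workers:
    w.join()

squares = sorted(value for _, _, value in (results.get() for _ in range(10)))
print(squares)
```

Which worker handles which item is not deterministic, so the results are sorted before use. A real distributed system would also have to cope with the independent component failures mentioned above, which this sketch ignores.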

Benefits of Parallel Computing

Parallel computing lets computers execute code more efficiently, which saves time and money when sorting through "big data." By bringing more resources to bear, parallel programming can also solve more complex problems. This benefits a wide range of applications, from improving solar energy to changing how the banking sector operates.

- Models the real world: Our environment is not serial. Events do not occur one after the other, each waiting for the previous one to finish. To crunch calculations on data points in weather, transportation, banking, industry, agriculture, seas, ice caps, and healthcare, we need parallel computers.

- Saves time: Serial computing forces fast processors to operate inefficiently. It is like using a Ferrari to drive 20 oranges from Maine to Boston one at a time. However fast the car is, this is inefficient compared with combining the deliveries into a single trip.

- Saves money: By saving time, parallel computing reduces costs. On a small scale, the more economical use of resources might not appear significant. Yet the cost savings become enormous once a system scales to billions of operations, as banking software does.

- Solves bigger, more complex problems: Computing is maturing. With AI and big data, a single web application may handle millions of transactions per second. Tackling "grand challenges" such as economical solar energy or cyberspace security will also take petaFLOPS of processing power. With parallel processing, we will get there more quickly.

Limitations of Parallel Computing

- It introduces challenging issues, including synchronisation and communication between multiple subtasks and processes.

- Algorithms must be structured so that a parallel mechanism can handle them.

- The programs or algorithms must be highly cohesive and loosely coupled. Such programs are hard to write, and only highly qualified programmers with advanced technical skills can write a parallelism-based system efficiently.
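The synchronisation issue in the first point can be made concrete with a minimal sketch. Several threads update one shared counter, and a lock ensures that each read-modify-write step happens as a unit. The `Counter` class and `run` function are hypothetical names chosen for this example.

```python
import threading


class Counter:
    """A shared counter whose updates are protected by a lock."""

    def __init__(self):
        self.value = 0
        self._lock = threading.Lock()

    def increment(self):
        # Without the lock, two threads could read the same old value and
        # one of the two updates would be lost.
        with self._lock:
            self.value += 1


def run(n_threads=4, n_increments=10_000):
    counter = Counter()

    def work():
        for _ in range(n_increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


print(run())
```

The lock makes the result predictable, but it also serialises the updates. That trade-off between correctness and lost parallelism is part of what makes such programs hard to write well.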

Examples of Parallel Computing

You may well be reading this article on a parallel computer, yet parallel computers have existed since the early 1960s. They range from the compact, low-cost Raspberry Pi to Summit, which debuted as the most powerful supercomputer in the world. Here are some examples of how parallel processing affects our daily lives.

- Smartphones: The iPhone 5 has a 1.3 GHz dual-core processor. The iPhone 11 has six cores, and the Samsung Galaxy Note 10 has eight. Each of these phones is an example of parallel computing.

- Desktops and laptops: The Intel® processors that power most contemporary computers are examples of parallel computing. The Intel Core™ i5 and Core i7 CPUs in the HP EliteBook x360 and HP Spectre Folio each have four processing cores. The HP Z8, billed as the world's most powerful workstation, offers 56 cores of processing power, enough to run intricate 3D simulations and edit 8K video in real time.

- ILLIAC IV: Built largely at the University of Illinois, this was the first "massively" parallel computer. It was developed during the 1960s with help from NASA and the United States Air Force. Its 64 processing elements could together handle up to 131,072 bits at once.

- The NASA Space Shuttle computer system: The Space Shuttle programme used five IBM AP-101 computers working in parallel. They controlled the spacecraft's avionics, handling rapidly changing real-time data. The computers could process 480,000 instructions per second. The same computer has also been used in the B-1 bomber and F-15 fighter jets.

- The American Summit supercomputer: When it debuted in 2018, Summit was the most powerful supercomputer on the planet. The machine was built by the United States Department of Energy at Oak Ridge National Laboratory. With 200 petaflops of processing power, it can handle 200 quadrillion operations per second. If every person on the planet performed one calculation every second, it would take them ten months to do what Summit can do in a single second. The machine weighs 340 tonnes and uses 4,000 gallons of water every minute for cooling. Scientists use it to better understand physics, weather, earthquakes, and genomes, and to create new materials that improve our quality of life.

- The Internet of Things (IoT): With 20 billion devices and almost 50 billion sensors, the floodgates are open on our everyday data flow. From pressure sensors to smart cars, drones, and soil sensors, the volume of real-time telemetry data from the IoT overwhelms traditional serial computing systems.
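The "ten months" comparison in the Summit entry above can be checked with a few lines of arithmetic. The world population figure is an assumption (roughly 7.6 billion, about the 2018 level) and is not taken from the article. The average month length of 30.44 days is likewise just a convenient approximation.

```python
SUMMIT_OPS_PER_SECOND = 200e15   # 200 petaflops, as quoted in the article
WORLD_POPULATION = 7.6e9         # ASSUMPTION: approximate 2018 population

# If each person performs one calculation per second, humanity as a whole
# performs WORLD_POPULATION calculations per second.
seconds_needed = SUMMIT_OPS_PER_SECOND / WORLD_POPULATION
days_needed = seconds_needed / 86_400    # seconds in a day
months_needed = days_needed / 30.44      # average days in a month

print(f"{days_needed:.0f} days, about {months_needed:.1f} months")
```

Under these assumptions the result comes out at roughly ten months, which agrees with the figure quoted above.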