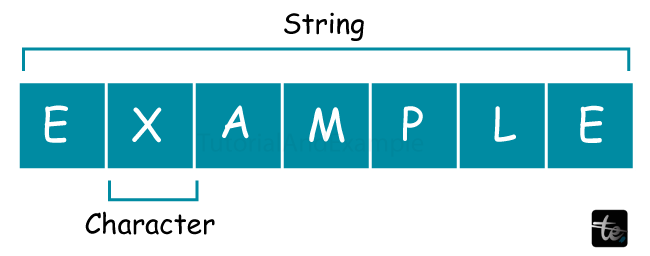

What is a String?

Traditionally, a string in computer programming is a sequence of characters, used either as a literal constant or as some kind of variable. A string variable may be fixed after creation, or it may allow its elements to be changed and its length to vary. Generally speaking, a string is a data type. It is commonly implemented as an array of bytes (or words) that stores a sequence of elements, usually characters, using some character encoding. The term string may also refer to more general sequence data types, such as arrays or lists.

Depending on the programming language and the specific data type used, a variable declared as a string may either have memory statically allocated for a preset maximum length or use dynamic allocation to hold a variable number of elements.

An anonymous string, or string literal, is a string that appears directly in source code.

In formal languages, which are used in theoretical computer science and mathematical logic, a string is a finite sequence of symbols taken from a set known as an alphabet.

Like integers and floating-point numbers, a string is a data type used in programming, but it represents text rather than numbers. It is made up of a sequence of characters, which may include numerals and spaces. For instance, both the sentence "I ate 3 hamburgers" and the word "hamburger" are strings. Even "12345" is a string if it is written as one. Programmers usually need to enclose strings in quotation marks so that the data is recognized as a string rather than as a variable name or a number.

Example:

If Option 1 and Option 2 are equal, then...

Here Option 1 and Option 2 could be variables containing strings, integers, or other forms of data. The test produces a true result if the values are equal; otherwise, it produces a false result. By contrast, consider the same test with quotation marks:

If "Option1" and "Option2" are equal, then...

Now Option1 and Option2 are treated as strings. The test therefore compares the text "Option1" with the text "Option2", and because the two differ, it yields a false result. In languages such as C, the end of a string, and therefore its length, is typically marked by a null character.
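The difference between comparing variables and comparing quoted text can be sketched in Python. The variable names and the value "blue" are assumptions chosen only for illustration.

```python
# Hypothetical variables; both happen to hold the same string value.
option1 = "blue"
option2 = "blue"

# Comparing the variables compares the values they hold.
variables_equal = option1 == option2

# Comparing the quoted names compares the literal text itself.
literals_equal = "Option1" == "Option2"

print(variables_equal)  # True
print(literals_equal)   # False
```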

Use of Strings

Strings are usually used to store human-readable text, such as words and phrases. They carry information between a computer program and its user: the program displays strings to the user, and the user may enter characters into the program. Data that is expressed as characters but is not intended for human reading may also be stored as strings.

Examples:

- A message that the software shows to end users, such as "file upload complete." This message would appear as a string literal in the program's source code.

- User-entered status updates on social networking sites, such as "I got a new job today." The application would generally store this string in a database rather than as a string literal.

- Alphabetic data showing DNA nucleic acid sequences, such as "AGATGCCGT."

- Computer parameters or settings, such as "?action=edit" in a URL query string. These are mainly intended for communication with machines, but they typically contain some human-readable text as well.
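As a small sketch of the last case, Python's standard `urllib.parse` module can pull the query string out of a URL and split it into parameters. The URL itself is a made-up example.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical URL containing the query string from the example above.
url = "https://example.org/wiki/Page?action=edit"

query = urlsplit(url).query   # the raw text after the "?"
params = parse_qs(query)      # maps each key to a list of values

print(query)   # action=edit
print(params)  # {'action': ['edit']}
```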

The word "string" refers to strings of characters when used without qualification, but it may also refer to a succession of data or computer records other than characters, such as a "string of bits."

Length of String

Although formal strings may have any finite length, real programming languages often limit the length of a string to an artificial maximum. String data types fall into two broad categories: fixed-length strings, which have a maximum length determined at compile time and use the same amount of memory whether or not that maximum is needed, and variable-length strings, whose length is not fixed in advance and which may use different amounts of memory at run time depending on actual requirements. Most strings in modern programming languages are variable-length strings. Even so, the length of a variable-length string is limited by the amount of available memory. The length of a string can be stored explicitly as a separate integer, which may impose another artificial limit on the length, or implicitly through a termination character, usually a character value with all bits zero, as in the C programming language.
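The two ways of recording a string's length can be sketched over raw bytes in Python. This is an illustration only: the one-byte length prefix is an assumption chosen to make the artificial limit visible, not a description of any particular language's layout.

```python
# Two ways to record where a string ends, sketched over raw bytes.
text = b"hello"

# 1. Terminator: append a zero byte, then scan for it to find the length.
terminated = text + b"\x00"
length_by_scan = terminated.index(0)

# 2. Length prefix: store the length in one leading byte.
#    A one-byte prefix caps the length at 255, an artificial limit.
prefixed = bytes([len(text)]) + text
length_by_prefix = prefixed[0]

print(length_by_scan, length_by_prefix)  # 5 5
```

The terminator approach cannot store a zero byte inside the string, while the prefix approach can but is bounded by the size of the prefix.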

Encoding of Characters

String data types have historically allocated one byte per character. Although the exact character set varied by region, the character encodings were similar enough that programmers could often get away with ignoring the differences. This is because the characters a program treated specially, such as the period, space, and comma, were in the same position in all the encodings a program would encounter. These character sets were typically based on ASCII or EBCDIC. When text in one encoding was displayed on a system that used a different encoding, it was often garbled but still fairly readable, and some computer users learned to read the garbled text.
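The garbling effect is easy to reproduce. The sketch below encodes a word in one encoding and decodes the same bytes with another; the choice of UTF-8 and Latin-1 (ISO 8859-1) is an assumption made for illustration.

```python
original = "caf\u00e9"  # the word "café"

# Encode with UTF-8, then decode the same bytes as Latin-1 (ISO 8859-1).
mangled = original.encode("utf-8").decode("latin-1")

print(mangled)  # cafÃ©

# The ASCII letters survive because both encodings place them identically;
# only the accented letter is garbled.
```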

Unicode has simplified the picture somewhat. Most programming languages now have a data type for Unicode strings. UTF-8, Unicode's preferred byte stream format, is designed to avoid the problems of older multibyte encodings. UTF-8, UTF-16, and UTF-32 all require the programmer to understand that the fixed-size code units are different from "characters"; the main difficulty today is poorly designed APIs that try to hide this difference.

Implementation

Some languages, such as Ruby and C++, allow the contents of a string to be changed after it has been created; these are called mutable strings. In other languages, such as Python and Java, the value is fixed, and any alteration requires constructing a new string; these are called immutable strings. Some languages with immutable strings also provide a separate mutable type, such as the Java and .NET StringBuilder, the thread-safe Java StringBuffer, and the Cocoa NSMutableString. Strings are typically implemented as arrays of bytes, characters, or code units in order to offer fast access to individual units or substrings (including characters, when they have a fixed size). A few languages, such as Haskell, implement them as linked lists instead.
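Python's immutable strings can be used to sketch the distinction. The list-of-characters workaround shown here is one common approach, not the only one.

```python
s = "hello"

# Python strings are immutable: assigning to an index raises TypeError.
try:
    s[0] = "J"
    changed_in_place = True
except TypeError:
    changed_in_place = False

# An "edit" therefore builds a new string object.
t = "J" + s[1:]

# A mutable alternative for text assembly is a list of characters
# (io.StringIO is another), joined once at the end.
chars = list(s)
chars[0] = "J"
u = "".join(chars)

print(changed_in_place, s, t, u)  # False hello Jello Jello
```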

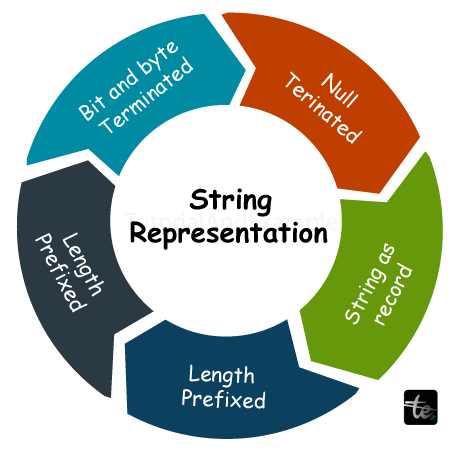

String Representation

String representations depend heavily on the choice of character repertoire and the method of character encoding. Older string implementations were designed to work with the repertoire and encoding defined by ASCII, or with more recent extensions such as the ISO (International Organization for Standardization) 8859 series. Modern implementations often use the extensive repertoire defined by Unicode along with a variety of more complex encodings, such as UTF-8 and UTF-16.

The term "byte string" usually refers to a general-purpose sequence of bytes, rather than a string of only (readable) characters, a string of bits, or anything similar. Byte strings generally imply that a byte may take any value and that any data can be stored as-is, which means no value should be interpreted as a termination value.
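Python's built-in `bytes` type behaves like a byte string in this sense. In the minimal sketch below, the zero byte is stored as ordinary data rather than treated as a terminator.

```python
# A byte string may hold any value from 0 to 255, including zero.
data = bytes([72, 105, 0, 255])

print(len(data))   # 4: the zero byte is data, not a terminator
print(data[2])     # 0
print(list(data))  # [72, 105, 0, 255]
```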

Most string implementations closely resemble variable-length arrays whose entries store the character codes of the corresponding characters. The crucial difference is that, with some encodings, a single logical character may occupy more than one entry in the array.

This happens, for example, with UTF-8, where a single code (a UCS code point) can take anywhere from one to four bytes, and a single character can take an arbitrary number of codes. In these cases, the logical length of the string (the number of characters) differs from the physical length of the array (the number of bytes in use). UTF-32 avoids the first part of the problem.
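The gap between logical and physical length can be measured directly. The sample word is an assumption; "utf-32-le" is used so that no byte-order mark is added to the count.

```python
text = "na\u00efve"  # "naïve": five characters, one outside ASCII

code_points = len(text)                      # logical length
utf8_bytes = len(text.encode("utf-8"))       # the accented letter takes two bytes
utf32_bytes = len(text.encode("utf-32-le"))  # always four bytes per code point

print(code_points, utf8_bytes, utf32_bytes)  # 5 6 20

# One visible character can still span several code points:
# "e" followed by U+0301 (combining acute accent) renders as a single "é".
combined = "e\u0301"
print(len(combined))  # 2
```

The last two lines show the second part of the problem, which UTF-32 does not solve: a fixed-size code unit per code point still does not guarantee one unit per visible character.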

Features of Strings

Strings possess the following features when it comes to Data Structures and Algorithms:

- Ordered: Strings are character sequences that are structured in an ordered form, with each character having a specified location within the string.

- Indexable: Strings are indexable, which implies that a numerical index may be used to obtain particular characters within a string.

- Comparable: The relative order or equality of strings may be established by comparing them to one another.
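These three features can be seen in a few lines of Python. The sample words are arbitrary.

```python
word = "hamburger"

# Ordered and indexable: each character has a fixed position.
first = word[0]      # 'h'
last = word[-1]      # 'r'
middle = word[3:6]   # 'bur'

# Comparable: equality and lexicographic ordering.
same = word == "hamburger"    # True
before = "apple" < "banana"   # True

print(first, last, middle, same, before)
```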

Applications of Strings

Strings are widely used in computer science and have a wide variety of applications, including:

- Text Processing: In word processors, text editors, and other text-related applications, strings are used to represent and change text data.

- Pattern Matching: To extract or process data in a specific way, strings may be searched for patterns, such as regular expressions or specified substrings.

- Data Compression: Strings can be compressed so that they take up less storage space. Data compression applications commonly use string compression techniques such as run-length encoding and Huffman coding.
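Two of these applications can be sketched briefly. The function names `run_length_encode` and `run_length_decode` are hypothetical helpers written for this illustration; `re.findall` and `itertools.groupby` are standard library calls.

```python
import re
from itertools import groupby

def run_length_encode(text):
    """Collapse each run of repeated characters into (char, count)."""
    return [(char, len(list(run))) for char, run in groupby(text)]

def run_length_decode(pairs):
    """Rebuild the original text from (char, count) pairs."""
    return "".join(char * count for char, count in pairs)

encoded = run_length_encode("AAAABBBCCD")
print(encoded)                     # [('A', 4), ('B', 3), ('C', 2), ('D', 1)]
print(run_length_decode(encoded))  # AAAABBBCCD

# Pattern matching: find every run of digits in a sentence.
print(re.findall(r"\d+", "I ate 3 hamburgers and 12 fries"))  # ['3', '12']
```

Run-length encoding only saves space when the input contains long runs; on text without repetition the encoded form is larger than the original.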

String in C

The syntax for defining a string in C is:

char string_variable_name[array_size];

char c[] = "Hello World";

How Can a String Be Declared in C:

In the C language, there are two methods for declaring a string.

- Through a char array

char hello[6] = {'W', 'o', 'r', 'l', 'd', '\0'};

- Using string literal

char hello[] = "World";

String Representation in Java

Strings could be stored as character arrays, but Java's designers chose to build in more advanced support. Java provides String as a predefined type, so instead of declaring a string as a character array, you can declare and use it directly.

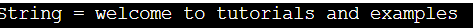

Example:

public class Main

{

public static void main(String []args)

{

String str = new String("welcome to tutorials and examples");

System.out.println( "String = " + str );

}

}

Output:

String = welcome to tutorials and examples

String Representation in Python

In Python, creating and displaying a string is as simple as assigning a sequence of characters enclosed in single or double quotes to a variable.

Example:

variable2 = "Welcome to tutorials and examples"

print("string =", variable2)

Output:

string = Welcome to tutorials and examples

String Advantages

- Widely Supported: Strings are a fundamental data type in most programming languages, so they are broadly supported and readily available.

- Effective Manipulation: A range of techniques, including string matching algorithms, string compression techniques, and data structures such as tries and suffix arrays, have been designed to manipulate strings efficiently.

- Ability to represent Real-World Data: Strings are a handy tool in many applications because they can be used to represent real-world data, including names, addresses, and other text data types.

- Text mining and natural language processing: Text mining and natural language processing technologies, like sentiment analysis and named entity recognition, require strings as input.

String Disadvantages

- Encoding Issues: Different encodings for strings, like UTF-8 or UTF-16, could lead to compatibility concerns when processing strings from diverse sources.

- Immutable: In many languages, strings are immutable, meaning they cannot be changed once they are created. Because a new string has to be created for each update, this can add overhead when strings are modified frequently.

- Concatenating Strings: Concatenating strings can be costly, since it involves creating a new string and copying every character from the source strings into it.
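The concatenation cost can be sketched in Python by contrasting repeated concatenation with a single join. The word list is an arbitrary example, and actual performance depends on the language implementation.

```python
words = ["file", "upload", "complete"]

# Repeated concatenation: each step may build a brand-new string
# and copy everything accumulated so far.
message = ""
for word in words:
    message = message + word + " "
message = message.rstrip()

# Joining once lets the result be assembled in a single operation.
joined = " ".join(words)

print(message == joined)  # True
print(joined)             # file upload complete
```

For a handful of short strings the difference is negligible; it matters mainly when many pieces are assembled in a loop.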

Conclusion

There are times when strings resemble other data types. But bear in mind that even if a number looks to be a number or a boolean seems to be a boolean, you should always double-check that it's not a string to prevent using it erroneously!
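A minimal sketch of such a check in Python follows. The helper name `describe` is hypothetical and exists only for this illustration.

```python
def describe(value):
    """Report whether a value is really a number or only looks like one."""
    if isinstance(value, str):
        return "string"
    # bool is tested before int because bool is a subclass of int.
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    return "other"

print(describe("12345"))  # string
print(describe(12345))    # number
print(describe("True"))   # string
print(describe(True))     # boolean

# The same symbol behaves differently for the two types.
print("12" + "3")  # 123  (concatenation)
print(12 + 3)      # 15   (addition)
```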